Island Grid BESS: Full Engineering Guide 2026 (Design & Sizing)

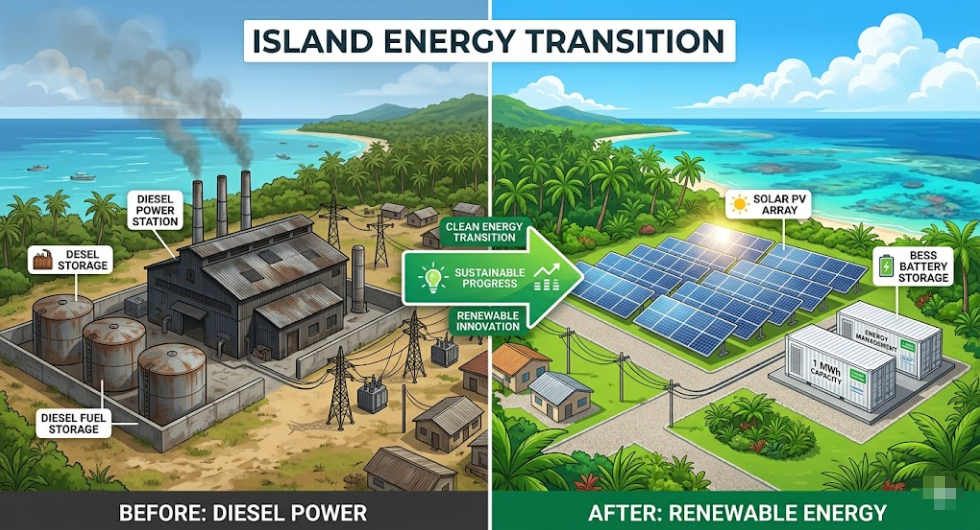

Island Grid BESS addresses one of the most overlooked power problems in the world. More than 10,000 inhabited islands still run on diesel generators. Add remote mining camps, offshore platforms, and rural areas with no grid access, and the scale of the challenge becomes clear.

All of these locations share the same problem. They need a stable, reliable grid, but they have no utility to rely on. For decades, diesel was the only answer. Today, in 2026, Island Grid BESS is replacing diesel as the backbone technology. It responds faster, runs more reliably, and costs less over its lifetime.

This guide covers everything you need. It explains how Island Grid BESS works and how it differs from standard storage. It also shows you how to size a system, which control architecture to pick, and how to build a strong financial case.

📌 QUICK DEFINITION

What is Island Grid BESS?

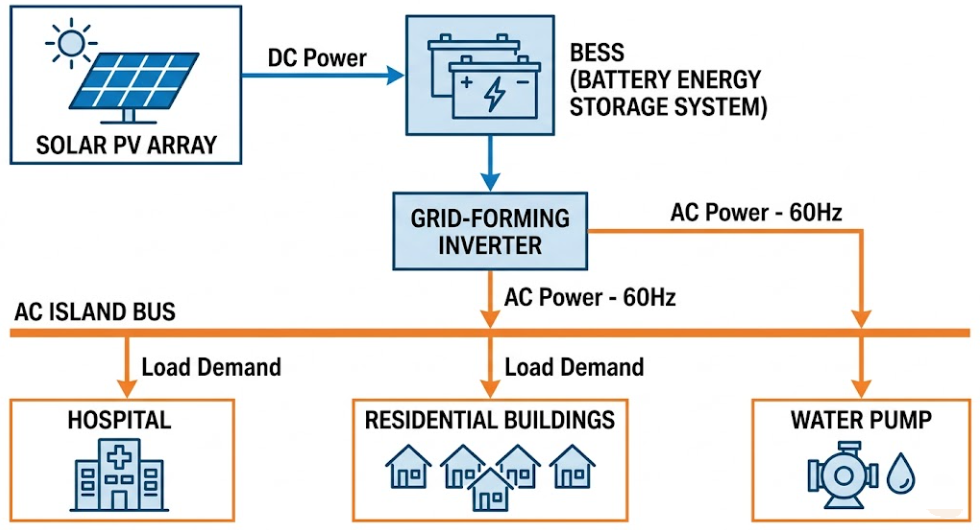

Island Grid BESS is a Battery Energy Storage System that acts as the main voltage and frequency source on an isolated network. It has no connection to a utility grid. Unlike a grid-connected BESS that follows an existing grid signal, an Island Grid BESS creates the grid itself. It keeps power stable for all loads using stored energy, renewables, or both.

Table of Contents

- 01 — Why Island Grids Are a Different Engineering Problem

- 02 — Island Grid BESS vs Grid-Connected BESS: Core Differences

- 03 — The Four Critical Functions of Island Grid BESS

- 04 — Control Architecture: Why Island Grids Need Grid-Forming BESS

- 05 — Island Grid BESS Sizing: A Four-Step Method

- 06 — Battery Chemistry: Why LFP Dominates Island Grid BESS in 2026

- 07 — Solar-Plus-BESS Island Grid Architecture

- 08 — Wind-Plus-BESS Island Grid Architecture

- 09 — Diesel Hybrid Island Grids: The Three-Phase Transition Path

- 10 — Real-World Island Grid BESS Case Studies

- 11 — Island Grid BESS Sizing Reference Table

- 12 — Financial Case: Island Grid BESS vs Diesel Over 25 Years

- 13 — Key Technical Challenges and Practical Solutions

- 14 — Frequently Asked Questions

- What is Island Grid BESS and how does it differ from standard BESS?

- Can a grid-following BESS be used on an island grid?

- How many hours of storage does an Island Grid BESS need?

- What battery chemistry is best for Island Grid BESS?

- How does Island Grid BESS handle a complete power failure?

- Can renewable energy cover 100% of an island's power needs with Island Grid BESS?

- What does an Island Grid BESS project typically cost?

- 15 — Related Articles on SunLith Energy

- External References

01 — Why Island Grids Are a Different Engineering Problem

A standard grid-connected BESS has a utility grid behind it as backup. If renewable generation drops or demand spikes, the utility absorbs the imbalance. Frequency and voltage stay stable because thousands of generators share the load.

Island grids, however, have none of that.

No Backup, No Room for Error

On an island grid, every watt consumed must be generated or discharged locally. There is no utility to fill the gap. When a cloud shadow crosses a solar array, the BESS must respond in milliseconds. When a pump starts, the island grid must match that load instantly.

This is why Island Grid BESS is a different engineering discipline. The physics are harder. The control requirements are stricter. Also, the cost of failure is much higher — a blackout means the entire island or facility loses power.

The Good News: The Technology Has Matured Fast

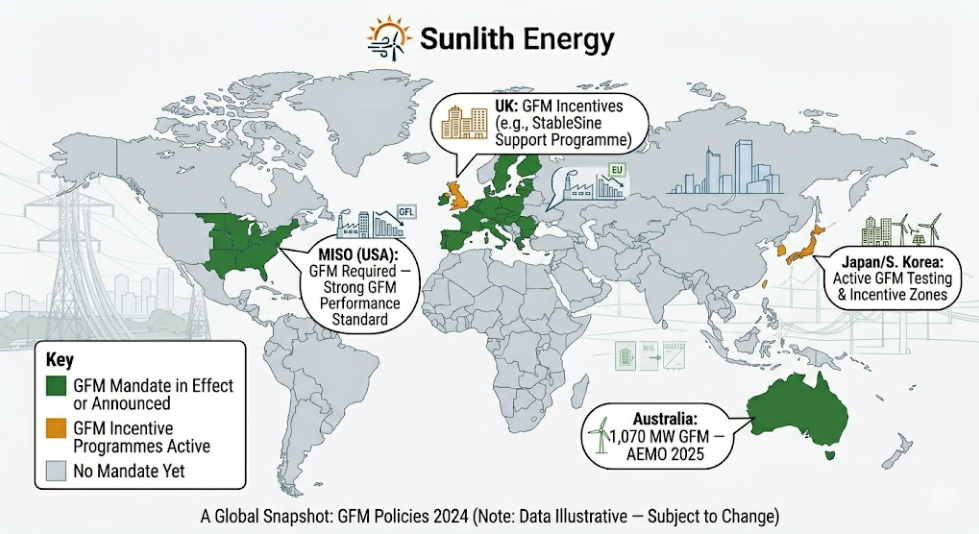

Despite those challenges, Island Grid BESS technology has improved a great deal since 2022. Systems now running on remote islands in Australia, the Pacific, and Scandinavia are hitting 99.98% availability. That figure is better than the diesel generators they replaced.

02 — Island Grid BESS vs Grid-Connected BESS: Core Differences

The difference between these two systems matters greatly for engineering and procurement. The table below shows the ten most important distinctions.

| Dimension | Grid-Connected BESS | Island Grid BESS |

|---|---|---|

| Voltage reference | Utility grid provides it | BESS creates it internally |

| Inverter control mode | Grid-following (GFL) | Grid-forming (GFM) required |

| Frequency regulation | Supports grid frequency | IS the frequency — no backup |

| Black start | Not typically required | Mandatory |

| Fault current | Utility provides it | BESS must supply it |

| Spinning reserve | Not required | Required at all times |

| Load sensitivity | Low — utility absorbs swings | High — every load step must be matched |

| Renewable integration | Flexible | Precise EMS essential |

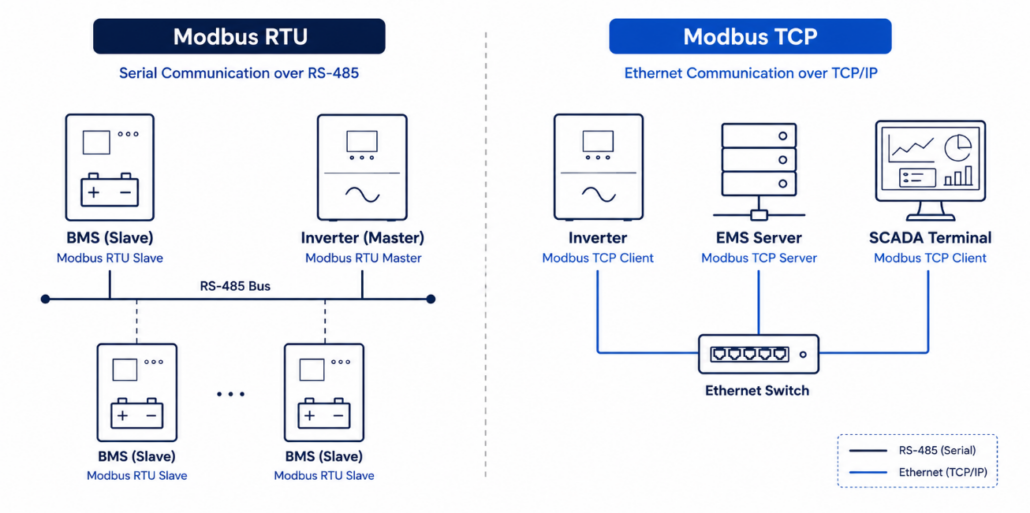

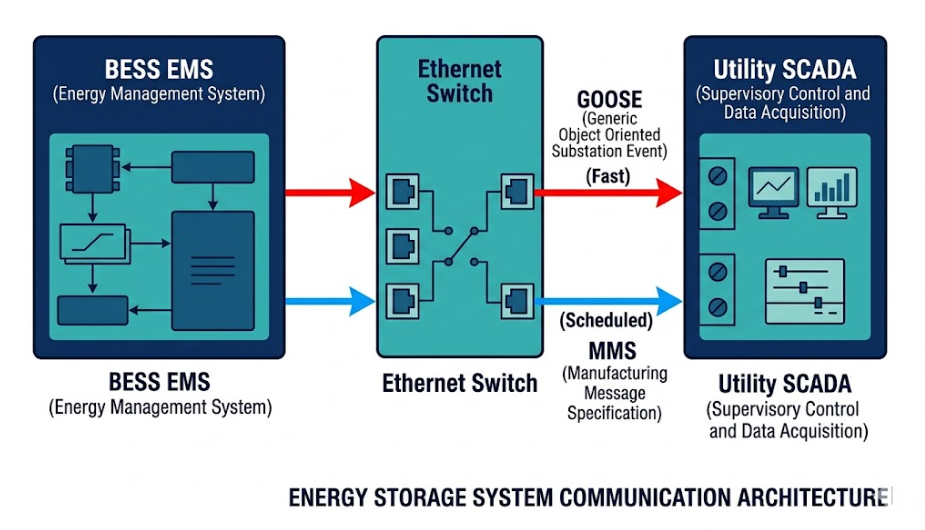

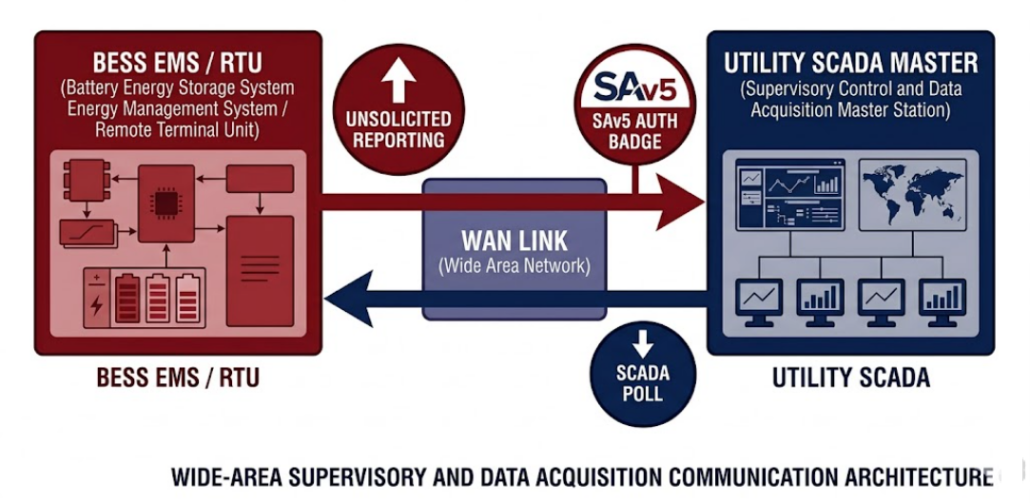

| Comms loss tolerance | High | Low — latency affects stability |

| Design complexity | Moderate | High — full power system design needed |

In short: a grid-connected BESS follows the grid. An Island Grid BESS is the grid.

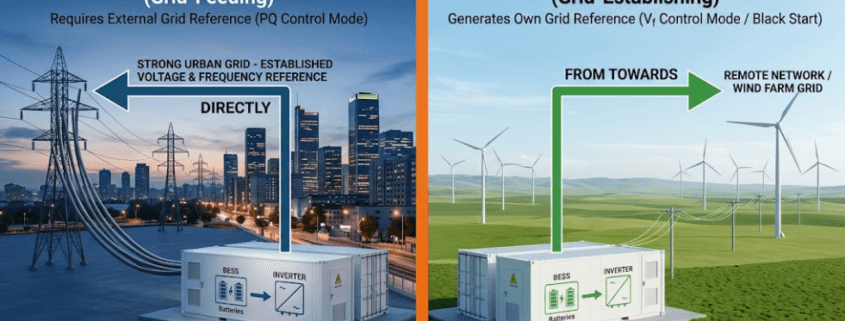

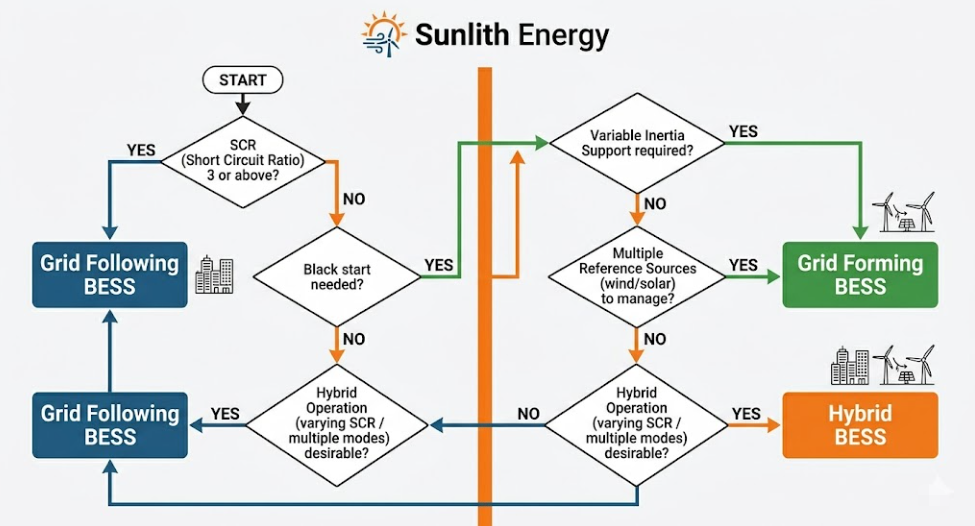

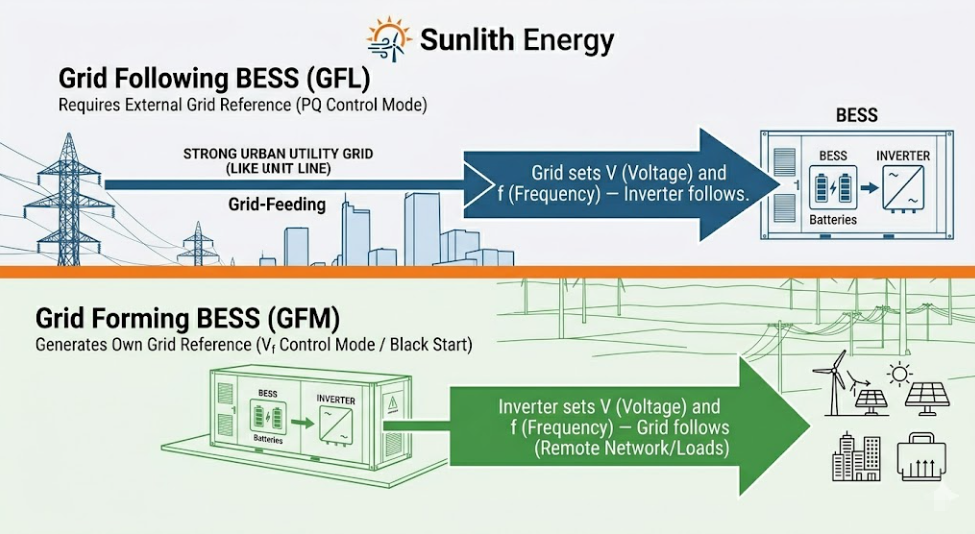

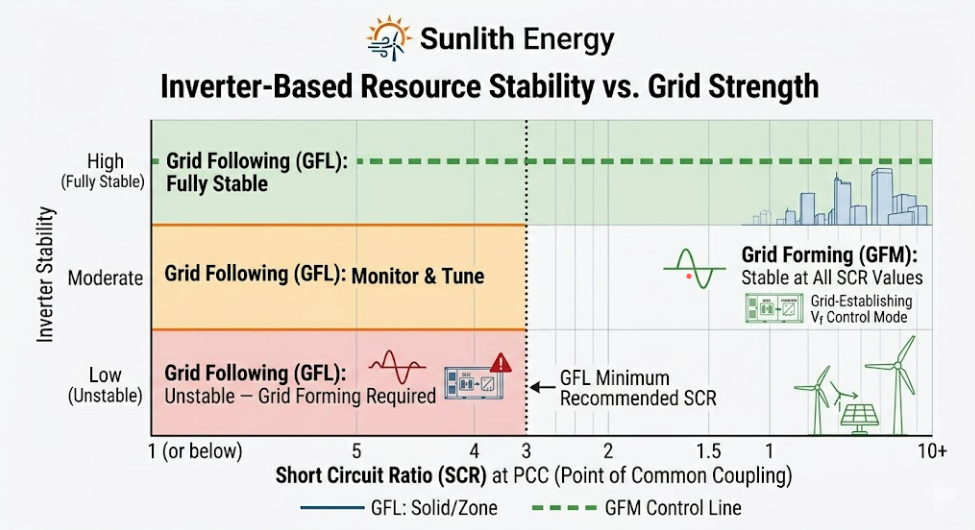

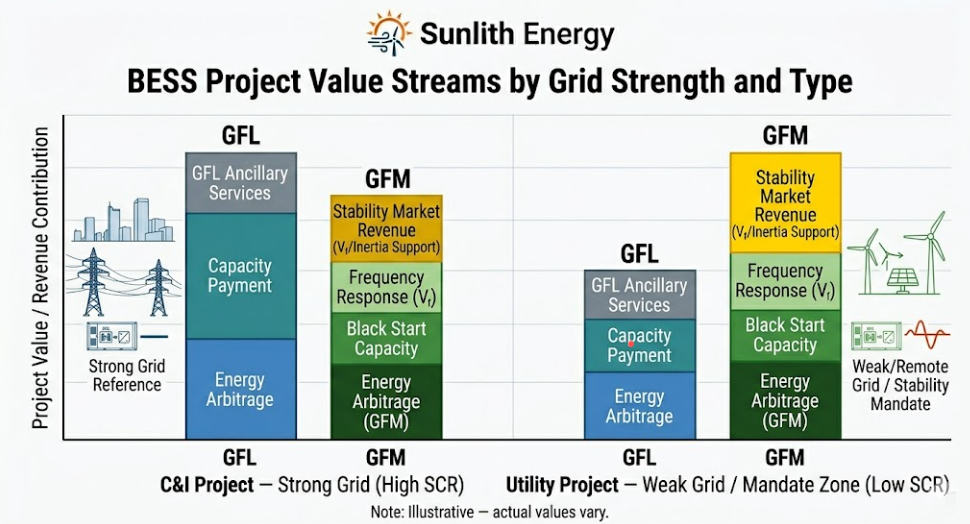

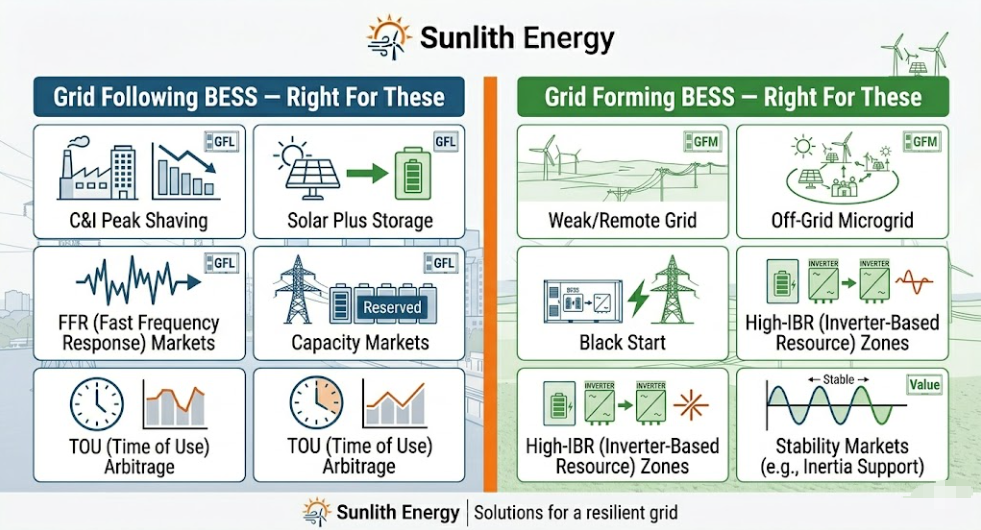

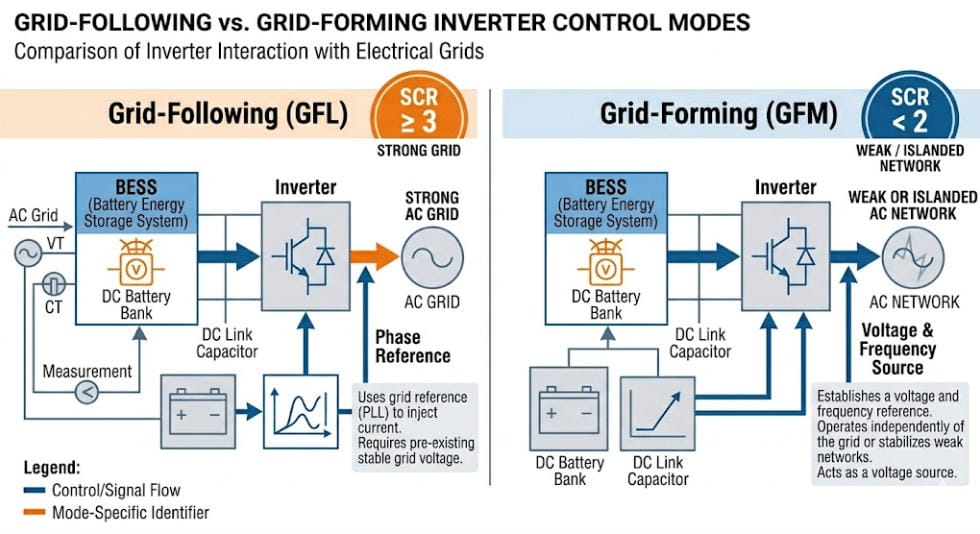

For the full breakdown of inverter control modes, see our guide to grid-forming vs grid-following BESS.

03 — The Four Critical Functions of Island Grid BESS

A well-designed Island Grid BESS must carry out four functions at the same time. These are not extras — they are core requirements.

Function 1 — Voltage and Frequency Formation

The BESS inverter must create a stable AC voltage at the island’s nominal frequency, typically 50 Hz or 60 Hz, with no external signal to copy. This is the grid-forming function. Without it, nothing on the island can run. That is why grid-forming BESS technology is the baseline spec for any Island Grid BESS project.

Function 2 — Real-Time Power Balance

At every moment, generation must equal consumption. When solar output falls due to cloud, the BESS must discharge the difference right away. When a load switches off, the BESS must absorb the surplus. Otherwise, frequency drifts and the grid becomes unstable.

Function 3 — Energy Shifting and Overnight Supply

Beyond second-by-second balancing, the BESS must also store enough energy to carry the island through long periods of zero generation. In a solar-only system, that means overnight. In a wind-heavy setup, it can mean multi-day low-wind periods. This need drives the MWh capacity spec — which is separate from the MW power spec.

Function 4 — Black Start and Grid Restoration

If the island grid goes down — due to a fault, a protection trip, or a battery shutdown — the BESS must restart the entire network with no outside help. This black start capability is a must-have for Island Grid BESS. A standard grid-following inverter cannot do it.

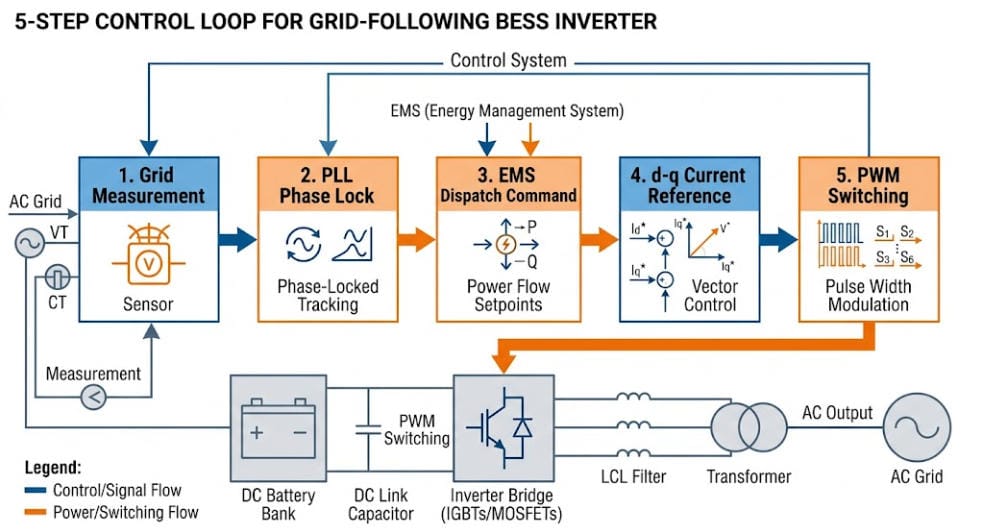

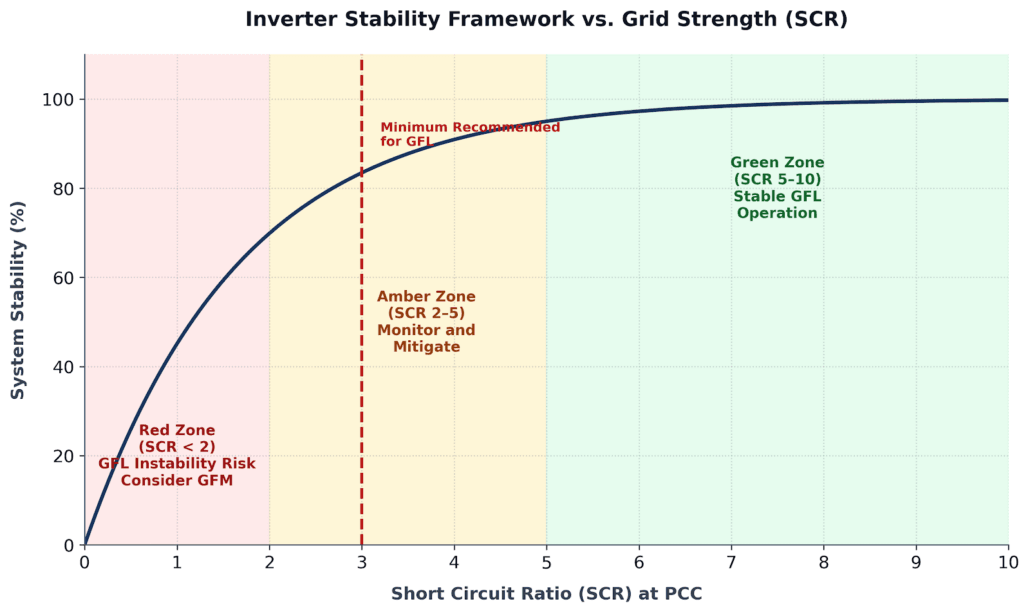

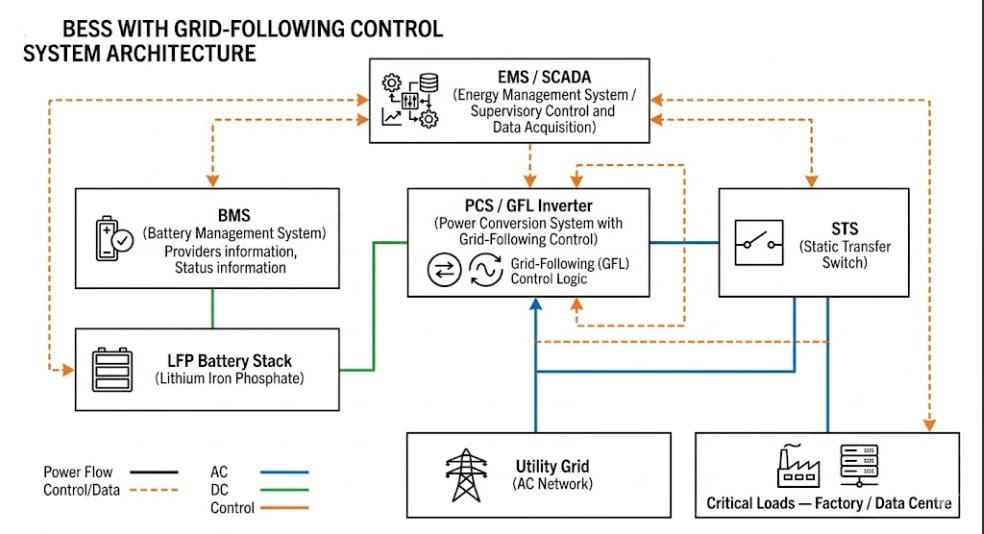

04 — Control Architecture: Why Island Grids Need Grid-Forming BESS

This is the area where most Island Grid BESS projects go wrong. The mistake often shows up late — at commissioning — and it is expensive to fix.

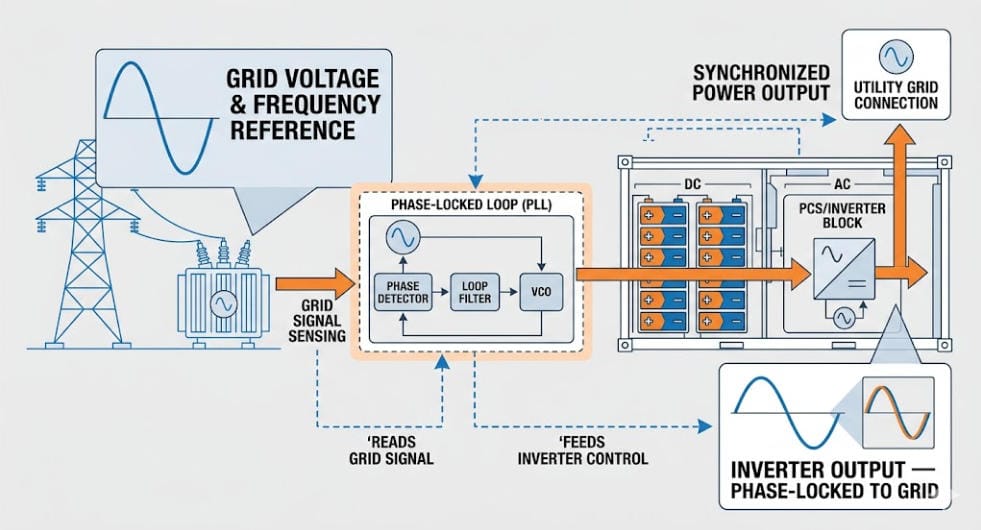

Why Grid-Following Inverters Fail Alone on an Island

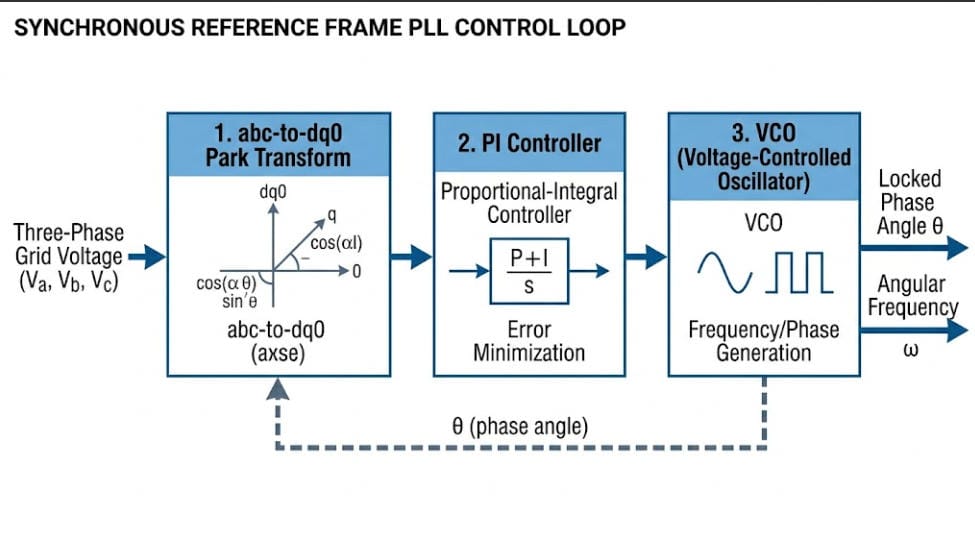

A grid-following BESS uses a Phase-Locked Loop (PLL) to lock onto an existing grid voltage signal. If there is no grid signal — which is always the case at black start — the PLL has nothing to lock to. As a result, the inverter shuts down.

For a grid-connected project, this is fine. The utility is always there as a backup. For an Island Grid BESS, however, there is no utility. The battery is the only power source. So a grid-following inverter alone is not suitable.

Grid-Forming Control: The Right Architecture for Island BESS

A grid-forming inverter creates its own internal voltage and frequency reference. Everything else on the network — loads, other inverters, generators — then syncs to that reference. Because of this, it can:

- Black-start a fully de-energised island network

- Hold stable frequency with no external signal

- Respond to load steps in milliseconds — far faster than a PLL-based inverter

- Keep running during faults that would trip a grid-following inverter

Three Control Strategies: Which One to Specify?

Choosing the right strategy depends on your island’s size, renewable mix, and load profile. Here is how the three main options compare.

Droop Control is the simplest option. It mimics a generator’s governor — it adjusts power output in line with frequency changes. Droop control works well for smaller islands with stable loads and modest renewable penetration.
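The droop law described above can be sketched in a few lines. This is a minimal illustration, not a vendor algorithm: the function name `droop_power_kw`, the 4% droop setting, and the 50 Hz nominal frequency are all assumptions chosen for the example.

```python
def droop_power_kw(freq_hz, p_set_kw, p_rated_kw, f_nom_hz=50.0, droop=0.04):
    """Active power command from a frequency-droop law.

    droop=0.04 means a 4% frequency deviation shifts the output by the
    full rated power. All default values are illustrative assumptions.
    """
    # Per-unit frequency error: positive when island frequency is low.
    freq_error_pu = (f_nom_hz - freq_hz) / f_nom_hz
    # Low frequency -> discharge more; high frequency -> back off or charge.
    p_cmd_kw = p_set_kw + (freq_error_pu / droop) * p_rated_kw
    # Clamp to the inverter rating (negative values mean charging).
    return max(-p_rated_kw, min(p_rated_kw, p_cmd_kw))


if __name__ == "__main__":
    for f in (50.0, 49.8, 50.2):
        p = droop_power_kw(f, p_set_kw=200.0, p_rated_kw=650.0)
        print(f"{f:.1f} Hz -> {p:.1f} kW")
```

A smaller droop value makes the inverter respond more aggressively to the same frequency deviation, which is one reason the setting has to be tuned against the island's load profile.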

Virtual Synchronous Generator (VSG) goes further. It copies the inertial response of a real synchronous generator. It reacts to both frequency deviation and Rate of Change of Frequency (ROCOF). Because of this, it works best on islands with high renewable penetration, where frequency can shift fast. Moreover, it replicates the behaviour that protection systems were designed around when diesel was the primary source.

Power Synchronisation Control (PSC) is the most advanced option. Instead of using a PLL to track frequency, it synchronises through the active power the inverter exchanges with the network, much as a synchronous machine does. This makes it the most stable choice for very weak or very small island grids, especially where the Short Circuit Ratio (SCR) falls below 1.5.

For most Island Grid BESS projects, VSG mode is the best default. It mimics diesel generator behaviour closely, so commissioning and protection coordination are simpler.
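To make the VSG behaviour concrete, the sketch below integrates the swing equation that a VSG emulates after a sudden load step. It is a simplified single-machine model under stated assumptions: the inertia constant `h_s`, the damping gain `damping_pu` (which also acts as the droop gain here), and the 0.1 per-unit step are hypothetical values, and the function name is illustrative.

```python
def simulate_vsg_load_step(step_pu=0.1, h_s=4.0, damping_pu=25.0,
                           f_nom_hz=50.0, dt_s=0.001, t_end_s=3.0):
    """Euler integration of the swing equation a VSG emulates.

    2*H * d(dw)/dt = -step - D*dw, where dw is the per-unit frequency
    deviation after a load step. H, D and the step size are assumptions.
    Returns a list of (time_s, frequency_hz) samples.
    """
    dw_pu = 0.0
    samples = [(0.0, f_nom_hz)]
    steps = int(round(t_end_s / dt_s))
    for k in range(1, steps + 1):
        # Extra load pulls frequency down; the damping term opposes it.
        accel = (-step_pu - damping_pu * dw_pu) / (2.0 * h_s)
        dw_pu += accel * dt_s
        samples.append((k * dt_s, f_nom_hz * (1.0 + dw_pu)))
    return samples


if __name__ == "__main__":
    trace = simulate_vsg_load_step()
    initial_rocof = (trace[1][1] - trace[0][1]) / trace[1][0]
    print(f"initial ROCOF: {initial_rocof:.3f} Hz/s")
    print(f"settled frequency: {trace[-1][1]:.3f} Hz")
```

In this model the inertia constant sets how fast frequency starts to fall, while the damping gain sets where it settles. A larger `h_s` lowers the initial ROCOF, which is what gives protection relays time to behave as they did under diesel.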

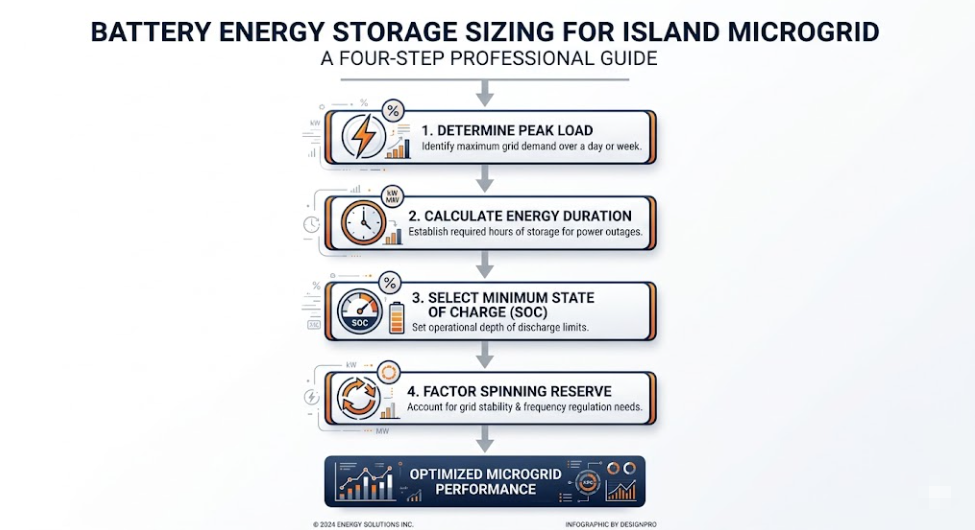

05 — Island Grid BESS Sizing: A Four-Step Method

Sizing an Island Grid BESS involves two dimensions: power capacity (MW or kW) and energy duration (MWh or kWh). Getting either one wrong causes serious operational and financial problems down the line.

Step 1 — Establish Peak Load and Load Profile

First, the BESS must meet peak demand with room to spare. A standard design rule is to size BESS power at 120–130% of peak island load. That extra headroom is your spinning reserve — the buffer that stops frequency from collapsing when demand spikes.

Example: An island with 500 kW peak demand needs a BESS rated at 600–650 kW minimum.

Step 2 — Determine Energy Duration Requirements

Next, consider how long the BESS must run on stored energy alone. For a solar-only island, that is typically 10–14 hours overnight. For a mixed solar-wind island, it can stretch to 48–72 hours during low-generation periods.

Design rule: Size the BESS to carry 100% of average island load through the worst-case zero-generation window. Then add a 20% safety margin on top.

Worked example — solar-only island, 200 kW average load, 12-hour overnight period:

- Base energy: 200 kW × 12 h = 2,400 kWh

- Plus 20% margin: 2,400 × 1.2 = 2,880 kWh usable

- Adjusted for LFP 90% DoD: 2,880 ÷ 0.90 = 3,200 kWh nameplate
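Steps 1 and 2 reduce to two short formulas. The sketch below reproduces the worked figures from the text; the function names are hypothetical, and the default headroom of 1.25 is simply the midpoint of the 120–130% rule.

```python
def bess_power_kw(peak_load_kw, headroom=1.25):
    """Step 1: power rating as a multiple of peak load (rule: 1.20 to 1.30)."""
    return peak_load_kw * headroom


def bess_energy_kwh(avg_load_kw, hours, margin=0.20, dod=0.90):
    """Step 2: nameplate energy for a zero-generation window."""
    base_kwh = avg_load_kw * hours          # energy the load consumes
    usable_kwh = base_kwh * (1.0 + margin)  # add the safety margin
    return usable_kwh / dod                 # convert usable to nameplate


if __name__ == "__main__":
    low = bess_power_kw(500, headroom=1.20)
    high = bess_power_kw(500, headroom=1.30)
    print(f"Power for 500 kW peak: {low:.0f} to {high:.0f} kW")
    print(f"Energy for 200 kW x 12 h: {bess_energy_kwh(200, 12):.0f} kWh nameplate")
```

Keeping margin and depth of discharge as explicit parameters makes it easy to rerun the calculation for a multi-day wind window or a different chemistry.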

Step 3 — Define State of Charge Operating Bands

Unlike a grid-connected BESS, Island Grid BESS has no utility backup if the battery runs low. SoC management must therefore be strict:

- Minimum SoC: 20% — load shedding starts below this point

- Maximum SoC: 95% — renewable generation is curtailed above this level

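- Normal cycling band: 20–95%, which is 75% of nameplate. This is narrower than the 90% DoD used in Step 2, so a system held strictly inside this band needs nameplate energy of usable ÷ 0.75 (2,880 ÷ 0.75 = 3,840 kWh in the worked example) rather than usable ÷ 0.90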

- Emergency reserve: Keep 10% SoC set aside exclusively for black-start restoration
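The bands above map naturally onto a small decision function. This is a sketch only: the action names are hypothetical, and treating the bottom 10% as a floor below which normal discharge stops is an assumption about how the black-start reserve is enforced.

```python
def soc_action(soc_pct, min_soc=20.0, max_soc=95.0, black_start_reserve=10.0):
    """Map state of charge (percent) to an operating action.

    Thresholds follow the bands listed in the text; the action labels
    are illustrative and not taken from any specific EMS product.
    """
    if soc_pct > max_soc:
        return "curtail_renewables"    # battery nearly full
    if soc_pct >= min_soc:
        return "normal_cycling"        # inside the 20-95% band
    if soc_pct > black_start_reserve:
        return "shed_load"             # below minimum: drop non-critical load
    return "hold_for_black_start"      # protect the restoration reserve


if __name__ == "__main__":
    for soc in (97, 60, 15, 8):
        print(soc, soc_action(soc))
```

A real controller would add hysteresis around each threshold so that the system does not chatter between actions when SoC hovers near a boundary.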

Step 4 — Define Spinning Reserve Allocation

Finally, set your spinning reserve. This is the share of BESS capacity that stays ready but does not discharge. It must be large enough to cover the biggest single generation loss on the island without letting frequency fall below relay trip thresholds.

Rule of thumb: Spinning reserve ≥ the rated output of the largest single renewable unit on the island.
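The rule of thumb can be checked at any operating point with a one-line comparison. The sketch below is illustrative; the function name and the example figures (a 650 kW BESS discharging 300 kW, with a 250 kW largest unit) are assumptions.

```python
def spinning_reserve(bess_rated_kw, bess_output_kw, largest_unit_kw):
    """Return (reserve_kw, ok) for the current operating point.

    bess_output_kw is positive when discharging and negative when
    charging, so a charging BESS has more than its rating in reserve.
    """
    reserve_kw = bess_rated_kw - bess_output_kw
    return reserve_kw, reserve_kw >= largest_unit_kw


if __name__ == "__main__":
    reserve, ok = spinning_reserve(650.0, 300.0, 250.0)
    print(f"reserve {reserve:.0f} kW, sufficient: {ok}")
```

This covers power only. The battery also needs enough stored energy above its minimum SoC to sustain that extra output until generation recovers or load is shed.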

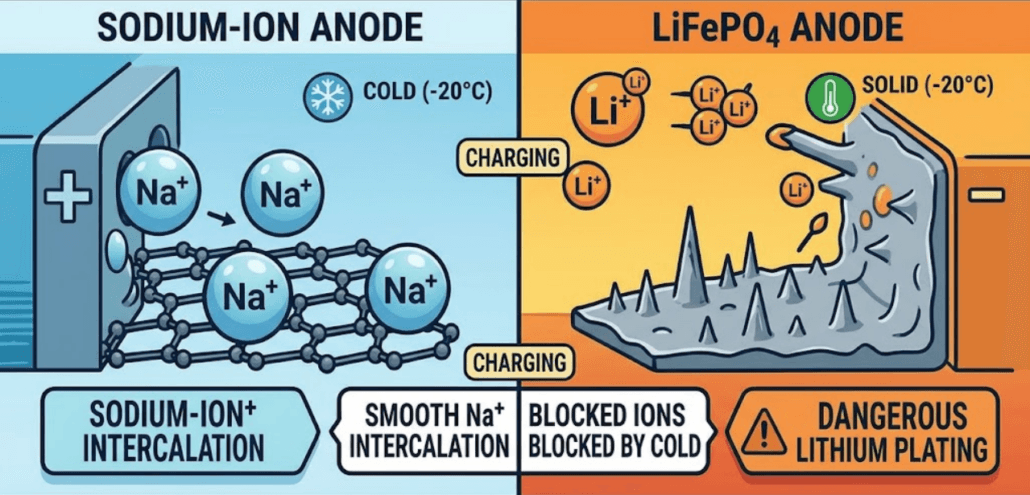

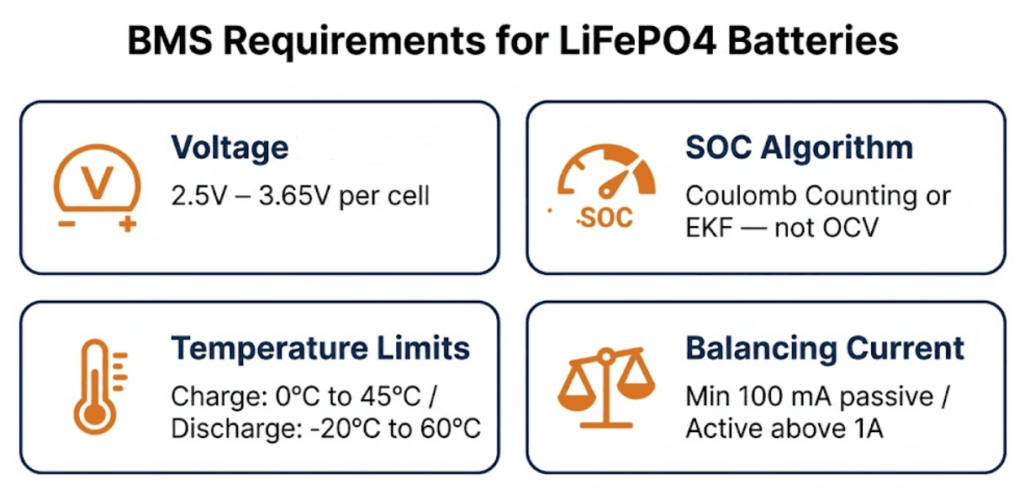

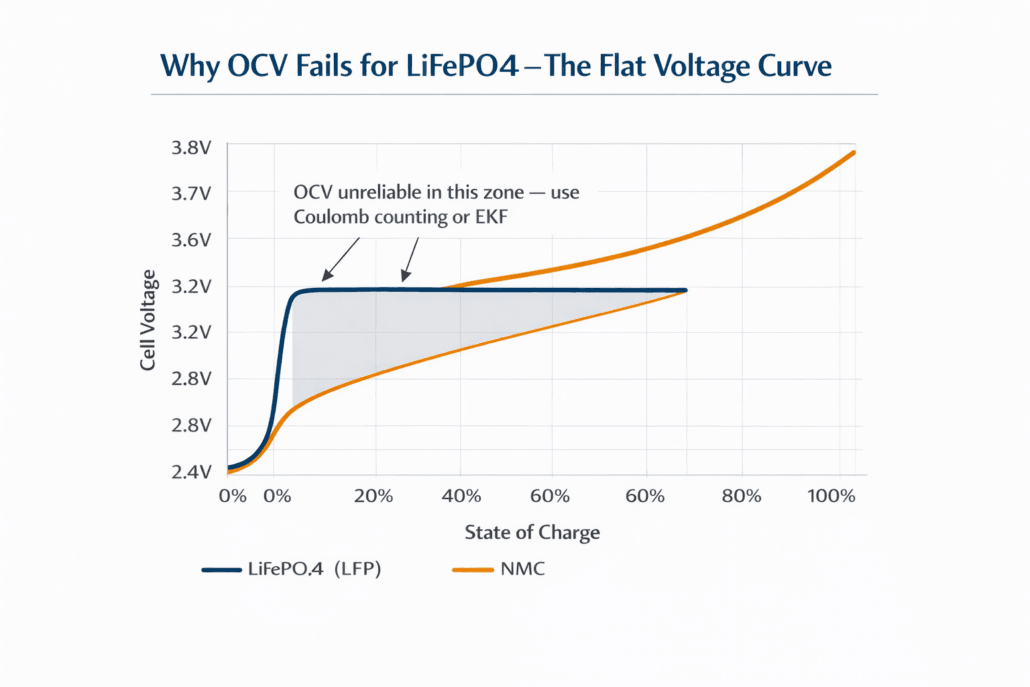

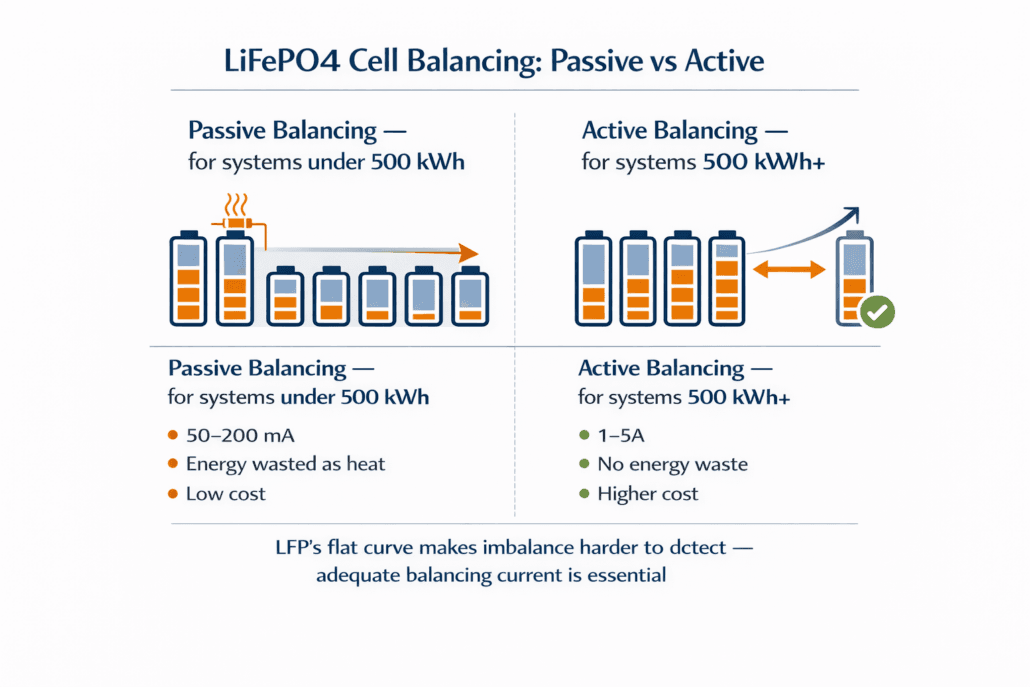

06 — Battery Chemistry: Why LFP Dominates Island Grid BESS in 2026

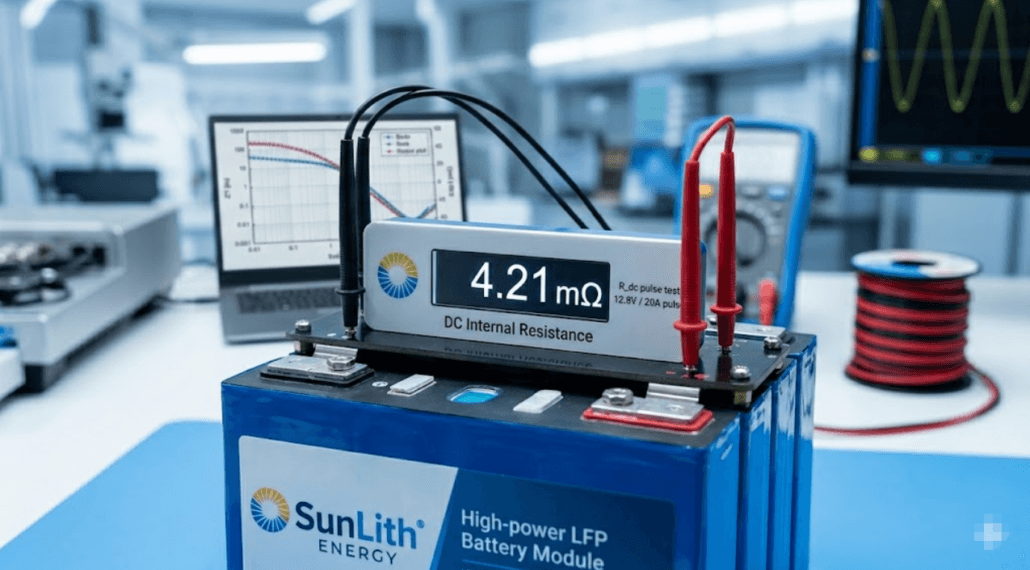

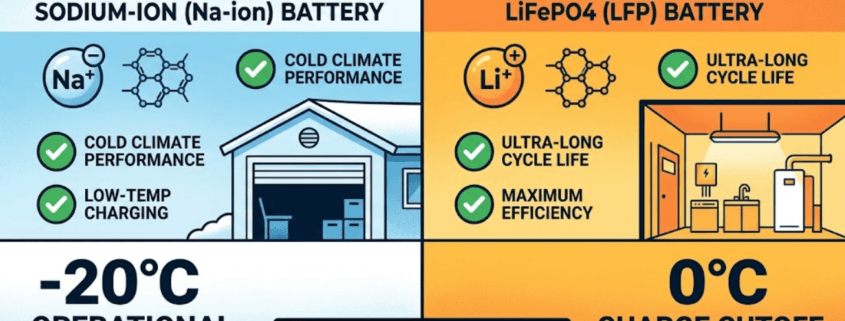

Battery chemistry for Island Grid BESS has largely settled on one answer. As of 2026, Lithium Iron Phosphate (LFP) accounts for about 95% of new island grid BESS procurement globally. That figure comes from BloombergNEF and IEA tracking data. The reasons make sense for island grid conditions specifically.

Why LFP Wins for Island Grid BESS

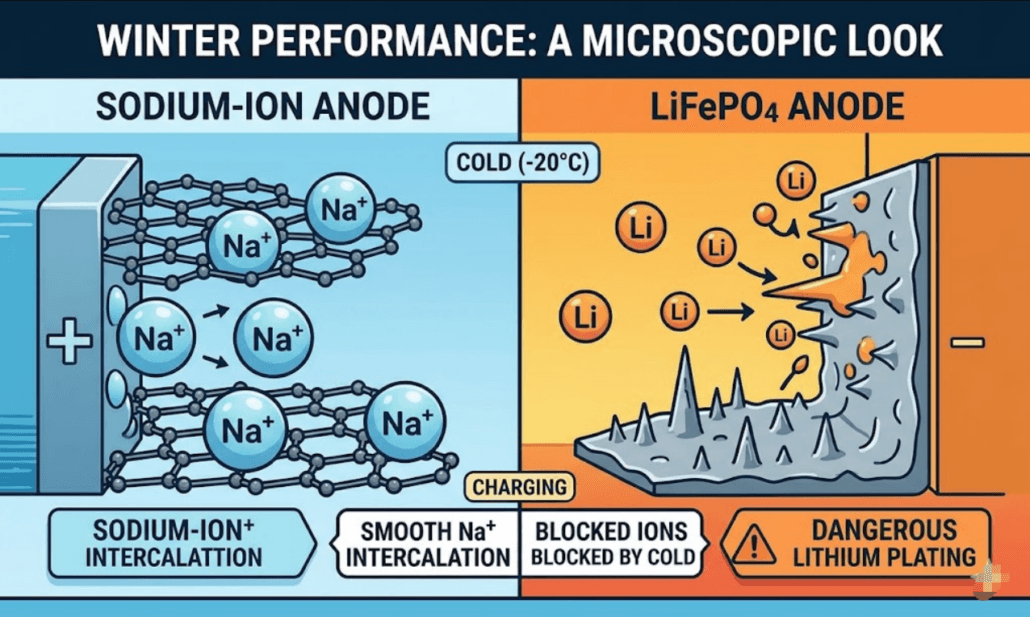

Thermal stability is the top reason. Many island grid sites sit in tropical climates where ambient temperatures exceed 40°C. LFP cells have a thermal runaway threshold of around 270°C. NMC cells, by contrast, run into trouble at 150–180°C. Furthermore, LFP releases far less heat if a cell does fail. In a remote location where fire response is slow, that difference is critical.

Cycle life is the second major factor. Island Grid BESS systems cycle daily, often deeply. LFP cells rated for 4,000–6,000 full cycles at 80% DoD give 10–15 years of service before capacity augmentation is needed. NMC degrades faster under the same conditions.
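The link between rated cycles and service life is simple arithmetic. The sketch below assumes one full equivalent cycle per day and ignores calendar ageing, so it gives an optimistic upper estimate; the function name is hypothetical.

```python
def service_years(rated_cycles, cycles_per_day=1.0):
    """Years until the rated cycle count is used up (calendar ageing ignored)."""
    return rated_cycles / (cycles_per_day * 365.0)


if __name__ == "__main__":
    for cycles in (4000, 6000):
        print(f"{cycles} cycles -> {service_years(cycles):.1f} years")
```

With daily cycling, the 4,000–6,000 cycle range works out to roughly 11 to 16 years, which is in line with the 10–15 year figure once calendar ageing is allowed for.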

Cost per cycle has also shifted in LFP’s favour. LFP manufacturing capacity expanded a great deal between 2022 and 2025. As a result, prices dropped, and the per-cycle economics are now clearly better for high-cycle island grid use.

Simpler thermal management is a practical bonus. LFP is less sensitive to temperature than NMC. Therefore, the HVAC system can be simpler — an advantage on remote islands where air conditioning maintenance is hard to schedule.

The one exception: very space-constrained sites, such as offshore platforms, may justify NMC for its higher energy density per cubic metre. In all other island grid cases, however, LFP is the correct default.

07 — Solar-Plus-BESS Island Grid Architecture

Solar-plus-BESS is the most common Island Grid BESS setup. It also has the longest track record in the field. Solar PV replaces diesel as the primary energy source. The BESS then provides grid stability and overnight energy supply.

AC-Coupled vs DC-Coupled: Which Is Right for Your Project?

DC-coupled architecture links the solar array directly to the BESS DC bus via a charge controller. The solar array and battery share the same inverter. This approach captures energy before conversion losses. It also uses solar power that would otherwise be clipped and wasted. As a result, DC-coupled systems typically cut installed cost by 5–8% and improve overall round-trip efficiency.

AC-coupled architecture connects the solar inverter to the island AC bus. The BESS connects to the same bus through a separate inverter. This setup is more flexible. Existing diesel generators integrate more easily because they simply plug into the same AC bus. For this reason, AC-coupled is usually the better choice for retrofit projects.

In summary: use DC-coupled for greenfield Island Grid BESS projects with high solar penetration. Use AC-coupled when you are transitioning away from diesel and need to keep the generators running during the process.

Renewable Penetration Targets by Project Stage

| Renewable Penetration | BESS Configuration | Diesel Role |
|---|---|---|
| Up to 50% | BESS supports frequency; diesel is primary | Diesel runs continuously |
| 50–80% | BESS is primary; diesel backs up | Diesel starts on demand |
| 80–100% | BESS is sole grid-forming source | Diesel on emergency standby |
| 100% + storage | Full diesel replacement | Diesel removed or cold standby |

At 80–100% renewable penetration, grid-forming BESS technology becomes operationally essential. At that point, the diesel generator can no longer serve as the frequency reference.

08 — Wind-Plus-BESS Island Grid Architecture

Wind-plus-BESS island grids work differently from solar setups. In many island locations, they also perform better. Wind is not limited to daylight hours. Moreover, many islands have steady trade winds that deliver higher annual capacity factors than solar PV.

Three Unique Challenges of Wind-Plus-BESS Island Grids

Rapid generation variability is the first challenge. Wind output can shift a great deal within seconds due to gusts or direction changes. Consequently, the BESS must respond faster to wind variability than it typically does to solar variability. Solar output changes more gradually, except during sudden cloud shadow events.

Frequency interaction with wind turbines is the second challenge. Modern variable-speed wind turbines use power electronics interfaces. This makes them inverter-based resources (IBR) — not rotating machines with physical inertia. Therefore, when every generation source on the island is IBR, the Island Grid BESS must provide all synthetic inertia on its own. That is a harder job than in systems where some diesel generation is still running.

Extended low-wind periods are the third challenge. Unlike solar droughts, which reset each morning, wind droughts can run for multiple days. As a result, energy duration sizing for wind-plus-BESS island grids must account for multi-day low-generation periods. This pushes BESS capacity much higher than in equivalent solar designs.

For more on how inverter-based resources interact with Island Grid BESS, see our guide on grid-forming BESS technology and the grid-forming vs grid-following BESS comparison.

09 — Diesel Hybrid Island Grids: The Three-Phase Transition Path

Most Island Grid BESS projects in 2026 are not greenfield builds. Rather, they are retrofits of existing diesel-dependent island grids. Understanding the three phases of transition is therefore essential for developers and asset owners.

Phase 1 — Diesel-Dominant with BESS Support (0–40% Renewable)

In this first phase, diesel generators still provide the voltage and frequency reference. The BESS operates in grid-following mode. It handles peak shaving, frequency regulation, and spinning reserve. As a result, diesel runtime drops, fuel costs fall, and maintenance intervals lengthen. This phase only needs a grid-following BESS. It is also the simplest and cheapest entry point.

Typical outcomes: 20–35% diesel fuel reduction; 30–40% fewer generator starts.

Phase 2 — Diesel-Backup with BESS Primary (40–80% Renewable)

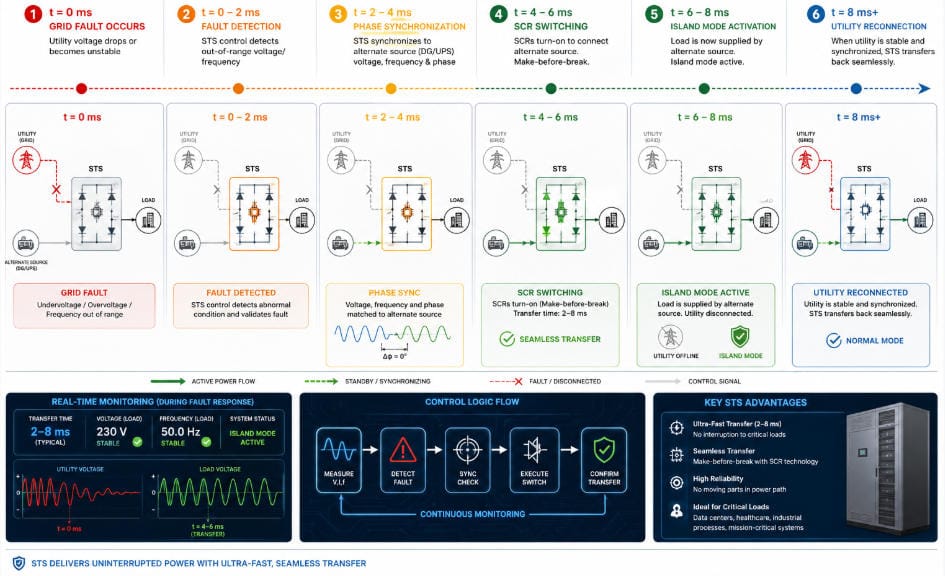

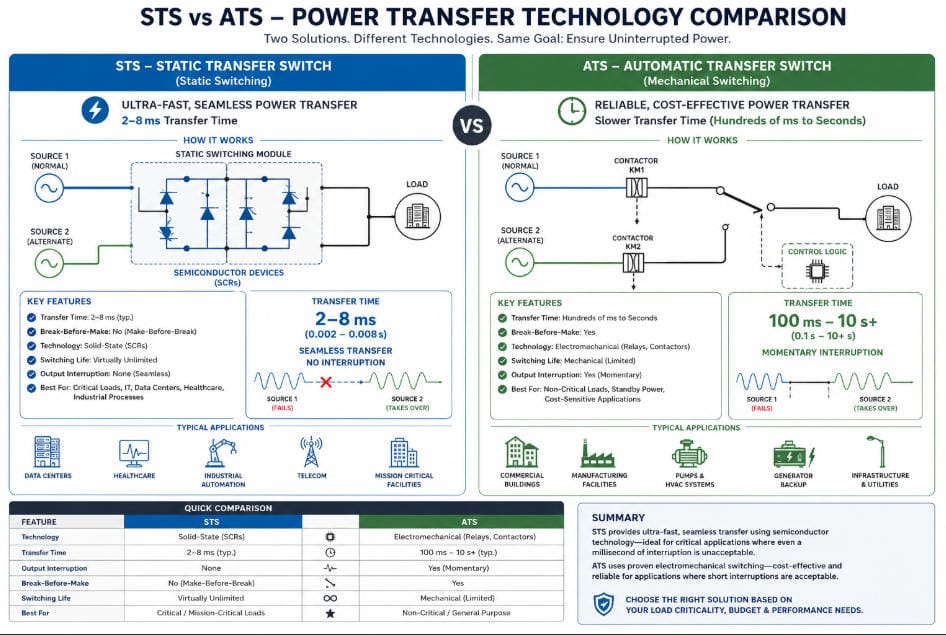

In this second phase, solar or wind capacity grows. The BESS then takes over as the main generation source for larger parts of each day. Diesel generators shift from continuous running to demand-start mode. At this stage, the BESS inverter must also be able to switch into grid-forming mode whenever the diesel is offline. This requires a grid-forming capable inverter. A static transfer switch can manage the changeover, but it cannot make a grid-following inverter form the grid.

Typical outcomes: 50–70% diesel fuel reduction; diesel-on to diesel-off transitions in under 10 seconds.

Phase 3 — Full Diesel Replacement (80–100% Renewable)

In this third and final phase, diesel generators move to emergency-only standby or are removed. The Island Grid BESS runs continuously as the sole grid-forming source. Before commercial operation, the system needs full grid-forming BESS specification and comprehensive black start testing.

Typical outcomes: 85–95% diesel fuel reduction; full energy independence with diesel as last-resort backup only.

10 — Real-World Island Grid BESS Case Studies

Case Study 1 — El Hierro, Canary Islands (Spain)

El Hierro has run a wind-hydro-BESS hybrid island grid since 2014. Since then, it has steadily raised renewable penetration to above 90% for extended periods. The BESS absorbs wind variability and manages the link between turbines and pumped hydro storage. Peak demand on the island is about 7 MW. In short, El Hierro shows that 100% renewable island grids are viable at community scale.

Key results: Over 90% renewable penetration sustained over multiple consecutive days; diesel fuel use cut by more than 60%.

External reference: El Hierro Gorona del Viento — IRENA Case Study

Case Study 2 — Flinders Island, Australia

Flinders Island in Tasmania installed a solar-plus-BESS system that has cut diesel dependency sharply. The Island Grid BESS runs in grid-forming mode. Diesel generators have moved to demand-start backup. The Hydro Tasmania-operated grid shows that grid-forming BESS can serve as the primary voltage and frequency source for a real remote community.

Key results: Diesel use down roughly 55%; Island Grid BESS availability above 99.5% since commissioning.

External reference: ARENA Australia — Grid-Forming Battery Revolution

Case Study 3 — Hospital Microgrid, Lombok (Indonesia)

Research published in Energy and Buildings (2025) modelled a PV-BESS microgrid for a hospital on Lombok Island. The study tested a 3-day outage scenario. A correctly sized Island Grid BESS — supplying 7 MWh per day of critical load — maintained 100% hospital reliability with no diesel. The findings highlight the life-critical value of Island Grid BESS beyond day-to-day economics.

Case Study 4 — Mining Operation, Western Australia

A remote mining site replaced three diesel gensets with a solar Island Grid BESS. The system uses VSG grid-forming control. Droop settings were calibrated to match the frequency response that the mining equipment’s protection relays were designed around. In year one, diesel use fell by 78%. By year two, after a solar expansion, diesel was phased out entirely.

11 — Island Grid BESS Sizing Reference Table

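Use the table below as a starting point for project scoping. All figures assume LFP chemistry, 90% depth of discharge, BESS power at 130% of peak load (the upper end of the Step 1 rule), and a solar-plus-BESS setup with 12-hour overnight supply duration. The energy column corresponds to an average load of about 50% of peak, with the 20% safety margin from Step 2 applied.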

| Island Peak Load | Min BESS Power | Min BESS Energy | Typical Solar PV | Target Renewable % |
|---|---|---|---|---|
| 50 kW | 65 kW | 400 kWh | 80 kWp | 80% |
| 100 kW | 130 kW | 800 kWh | 150 kWp | 80% |
| 250 kW | 325 kW | 2,000 kWh | 380 kWp | 80% |
| 500 kW | 650 kW | 4,000 kWh | 750 kWp | 80% |
| 1 MW | 1.3 MW | 8 MWh | 1.5 MWp | 80% |
| 5 MW | 6.5 MW | 40 MWh | 7.5 MWp | 80% |
| 10 MW | 13 MW | 80 MWh | 15 MWp | 80% |

These are indicative scoping figures only. Final sizing must be based on measured load profiles, site-specific resource data, and full power systems modelling. Contact SunLith Energy for a project-specific Island Grid BESS analysis.

12 — Financial Case: Island Grid BESS vs Diesel Over 25 Years

The financial case for Island Grid BESS has shifted a great deal since 2022. LFP battery costs have fallen to $90–130/kWh installed in competitive markets. Meanwhile, diesel delivery costs to remote islands have risen — when you include logistics, shipping, and storage. Together, these trends make Island Grid BESS the economically dominant choice in almost every isolated grid context.

The Diesel Costs That Most Analyses Miss

Simple comparisons often undercount the true cost of diesel on island grids. A full cost assessment must include all of the following:

- Fuel logistics: Diesel price plus shipping, handling, and on-island storage

- Generator replacement: Diesel gensets need full replacement every 15,000–25,000 running hours

- Maintenance and travel: Regular servicing requires technicians to travel by air or sea to remote sites

- Environmental liability: Diesel storage creates spill risk, especially in ecologically sensitive island areas

- Carbon costs: Where carbon pricing applies, diesel grids face costs that grow each year

Why Island Grid BESS Wins on Lifetime Cost

Island Grid BESS offers several clear cost advantages over diesel. First, there is no ongoing fuel cost — solar and wind energy have zero marginal cost. Second, LFP BESS have no moving parts, so maintenance is far cheaper than for diesel generators. Third, modern LFP BESS are built for 20–25-year project life. Battery capacity augmentation at year 10–12 is the main lifecycle cost event. Finally, for islands weighing a submarine cable connection against Island Grid BESS, the battery solution is typically cheaper at scales below 10 MW peak demand.

Indicative 25-Year Cost Comparison: 500 kW Island Grid

| Cost Item | Diesel Island Grid | Solar + Island Grid BESS |
|---|---|---|
| Fuel cost per year (Year 1) | $350,000–500,000 | $0 |
| Annual maintenance | $80,000–120,000 | $15,000–25,000 |
| Capital replacement at Year 10 | $400,000–600,000 (gensets) | $150,000–250,000 (augmentation) |
| Carbon cost exposure | High and rising | None |
| 25-year NPV advantage | Baseline | $3–6 million in BESS’s favour |

These figures are indicative, based on 2026 market pricing. Site-specific financial modelling is required before any investment decision.
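A discounted comparison of the operating rows in the table can be sketched as follows. This is not the model behind the table's NPV line: it uses the low end of each range, assumes a flat fuel price and an 8% discount rate (both assumptions, not from the source), and leaves out the up-front solar and BESS capital cost and any carbon cost. The function names are hypothetical.

```python
def present_value(cashflows, rate):
    """Discount a list of yearly costs (year 1 first) back to today."""
    return sum(cf / (1.0 + rate) ** year
               for year, cf in enumerate(cashflows, start=1))


def lifetime_opex(fuel, maintenance, replacement, replacement_year=10, years=25):
    """Yearly operating costs plus a one-off capital event in one year."""
    flows = [fuel + maintenance] * years
    flows[replacement_year - 1] += replacement
    return flows


if __name__ == "__main__":
    rate = 0.08  # assumed discount rate, not from the source
    diesel = lifetime_opex(fuel=350_000, maintenance=80_000, replacement=400_000)
    bess = lifetime_opex(fuel=0, maintenance=15_000, replacement=150_000)
    saving = present_value(diesel, rate) - present_value(bess, rate)
    print(f"PV of avoided operating cost: ${saving:,.0f}")
```

Subtracting the installed cost of the solar array and BESS from this figure gives a first-pass net advantage. A site-specific model would also escalate fuel prices and include generator overhauls by running hours.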

13 — Key Technical Challenges and Practical Solutions

Challenge 1 — Protection Coordination

Standard relay settings are built around the fault current that synchronous generators produce. Island Grid BESS inverters, however, typically produce lower fault currents — around 1.0–1.2 per-unit versus 5–10 per-unit for a generator. As a result, relay settings must be reconfigured to match the BESS fault current range.

Solution: Run a full protection coordination study before specifying relay settings. Some grid-forming BESS inverters now offer fault current up to 1.5–2.0 per-unit. That helps improve protection discrimination and simplifies the relay setup.
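To see what those per-unit figures mean for relay settings, it helps to convert them to amperes. The sketch below assumes a three-phase 400 V system and a 650 kVA rating; both values and the function names are illustrative assumptions.

```python
import math


def base_current_a(s_rated_kva, v_line_v):
    """Rated (1.0 per-unit) line current of a three-phase source."""
    return s_rated_kva * 1000.0 / (math.sqrt(3) * v_line_v)


def fault_current_a(s_rated_kva, v_line_v, fault_pu):
    """Fault current in amperes for a given per-unit capability."""
    return fault_pu * base_current_a(s_rated_kva, v_line_v)


if __name__ == "__main__":
    for label, pu in (("BESS inverter", 1.2), ("enhanced inverter", 2.0),
                      ("synchronous generator", 5.0)):
        amps = fault_current_a(650.0, 400.0, pu)
        print(f"{label}: {pu} pu = {amps:.0f} A")
```

An overcurrent pickup that was set for generator-level fault current may never operate on the inverter figure, which is why the coordination study has to come before the relay settings.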

Challenge 2 — Large Load Steps on Small Island Grids

On a small Island Grid BESS under 500 kW, a single large motor — a pump, an air conditioner, a welding set — can represent a large share of total load. Each start is a sudden demand that the BESS must absorb without letting frequency collapse.

Solution: Specify VSG mode with tight droop settings and a low-pass filter on the load measurement. For large motors, add soft starters or variable frequency drives. These reduce inrush current sharply and make each load step manageable.

Challenge 3 — Battery Degradation in Hot Climates

Island Grid BESS sites in tropical areas face high ambient temperatures. Without good thermal management, LFP cell ageing speeds up significantly.

Solution: Use active thermal management to keep cells between 20 and 30°C. Do not rely on passive cooling alone in any tropical installation. Size the HVAC system for the worst-case ambient temperature, not the annual average.

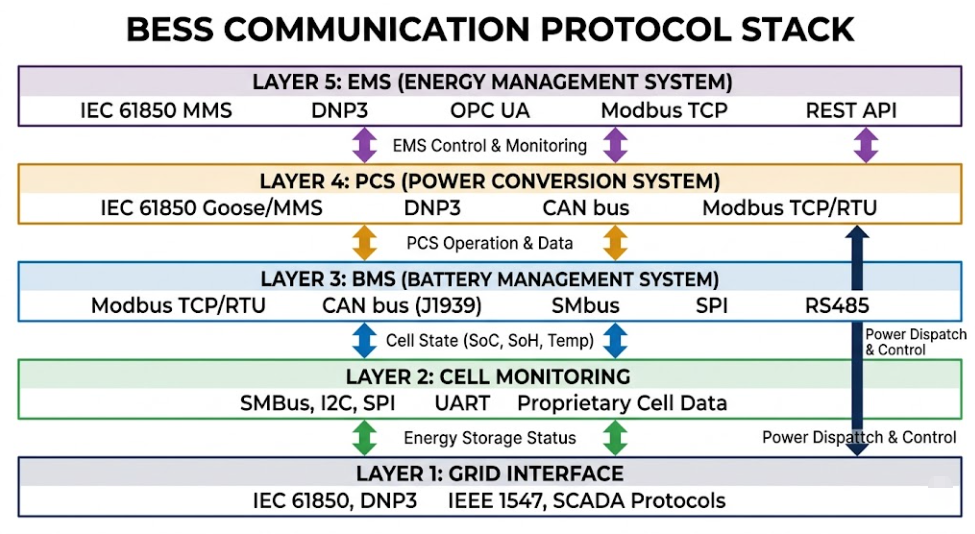

Challenge 4 — Energy Management System Latency

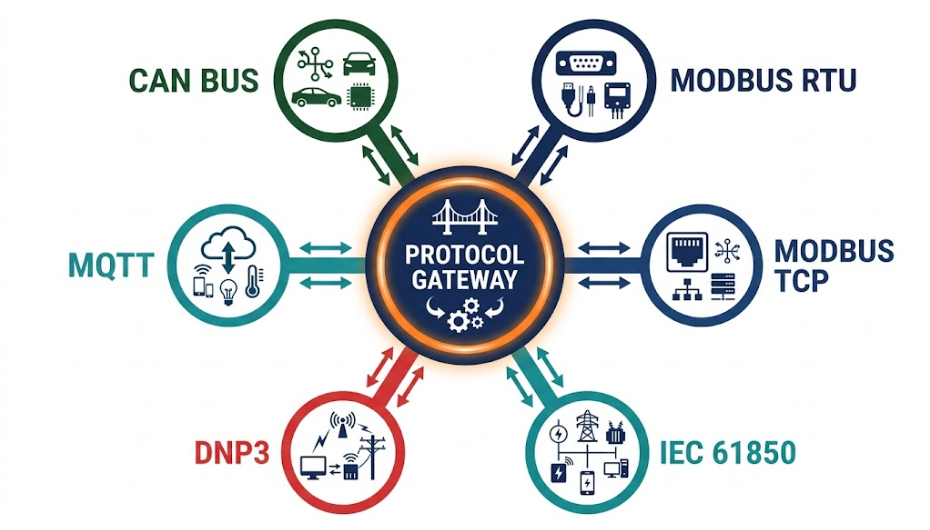

On an island grid, the delay between a measured grid event and the BESS response directly affects frequency stability. Grid-connected BESS systems can tolerate 500–1,000 ms EMS response times. Island Grid BESS, however, needs inverter-level response within 20–50 ms. The EMS should only handle the slower strategic scheduling.

Solution: Specify inverter-integrated droop and VSG control that runs autonomously at the hardware level. The EMS then updates set-points on a scheduling cycle measured in minutes — not milliseconds.
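A rough estimate shows why the response delay matters so much. The sketch below assumes frequency falls at a constant rate until the response arrives, using the standard relation ROCOF = ΔP · f0 / (2 · H · S). The equivalent inertia constant of 2 s, the 100 kW step, and the 650 kVA base are hypothetical values, and the function name is illustrative.

```python
def frequency_drop_hz(load_step_kw, system_base_kva, h_s, delay_s, f_nom_hz=50.0):
    """Frequency lost before any response, assuming a constant ROCOF.

    h_s is an assumed equivalent (real or synthetic) inertia constant.
    """
    step_pu = load_step_kw / system_base_kva
    rocof_hz_per_s = step_pu * f_nom_hz / (2.0 * h_s)
    return rocof_hz_per_s * delay_s


if __name__ == "__main__":
    for delay in (0.05, 0.5, 1.0):
        drop = frequency_drop_hz(100.0, 650.0, h_s=2.0, delay_s=delay)
        print(f"{delay * 1000:.0f} ms delay -> {drop:.2f} Hz drop")
```

Under these assumptions, a delay of tens of milliseconds costs about a tenth of a hertz, while a delay of a full second costs close to two hertz. That gap is the reason the fast loop belongs in the inverter and only scheduling belongs in the EMS.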

14 — Frequently Asked Questions

What is Island Grid BESS and how does it differ from standard BESS?

Island Grid BESS must act as the sole voltage and frequency reference on an isolated network. There is no utility grid as backup. This requires grid-forming inverter control, black start capability, and continuous power balance management. In contrast, a standard grid-connected BESS needs none of these. The engineering scope is therefore much broader. For the full inverter control comparison, see our guide on grid-forming vs grid-following BESS.

Can a grid-following BESS be used on an island grid?

Not as the sole power source. A grid-following inverter needs an existing voltage reference to operate. On an island grid with no diesel generator running, that reference does not exist. However, a grid-following BESS can participate in an island grid if a diesel generator or grid-forming BESS is already providing the reference voltage. For the full technical details, see our guide to grid-following BESS.

How many hours of storage does an Island Grid BESS need?

The minimum is typically 4 hours for a solar-heavy island with a strong, consistent solar resource. However, 8–16 hours is more common for reliable overnight supply. Furthermore, systems in high-latitude or wind-heavy locations may need 24–72 hours to cover extended low-generation periods. Sizing must always be based on site-specific load profiles and measured generation data.

What battery chemistry is best for Island Grid BESS?

LFP (Lithium Iron Phosphate) is the right choice for almost all Island Grid BESS projects in 2026. Its thermal stability, 4,000–6,000 cycle life at 80% DoD, and safety profile make it clearly better than NMC for remote island sites where fire response and maintenance access are limited.

How does Island Grid BESS handle a complete power failure?

Through black start. A correctly specified grid-forming Island Grid BESS can energise the island AC network from a fully dead state using stored battery energy alone. The inverter creates a stable AC voltage and then reconnects loads in a controlled sequence — starting with critical loads first. Diesel generators, if retained, can then sync to the re-established BESS reference.

Can renewable energy cover 100% of an island’s power needs with Island Grid BESS?

Yes — and real-world projects already prove it. Island grids are operating at 90–100% renewable penetration today. However, the remaining challenge is cost. Storing enough energy to cover extended zero-generation periods requires a large BESS. For most islands, 80–90% renewable penetration is the economically optimal starting point. Full diesel elimination follows as storage costs continue to fall.

What does an Island Grid BESS project typically cost?

Turnkey 4-hour LFP Island Grid BESS systems are priced at about $180–260/kWh installed in European and Pacific markets in 2026. Applying that per-kWh range to a 500 kW / 4,000 kWh system gives a BESS capital cost of $720,000–$1,040,000, before solar, civil works, and EMS. In high diesel-cost island markets, payback typically falls within 5–8 years.

15 — Related Articles on SunLith Energy

The following SunLith Energy guides provide the deeper technical detail that supports Island Grid BESS design and procurement:

- Grid-Forming vs Grid-Following BESS: What Is the Difference? — The essential inverter control comparison for any island grid project.

- Grid-Forming BESS Technology: Complete Guide — Full technical deep dive on VSG, droop, and PSC control for island grid applications.

- Grid-Following BESS: Comprehensive Technical Guide — The definitive reference for grid-following architecture and its island grid limitations.

- C&I BESS with Renewable Energy: Solar Self-Consumption and Beyond — Relevant for C&I island grid operators optimising behind-the-meter renewable integration.

- BESS Peak Shaving and Demand Charge Reduction — Demand management strategies applicable to island grids with commercial and industrial loads.

External References

- IRENA — Renewable Energy for Islands — The leading global body on island renewable energy transition and Island Grid BESS deployment.

- ARENA Australia — Grid-Forming Battery Revolution — Australia’s experience with grid-forming BESS at utility and island grid scale.

- NREL — Grid-Scale Battery Storage: FAQs — Foundational reference for BESS sizing methodology applicable to island grid projects.

- Nature Scientific Reports — Optimal Sizing of BESS in Islanded Microgrids (2025) — Peer-reviewed research on frequency droop sizing for Island Grid BESS.

- IEA — Batteries and Secure Energy Transitions — Global battery storage market data and outlook for 2025–2030.

SunLith Energy provides technical guidance, project development support, and commercial BESS solutions for island grid, microgrid, and utility-scale energy storage projects. Contact our engineering team for project-specific Island Grid BESS sizing and design support.