Island Grid BESS: Full Engineering Guide 2026 (Design & Sizing)

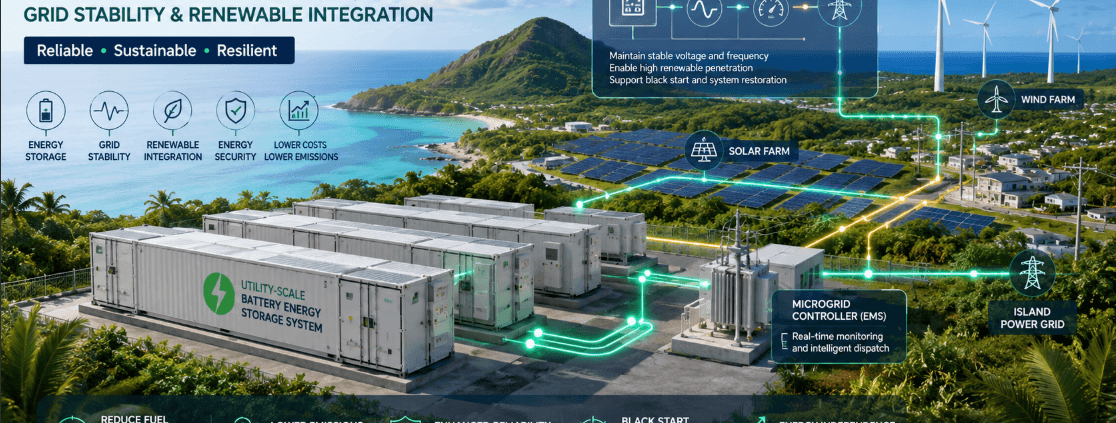

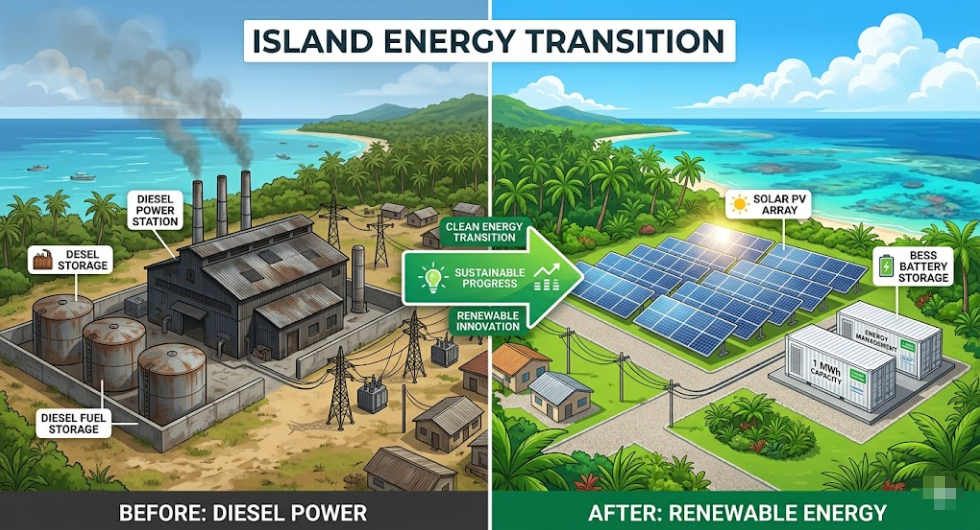

Island Grid BESS addresses one of the most overlooked power problems in the world. More than 10,000 inhabited islands still run on diesel generators. Add remote mining camps, offshore platforms, and rural areas with no grid access, and the scale of the challenge becomes clear.

All of these locations share the same problem. They need a stable, reliable grid, but they have no utility to rely on. For decades, diesel was the only answer. Today, in 2026, Island Grid BESS is replacing diesel as the backbone technology. It responds faster, runs more reliably, and costs less over its lifetime.

This guide covers everything you need. It explains how Island Grid BESS works and how it differs from standard storage. It also shows you how to size a system, which control architecture to pick, and how to build a strong financial case.

📌 QUICK DEFINITION

What is Island Grid BESS?

Island Grid BESS is a Battery Energy Storage System that acts as the main voltage and frequency source on an isolated network. It has no connection to a utility grid. Unlike a grid-connected BESS that follows an existing grid signal, an Island Grid BESS creates the grid itself. It keeps power stable for all loads using stored energy, renewables, or both.

Table of Contents

- 01 — Why Island Grids Are a Different Engineering Problem

- 02 — Island Grid BESS vs Grid-Connected BESS: Core Differences

- 03 — The Four Critical Functions of Island Grid BESS

- 04 — Control Architecture: Why Island Grids Need Grid-Forming BESS

- 05 — Island Grid BESS Sizing: A Four-Step Method

- 06 — Battery Chemistry: Why LFP Dominates Island Grid BESS in 2026

- 07 — Solar-Plus-BESS Island Grid Architecture

- 08 — Wind-Plus-BESS Island Grid Architecture

- 09 — Diesel Hybrid Island Grids: The Three-Phase Transition Path

- 10 — Real-World Island Grid BESS Case Studies

- 11 — Island Grid BESS Sizing Reference Table

- 12 — Financial Case: Island Grid BESS vs Diesel Over 25 Years

- 13 — Key Technical Challenges and Practical Solutions

- 14 — Frequently Asked Questions

- What is Island Grid BESS and how does it differ from standard BESS?

- Can a grid-following BESS be used on an island grid?

- How many hours of storage does an Island Grid BESS need?

- What battery chemistry is best for Island Grid BESS?

- How does Island Grid BESS handle a complete power failure?

- Can renewable energy cover 100% of an island's power needs with Island Grid BESS?

- What does an Island Grid BESS project typically cost?

- 15 — Related Articles on SunLith Energy

- External References

01 — Why Island Grids Are a Different Engineering Problem

A standard grid-connected BESS has a utility grid behind it as backup. If renewable generation drops or demand spikes, the utility absorbs the imbalance. Frequency and voltage stay stable because thousands of generators share the load.

Island grids, however, have none of that.

No Backup, No Room for Error

On an island grid, every watt consumed must be generated or discharged locally. There is no utility to fill the gap. When a cloud shadow crosses a solar array, the BESS must respond in milliseconds. When a pump starts, the island grid must match that load instantly.

This is why Island Grid BESS is a different engineering discipline. The physics are harder. The control requirements are stricter. Also, the cost of failure is much higher — a blackout means the entire island or facility loses power.

The Good News: The Technology Has Matured Fast

Despite those challenges, Island Grid BESS technology has improved a great deal since 2022. Systems now running on remote islands in Australia, the Pacific, and Scandinavia are hitting 99.98% availability. That figure is better than the diesel generators they replaced.

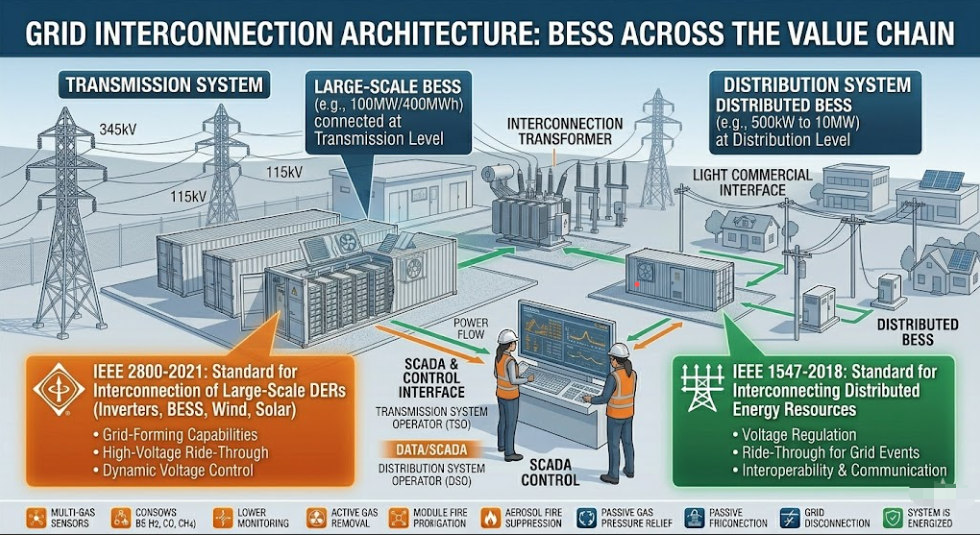

02 — Island Grid BESS vs Grid-Connected BESS: Core Differences

The difference between these two systems matters greatly for engineering and procurement. The table below shows the ten most important distinctions.

| Dimension | Grid-Connected BESS | Island Grid BESS |
|---|---|---|
| Voltage reference | Utility grid provides it | BESS creates it internally |
| Inverter control mode | Grid-following (GFL) | Grid-forming (GFM) required |
| Frequency regulation | Supports grid frequency | Sets the frequency, with no backup |
| Black start | Not typically required | Mandatory |
| Fault current | Utility provides it | BESS must supply it |
| Spinning reserve | Not required | Required at all times |
| Load sensitivity | Low: utility absorbs swings | High: every load step must be matched |
| Renewable integration | Flexible | Precise EMS essential |
| Comms loss tolerance | High | Low: latency affects stability |
| Design complexity | Moderate | High: full power system design needed |

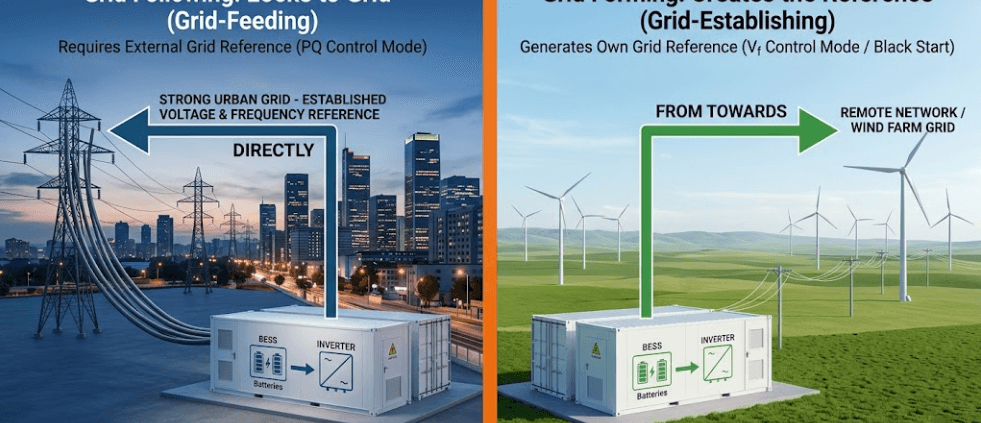

In short: a grid-connected BESS follows the grid. An Island Grid BESS is the grid.

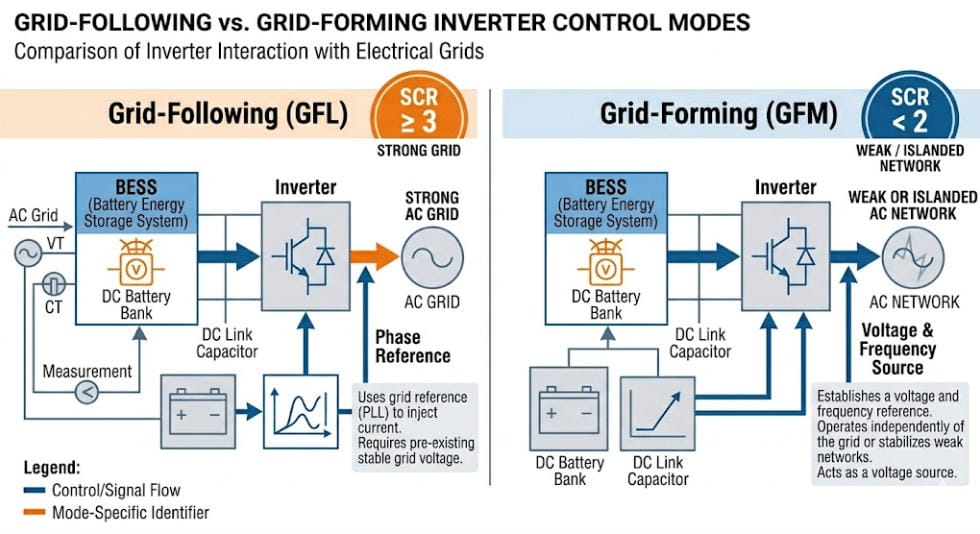

For the full breakdown of inverter control modes, see our guide to grid-forming vs grid-following BESS.

03 — The Four Critical Functions of Island Grid BESS

A well-designed Island Grid BESS must carry out four functions at the same time. These are not extras — they are core requirements.

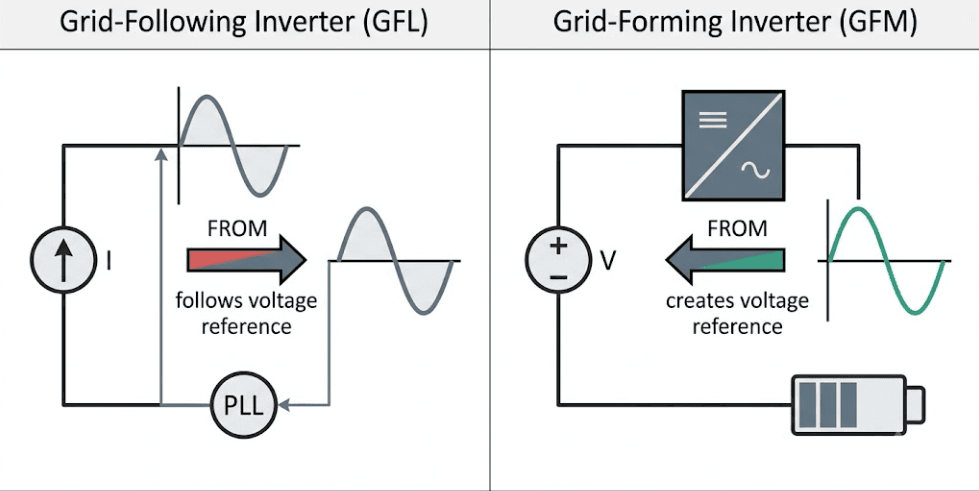

Function 1 — Voltage and Frequency Formation

The BESS inverter must create a stable AC voltage waveform at the nominal grid frequency, typically 50 Hz or 60 Hz, with no external signal to copy. This is the grid-forming function. Without it, nothing on the island can run. That is why grid-forming BESS technology is the baseline spec for any Island Grid BESS project.

Function 2 — Real-Time Power Balance

At every moment, generation must equal consumption. When solar output falls due to cloud, the BESS must discharge the difference right away. When a load switches off, the BESS must absorb the surplus. Otherwise, frequency drifts and the grid becomes unstable.
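The balance rule can be written as one line of arithmetic. The sketch below is illustrative only: the function name and the sign convention (positive means discharge) are assumptions, and the kW figures are made up for the example.

```python
def bess_power_kw(load_kw: float, generation_kw: float) -> float:
    """Power the BESS must exchange so that generation equals consumption.

    Assumed sign convention: positive = discharge, negative = charge.
    """
    return load_kw - generation_kw


# Hypothetical island: load holds at 250 kW while a cloud cuts solar output.
print(bess_power_kw(250, 300))  # -50 -> BESS absorbs a 50 kW surplus
print(bess_power_kw(250, 120))  # 130 -> BESS discharges 130 kW
```

In a real system this balance is enforced by the inverter control loop, not by a scheduled calculation, but the arithmetic is the same.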

Function 3 — Energy Shifting and Overnight Supply

Beyond second-by-second balancing, the BESS must also store enough energy to carry the island through long periods of zero generation. In a solar-only system, that means overnight. In a wind-heavy setup, it can mean multi-day low-wind periods. This need drives the MWh capacity spec — which is separate from the MW power spec.

Function 4 — Black Start and Grid Restoration

If the island grid goes down — due to a fault, a protection trip, or a battery shutdown — the BESS must restart the entire network with no outside help. This black start capability is a must-have for Island Grid BESS. A standard grid-following inverter cannot do it.

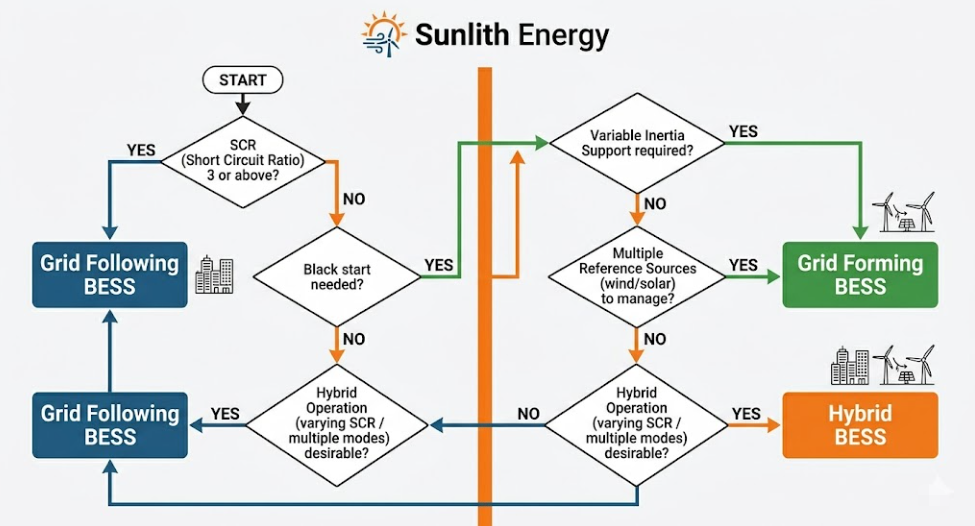

04 — Control Architecture: Why Island Grids Need Grid-Forming BESS

This is the area where most Island Grid BESS projects go wrong. The mistake often shows up late — at commissioning — and it is expensive to fix.

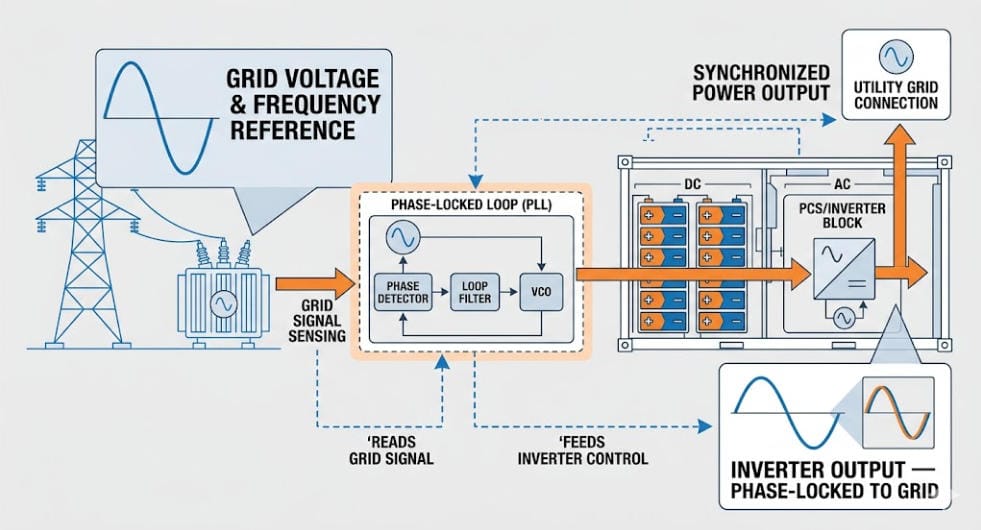

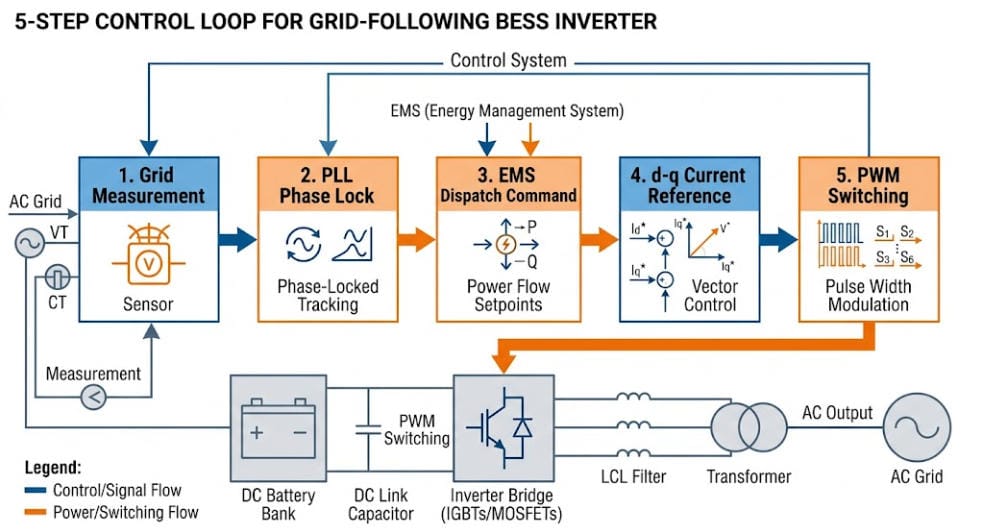

Why Grid-Following Inverters Fail Alone on an Island

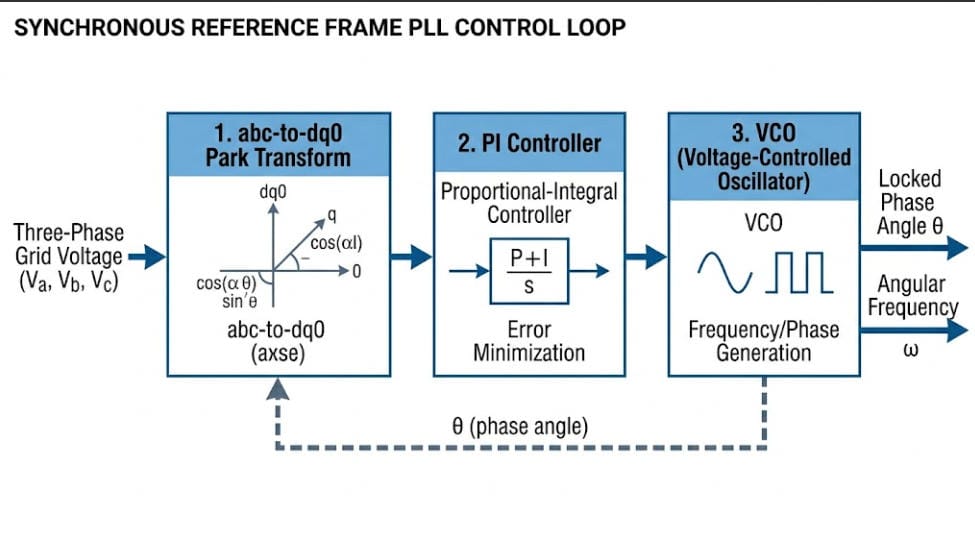

A grid-following BESS uses a Phase-Locked Loop (PLL) to lock onto an existing grid voltage signal. If there is no grid signal — which is always the case at black start — the PLL has nothing to lock to. As a result, the inverter shuts down.

For a grid-connected project, this is fine. The utility is always there as a backup. For an Island Grid BESS, however, there is no utility. The battery is the only power source. So a grid-following inverter alone is not suitable.

Grid-Forming Control: The Right Architecture for Island BESS

A grid-forming inverter creates its own internal voltage and frequency reference. Everything else on the network — loads, other inverters, generators — then syncs to that reference. Because of this, it can:

- Black-start a fully de-energised island network

- Hold stable frequency with no external signal

- Respond to load steps in milliseconds — far faster than a PLL-based inverter

- Keep running during faults that would trip a grid-following inverter

Three Control Strategies: Which One to Specify?

Choosing the right strategy depends on your island’s size, renewable mix, and load profile. Here is how the three main options compare.

Droop Control is the simplest option. It mimics a generator’s governor — it adjusts power output in line with frequency changes. Droop control works well for smaller islands with stable loads and modest renewable penetration.
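As a rough illustration of the governor-style behaviour described above, the sketch below maps a measured frequency to a power command. The 4% droop value, the 50 Hz nominal frequency, and all names are assumptions chosen for the example, not settings from any specific inverter.

```python
def droop_power_kw(freq_hz, p_set_kw, p_rated_kw, f_nom_hz=50.0, droop=0.04):
    """Return the active power command for a measured frequency.

    droop=0.04 means a 4% frequency deviation shifts output by 100% of
    rating (an assumed, illustrative setting).
    """
    deviation_pu = (freq_hz - f_nom_hz) / f_nom_hz
    # Frequency below nominal -> raise output; above nominal -> lower output.
    p_cmd = p_set_kw - (deviation_pu / droop) * p_rated_kw
    # Clamp to the inverter rating (negative values mean charging).
    return max(-p_rated_kw, min(p_rated_kw, p_cmd))


# Hypothetical 650 kW BESS running at a 100 kW set-point.
for f in (50.0, 49.8, 50.2):
    print(f, round(droop_power_kw(f, 100.0, 650.0), 1))
```

A tighter droop (a smaller percentage) gives a stiffer frequency but asks the battery for larger power swings on every disturbance.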

Virtual Synchronous Generator (VSG) goes further. It copies the inertial response of a real synchronous generator. It reacts to both frequency deviation and Rate of Change of Frequency (ROCOF). Because of this, it works best on islands with high renewable penetration, where frequency can shift fast. Moreover, it replicates the behaviour that protection systems were designed around when diesel was the primary source.
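To make the inertial response concrete, here is a minimal sketch of a per-unit swing equation with a droop term, integrated with a simple Euler step after a sudden load increase. It assumes the BESS is the only source on the island. The inertia constant (H = 4 s), the 4% droop, and the function name are illustrative assumptions, not vendor parameters.

```python
def simulate_load_step(step_pu, inertia_h_s=4.0, droop=0.04,
                       f_nom_hz=50.0, dt_s=0.001, t_end_s=5.0):
    """Euler integration of 2H * d(f_pu)/dt = P_droop - P_step.

    step_pu is the load increase as a fraction of BESS rating.
    Returns a list of (time_s, frequency_hz) samples.
    """
    f = f_nom_hz
    trace = []
    for k in range(round(t_end_s / dt_s) + 1):
        trace.append((k * dt_s, f))
        deviation_pu = (f - f_nom_hz) / f_nom_hz
        # Droop term: extra power in proportion to the frequency deviation.
        p_droop_pu = -deviation_pu / droop
        # Net imbalance seen by the virtual rotor.
        imbalance_pu = p_droop_pu - step_pu
        # Virtual inertia limits the rate of change of frequency (ROCOF).
        rocof_hz_per_s = imbalance_pu * f_nom_hz / (2.0 * inertia_h_s)
        f += rocof_hz_per_s * dt_s
    return trace


trace = simulate_load_step(0.2)  # 20% load step
initial_rocof = (trace[1][1] - trace[0][1]) / 0.001
print(round(initial_rocof, 3), "Hz/s initial ROCOF")
print(round(trace[-1][1], 3), "Hz settled frequency")
```

In this simplified model the virtual inertia sets how fast frequency moves at first, while the droop term sets where it settles. A real VSG adds voltage control, current limiting, and damping that this sketch leaves out.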

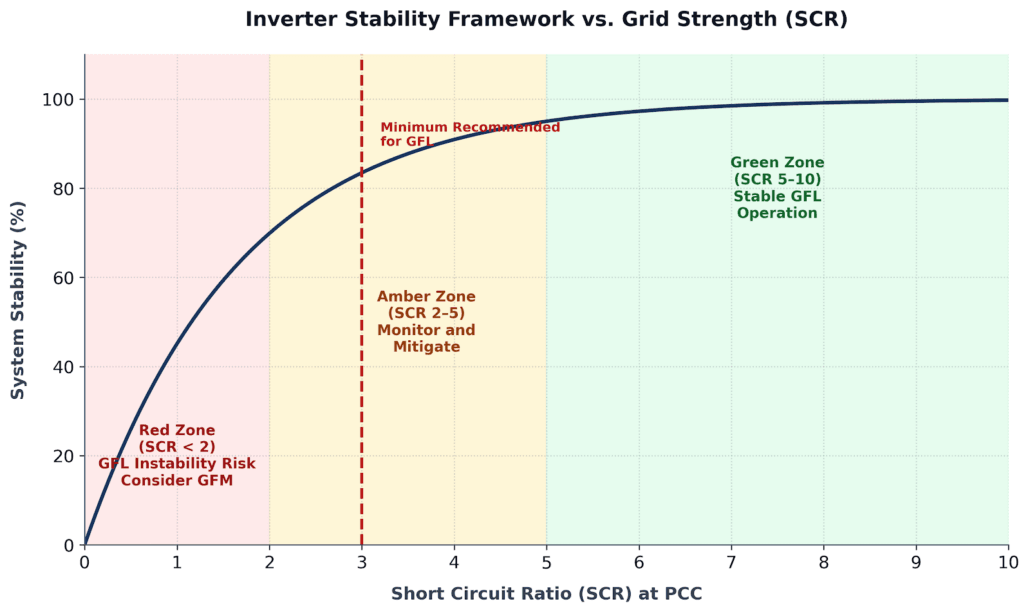

Power Synchronisation Control (PSC) is the most advanced option. Instead of using frequency as the sync signal, it uses active power. This makes it the most stable choice for very weak or very small island grids — especially where the Short Circuit Ratio (SCR) falls below 1.5.

For most Island Grid BESS projects, VSG mode is the best default. It mimics diesel generator behaviour closely, so commissioning and protection coordination are simpler.

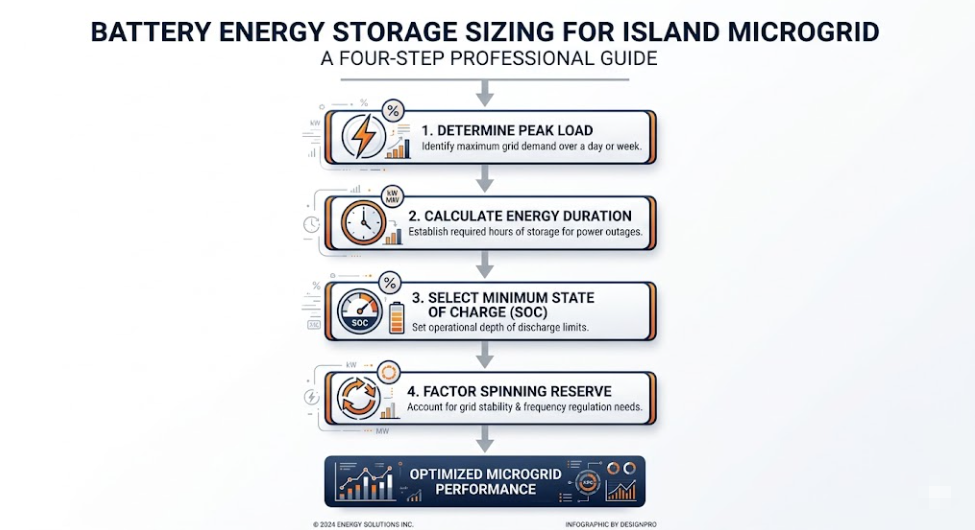

05 — Island Grid BESS Sizing: A Four-Step Method

Sizing an Island Grid BESS involves two dimensions: power capacity (MW or kW) and energy duration (MWh or kWh). Getting either one wrong causes serious operational and financial problems down the line.

Step 1 — Establish Peak Load and Load Profile

First, the BESS must meet peak demand with room to spare. A standard design rule is to size BESS power at 120–130% of peak island load. That extra headroom is your spinning reserve — the buffer that stops frequency from collapsing when demand spikes.

Example: An island with 500 kW peak demand needs a BESS rated at 600–650 kW minimum.
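The 120–130% rule is easy to script for quick scoping. The sketch below simply applies the two percentages from the design rule; the function name is an assumption.

```python
def bess_power_range_kw(peak_load_kw, low_pct=120, high_pct=130):
    """Return (minimum, maximum) BESS power rating from the 120-130% rule."""
    return peak_load_kw * low_pct / 100, peak_load_kw * high_pct / 100


low, high = bess_power_range_kw(500)
print(f"Specify {low:.0f}-{high:.0f} kW for a 500 kW peak load")
```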

Step 2 — Determine Energy Duration Requirements

Next, consider how long the BESS must run on stored energy alone. For a solar-only island, that is typically 10–14 hours overnight. For a mixed solar-wind island, it can stretch to 48–72 hours during low-generation periods.

Design rule: Size the BESS to carry 100% of average island load through the worst-case zero-generation window. Then add a 20% safety margin on top.

Worked example — solar-only island, 200 kW average load, 12-hour overnight period:

- Base energy: 200 kW × 12 h = 2,400 kWh

- Plus 20% margin: 2,400 × 1.2 = 2,880 kWh usable

- Adjusted for LFP 90% DoD: 2,880 ÷ 0.90 = 3,200 kWh nameplate
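The same three steps can be captured in a small helper, which makes it easy to rerun the calculation for other load and duration assumptions. The function and parameter names are illustrative.

```python
def nameplate_energy_kwh(avg_load_kw, hours, margin_pct=20, dod_pct=90):
    """Return (base, usable, nameplate) energy in kWh.

    base      = average load x zero-generation window
    usable    = base plus safety margin
    nameplate = usable divided by the allowed depth of discharge
    """
    base = avg_load_kw * hours
    usable = base * (100 + margin_pct) / 100
    nameplate = usable * 100 / dod_pct
    return base, usable, nameplate


base, usable, nameplate = nameplate_energy_kwh(200, 12)
print(base, usable, nameplate)  # 2400 2880.0 3200.0
```

Changing `hours` to 48 or 72 shows how quickly multi-day autonomy drives up the nameplate figure.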

Step 3 — Define State of Charge Operating Bands

Unlike a grid-connected BESS, Island Grid BESS has no utility backup if the battery runs low. SoC management must therefore be strict:

- Minimum SoC: 20% — load shedding starts below this point

- Maximum SoC: 95% — renewable generation is curtailed above this level

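- Normal cycling band: 20–95%, which is 75% of nameplate. If the system is held to this band, use 0.75 rather than 0.90 as the usable fraction in Step 2.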

- Emergency reserve: Keep 10% SoC set aside exclusively for black-start restoration
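A simple way to picture these bands is as a lookup from SoC to EMS action. The sketch below is one possible reading of the thresholds above. Treating the bottom 10% as a hard floor that loads may not draw on is an assumption, as are the action labels.

```python
def soc_action(soc_pct):
    """Map state of charge (percent) to an EMS action using the bands above."""
    if soc_pct <= 10:
        # Assumed interpretation: this energy is held back for black start.
        return "black_start_reserve"
    if soc_pct < 20:
        return "shed_load"
    if soc_pct > 95:
        return "curtail_renewables"
    return "normal"


for soc in (8, 15, 50, 97):
    print(soc, soc_action(soc))
```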

Step 4 — Define Spinning Reserve Allocation

Finally, set your spinning reserve. This is the share of BESS capacity that stays ready but does not discharge. It must be large enough to cover the biggest single generation loss on the island without letting frequency fall below relay trip thresholds.

Rule of thumb: Spinning reserve ≥ the rated output of the largest single renewable unit on the island.
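As a quick check of this rule, the sketch below compares the BESS's unused power headroom with the largest single renewable unit. The names and the kW figures are hypothetical.

```python
def spinning_reserve_ok(bess_rated_kw, bess_output_kw, largest_unit_kw):
    """True if unused BESS power can cover the loss of the largest unit."""
    headroom_kw = bess_rated_kw - bess_output_kw
    return headroom_kw >= largest_unit_kw


# Hypothetical 650 kW BESS with a 250 kW PV inverter as the largest unit.
print(spinning_reserve_ok(650, 300, 250))  # True: 350 kW of headroom
print(spinning_reserve_ok(650, 500, 250))  # False: only 150 kW of headroom
```

This checks power only. The BESS also needs enough stored energy to sustain that output until generation recovers or loads are shed.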

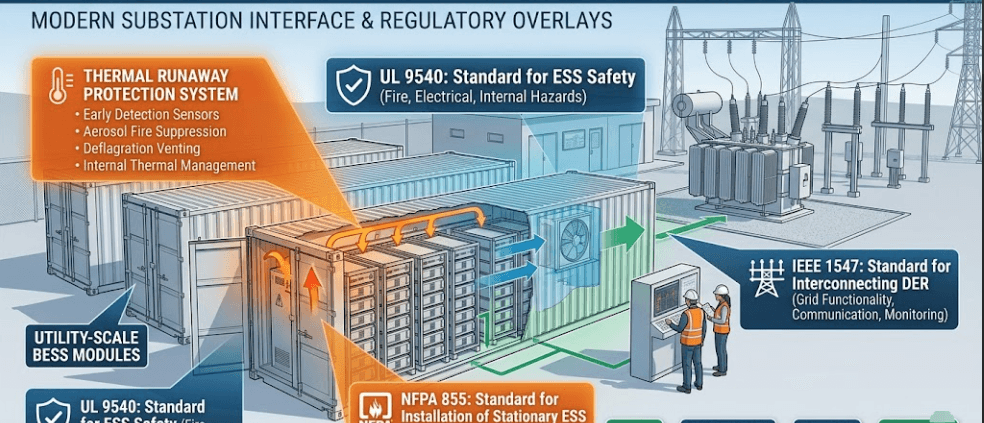

06 — Battery Chemistry: Why LFP Dominates Island Grid BESS in 2026

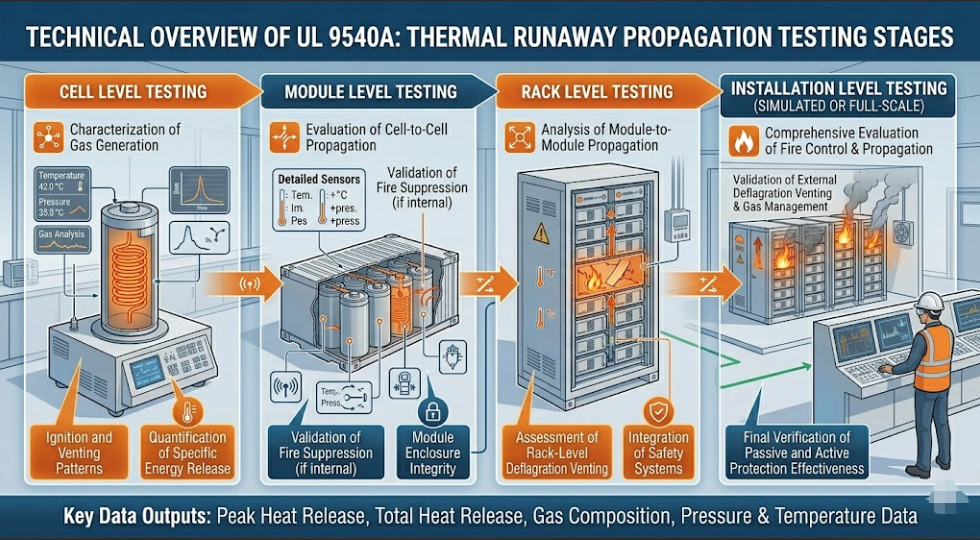

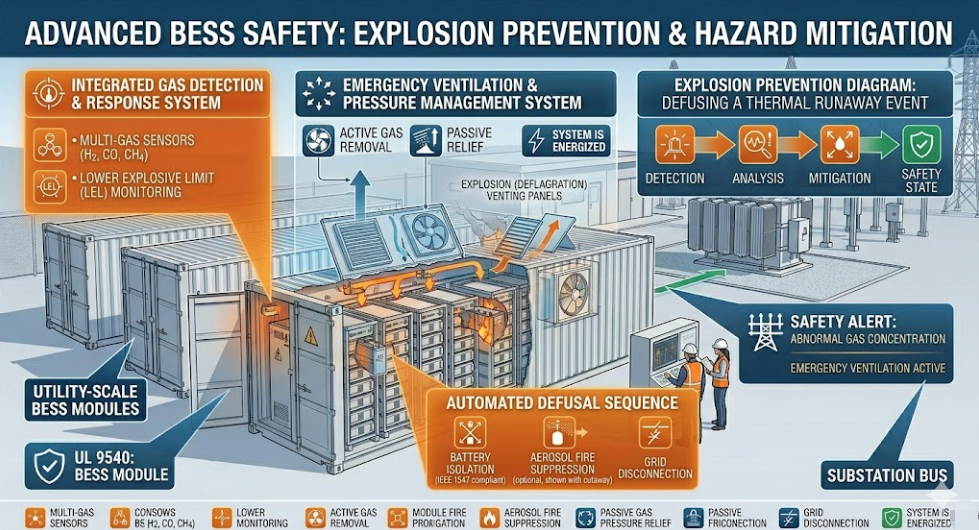

Battery chemistry for Island Grid BESS has largely settled on one answer. As of 2026, Lithium Iron Phosphate (LFP) accounts for about 95% of new island grid BESS procurement globally. That figure comes from BloombergNEF and IEA tracking data. The reasons make sense for island grid conditions specifically.

Why LFP Wins for Island Grid BESS

Thermal stability is the top reason. Many island grid sites sit in tropical climates where ambient temperatures exceed 40°C. LFP cells have a thermal runaway threshold of around 270°C. NMC cells, by contrast, run into trouble at 150–180°C. Furthermore, LFP releases far less heat if a cell does fail. In a remote location where fire response is slow, that difference is critical.

Cycle life is the second major factor. Island Grid BESS systems cycle daily, often deeply. LFP cells rated for 4,000–6,000 full cycles at 80% DoD give 10–15 years of service before capacity augmentation is needed. NMC degrades faster under the same conditions.
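A back-of-envelope conversion shows where the service-life range comes from. The sketch assumes one full cycle per day, which is an assumption for illustration. Real sites cycle more or less often, and calendar ageing also plays a part.

```python
def service_years(cycle_life, cycles_per_day=1.0):
    """Years until the rated cycle count is used up."""
    return cycle_life / (cycles_per_day * 365)


for cycles in (4000, 6000):
    print(cycles, "cycles ->", round(service_years(cycles), 1), "years")
```

At one cycle per day, 4,000–6,000 cycles works out to roughly 11–16 years, broadly in line with the 10–15 year range quoted above.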

Cost per cycle has also shifted in LFP’s favour. LFP manufacturing capacity expanded a great deal between 2022 and 2025. As a result, prices dropped, and the per-cycle economics are now clearly better for high-cycle island grid use.

Simpler thermal management is a practical bonus. LFP is less sensitive to temperature than NMC. Therefore, the HVAC system can be simpler — an advantage on remote islands where air conditioning maintenance is hard to schedule.

The one exception: very space-constrained sites, such as offshore platforms, may justify NMC for its higher energy density per cubic metre. In all other island grid cases, however, LFP is the correct default.

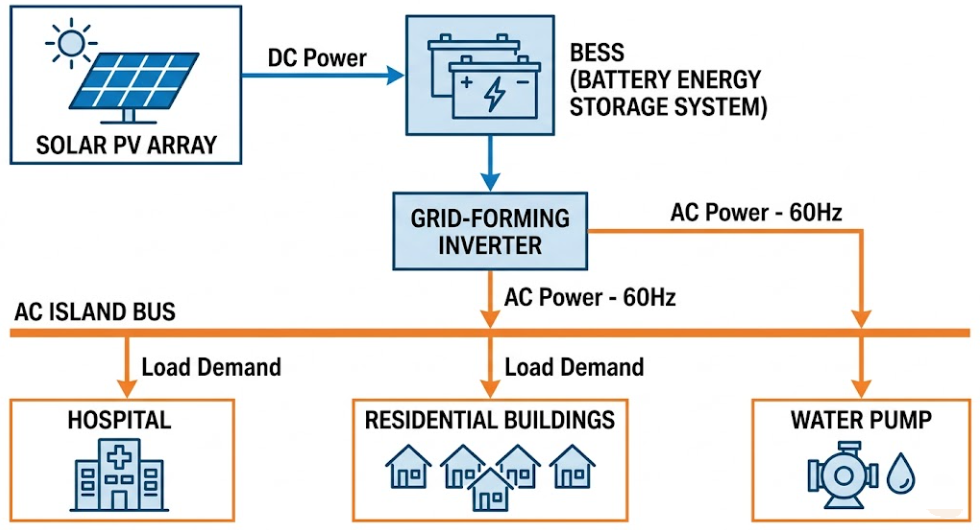

07 — Solar-Plus-BESS Island Grid Architecture

Solar-plus-BESS is the most common Island Grid BESS setup. It also has the longest track record in the field. Solar PV replaces diesel as the primary energy source. The BESS then provides grid stability and overnight energy supply.

AC-Coupled vs DC-Coupled: Which Is Right for Your Project?

DC-coupled architecture links the solar array directly to the BESS DC bus via a charge controller. The solar array and battery share the same inverter. This approach captures energy before conversion losses. It also uses solar power that would otherwise be clipped and wasted. As a result, DC-coupled systems typically cut installed cost by 5–8% and improve overall round-trip efficiency.

AC-coupled architecture connects the solar inverter to the island AC bus. The BESS connects to the same bus through a separate inverter. This setup is more flexible. Existing diesel generators integrate more easily because they simply plug into the same AC bus. For this reason, AC-coupled is usually the better choice for retrofit projects.

In summary: use DC-coupled for greenfield Island Grid BESS projects with high solar penetration. Use AC-coupled when you are transitioning away from diesel and need to keep the generators running during the process.

Renewable Penetration Targets by Project Stage

| Renewable Penetration | BESS Configuration | Diesel Role |
|---|---|---|
| Up to 50% | BESS supports frequency; diesel is primary | Diesel runs continuously |
| 50–80% | BESS is primary; diesel backs up | Diesel starts on demand |
| 80–100% | BESS is sole grid-forming source | Diesel on emergency standby |
| 100% + storage | Full diesel replacement | Diesel removed or cold standby |

At 80–100% renewable penetration, grid-forming BESS technology becomes operationally essential. At that point, the diesel generator can no longer serve as the frequency reference.

08 — Wind-Plus-BESS Island Grid Architecture

Wind-plus-BESS island grids work differently from solar setups. In many island locations, they also perform better. Wind is not limited to daylight hours. Moreover, many islands have steady trade winds that deliver higher annual capacity factors than solar PV.

Three Unique Challenges of Wind-Plus-BESS Island Grids

Rapid generation variability is the first challenge. Wind output can shift a great deal within seconds due to gusts or direction changes. Consequently, the BESS must respond faster to wind variability than it typically does to solar variability. Solar output changes more gradually, except during sudden cloud shadow events.

Frequency interaction with wind turbines is the second challenge. Modern variable-speed wind turbines use power electronics interfaces. This makes them inverter-based resources (IBR) — not rotating machines with physical inertia. Therefore, when every generation source on the island is IBR, the Island Grid BESS must provide all synthetic inertia on its own. That is a harder job than in systems where some diesel generation is still running.

Extended low-wind periods are the third challenge. Unlike solar droughts, which reset each morning, wind droughts can run for multiple days. As a result, energy duration sizing for wind-plus-BESS island grids must account for multi-day low-generation periods. This pushes BESS capacity much higher than in equivalent solar designs.

For more on how inverter-based resources interact with Island Grid BESS, see our guide on grid-forming BESS technology and the grid-forming vs grid-following BESS comparison.

09 — Diesel Hybrid Island Grids: The Three-Phase Transition Path

Most Island Grid BESS projects in 2026 are not greenfield builds. Rather, they are retrofits of existing diesel-dependent island grids. Understanding the three phases of transition is therefore essential for developers and asset owners.

Phase 1 — Diesel-Dominant with BESS Support (0–40% Renewable)

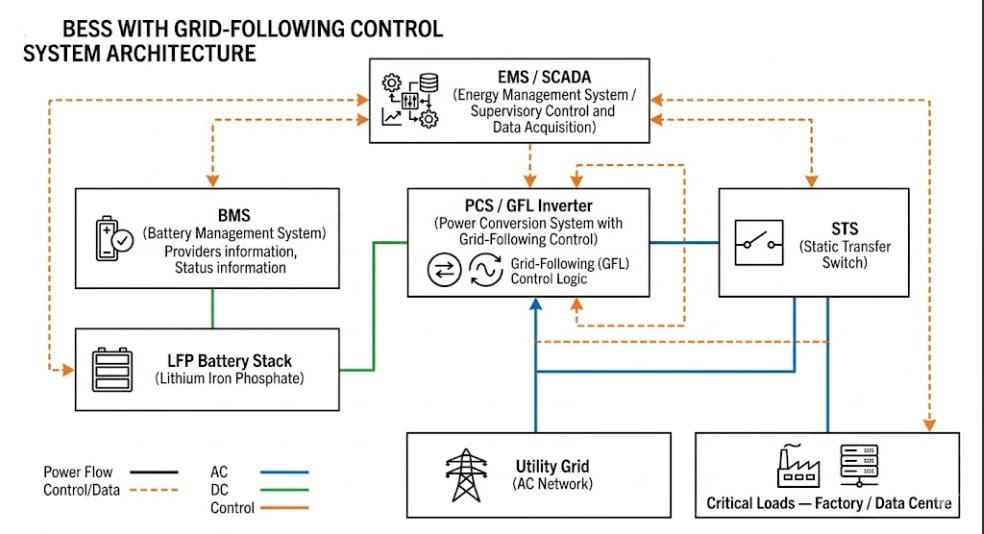

In this first phase, diesel generators still provide the voltage and frequency reference. The BESS operates in grid-following mode. It handles peak shaving, frequency regulation, and spinning reserve. As a result, diesel runtime drops, fuel costs fall, and maintenance intervals lengthen. This phase only needs a grid-following BESS. It is also the simplest and cheapest entry point.

Typical outcomes: 20–35% diesel fuel reduction; 30–40% fewer generator starts.

Phase 2 — Diesel-Backup with BESS Primary (40–80% Renewable)

In this second phase, solar or wind capacity grows. The BESS then takes over as the main generation source for larger parts of each day. Diesel generators shift from continuous running to demand-start mode. At this stage, the BESS inverter must also be able to switch into grid-forming mode whenever the diesel is offline. This requires either a grid-forming capable inverter or a static transfer switch.

Typical outcomes: 50–70% diesel fuel reduction; diesel-on to diesel-off transitions in under 10 seconds.

Phase 3 — Full Diesel Replacement (80–100% Renewable)

In this third and final phase, diesel generators move to emergency-only standby or are removed. The Island Grid BESS runs continuously as the sole grid-forming source. Before commercial operation, the system needs full grid-forming BESS specification and comprehensive black start testing.

Typical outcomes: 85–95% diesel fuel reduction; full energy independence with diesel as last-resort backup only.

10 — Real-World Island Grid BESS Case Studies

Case Study 1 — El Hierro, Canary Islands (Spain)

El Hierro has run a wind-hydro-BESS hybrid island grid since 2014. Since then, it has steadily raised renewable penetration to above 90% for extended periods. The BESS absorbs wind variability and manages the link between turbines and pumped hydro storage. Peak demand on the island is about 7 MW. In short, El Hierro shows that 100% renewable island grids are viable at community scale.

Key results: Over 90% renewable penetration sustained over multiple consecutive days; diesel fuel use cut by more than 60%.

External reference: El Hierro Gorona del Viento — IRENA Case Study

Case Study 2 — Flinders Island, Australia

Flinders Island in Tasmania installed a solar-plus-BESS system that has cut diesel dependency sharply. The Island Grid BESS runs in grid-forming mode. Diesel generators have moved to demand-start backup. The Hydro Tasmania-managed grid shows that grid-forming BESS can serve as the primary voltage and frequency source for a real remote community.

Key results: Diesel use down roughly 55%; Island Grid BESS availability above 99.5% since commissioning.

External reference: ARENA Australia — Grid-Forming Battery Revolution

Case Study 3 — Hospital Microgrid, Lombok (Indonesia)

Research published in Energy and Buildings (2025) modelled a PV-BESS microgrid for a hospital on Lombok Island. The study tested a 3-day outage scenario. A correctly sized Island Grid BESS — supplying 7 MWh per day of critical load — maintained 100% hospital reliability with no diesel. The findings highlight the life-critical value of Island Grid BESS beyond day-to-day economics.

Case Study 4 — Mining Operation, Western Australia

A remote mining site replaced three diesel gensets with a solar Island Grid BESS. The system uses VSG grid-forming control. Droop settings were calibrated to match the frequency response that the mining equipment’s protection relays were designed around. In year one, diesel use fell by 78%. By year two, after a solar expansion, diesel was phased out entirely.

11 — Island Grid BESS Sizing Reference Table

Use the table below as a starting point for project scoping. All figures assume LFP chemistry, 90% depth of discharge, BESS power at 130% of peak load (the upper end of the Step 1 range), and a solar-plus-BESS setup with 12-hour overnight supply duration.

| Island Peak Load | Min BESS Power | Min BESS Energy | Typical Solar PV | Target Renewable % |
|---|---|---|---|---|
| 50 kW | 65 kW | 400 kWh | 80 kWp | 80% |
| 100 kW | 130 kW | 800 kWh | 150 kWp | 80% |
| 250 kW | 325 kW | 2,000 kWh | 380 kWp | 80% |
| 500 kW | 650 kW | 4,000 kWh | 750 kWp | 80% |
| 1 MW | 1.3 MW | 8 MWh | 1.5 MWp | 80% |
| 5 MW | 6.5 MW | 40 MWh | 7.5 MWp | 80% |
| 10 MW | 13 MW | 80 MWh | 15 MWp | 80% |

These are indicative scoping figures only. Final sizing must be based on measured load profiles, site-specific resource data, and full power systems modelling. Contact SunLith Energy for a project-specific Island Grid BESS analysis.
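For peak loads that fall between the table rows, note that every row uses the same two ratios: minimum power is 1.3 times peak load, and minimum energy is 8 kWh per kW of peak load. The sketch below applies those ratios. The function name is an assumption, and the solar PV column is left out because its ratio varies slightly from row to row.

```python
def scoping_row(peak_load_kw):
    """Interpolate the reference table using its two constant ratios."""
    return {
        "min_power_kw": peak_load_kw * 130 / 100,  # 1.3 x peak load
        "min_energy_kwh": peak_load_kw * 8,        # 8 kWh per kW of peak
    }


print(scoping_row(500))  # matches the 500 kW row: 650 kW, 4,000 kWh
print(scoping_row(350))  # an in-between size not listed in the table
```

Like the table itself, this is a scoping aid only and does not replace modelling against measured load data.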

12 — Financial Case: Island Grid BESS vs Diesel Over 25 Years

The financial case for Island Grid BESS has shifted a great deal since 2022. LFP battery costs have fallen to $90–130/kWh installed in competitive markets. Meanwhile, diesel delivery costs to remote islands have risen — when you include logistics, shipping, and storage. Together, these trends make Island Grid BESS the economically dominant choice in almost every isolated grid context.

The Diesel Costs That Most Analyses Miss

Simple comparisons often undercount the true cost of diesel on island grids. A full cost assessment must include all of the following:

- Fuel logistics: Diesel price plus shipping, handling, and on-island storage

- Generator replacement: Diesel gensets need full replacement every 15,000–25,000 running hours

- Maintenance and travel: Regular servicing requires technicians to travel by air or sea to remote sites

- Environmental liability: Diesel storage creates spill risk, especially in ecologically sensitive island areas

- Carbon costs: Where carbon pricing applies, diesel grids face costs that grow each year

Why Island Grid BESS Wins on Lifetime Cost

Island Grid BESS offers several clear cost advantages over diesel. First, there is no ongoing fuel cost — solar and wind energy have zero marginal cost. Second, LFP BESS have no moving parts, so maintenance is far cheaper than for diesel generators. Third, modern LFP BESS are built for 20–25-year project life. Battery capacity augmentation at year 10–12 is the main lifecycle cost event. Finally, for islands weighing a submarine cable connection against Island Grid BESS, the battery solution is typically cheaper at scales below 10 MW peak demand.

Indicative 25-Year Cost Comparison: 500 kW Island Grid

| Cost Item | Diesel Island Grid | Solar + Island Grid BESS |
|---|---|---|
| Fuel cost per year (Year 1) | $350,000–500,000 | $0 |
| Annual maintenance | $80,000–120,000 | $15,000–25,000 |
| Capital replacement at Year 10 | $400,000–600,000 (gensets) | $150,000–250,000 (augmentation) |
| Carbon cost exposure | High and rising | None |
| 25-year NPV advantage | Baseline | $3–6 million in BESS’s favour |

These figures are indicative, based on 2026 market pricing. Site-specific financial modelling is required before any investment decision.
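To see how the annual lines in the table build into a lifetime figure, the sketch below discounts a constant annual saving over 25 years. The 8% discount rate is an assumption. The calculation also ignores upfront capital cost, fuel price escalation, and the Year 10 replacement items, so it does not reproduce the NPV row above.

```python
def present_value(annual_amount, rate, years):
    """Present value of a constant annual amount paid at each year end."""
    return sum(annual_amount / (1 + rate) ** t for t in range(1, years + 1))


# Low and high ends of the table: diesel fuel + maintenance vs BESS maintenance.
diesel_opex = (350_000 + 80_000, 500_000 + 120_000)
bess_opex = (15_000, 25_000)
rate, years = 0.08, 25  # assumed discount rate and study period

for diesel, bess in zip(diesel_opex, bess_opex):
    saving = present_value(diesel - bess, rate, years)
    print(f"PV of annual operating saving: ${saving:,.0f}")
```

A full model would subtract the solar and BESS capital cost and add the replacement events on both sides before drawing any conclusion.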

13 — Key Technical Challenges and Practical Solutions

Challenge 1 — Protection Coordination

Standard relay settings are built around the fault current that synchronous generators produce. Island Grid BESS inverters, however, typically produce lower fault currents — around 1.0–1.2 per-unit versus 5–10 per-unit for a generator. As a result, relay settings must be reconfigured to match the BESS fault current range.

Solution: Run a full protection coordination study before specifying relay settings. Some grid-forming BESS inverters now offer fault current up to 1.5–2.0 per-unit. That helps improve protection discrimination and simplifies the relay setup.
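Per-unit figures become more tangible once converted to amps. The sketch below uses the standard three-phase relation I = S / (√3 × V). The 400 V line-to-line voltage and the 650 kVA rating are assumed values for illustration.

```python
import math


def rated_current_a(s_rated_kva, v_line_to_line_v):
    """Three-phase rated current in amps: I = S / (sqrt(3) * V_LL)."""
    return s_rated_kva * 1000 / (math.sqrt(3) * v_line_to_line_v)


def fault_current_a(s_rated_kva, v_line_to_line_v, fault_pu):
    """Fault current in amps for a given per-unit fault capability."""
    return fault_pu * rated_current_a(s_rated_kva, v_line_to_line_v)


# Hypothetical 650 kVA source on a 400 V bus.
for label, pu in (("BESS at 1.2 pu", 1.2), ("BESS at 2.0 pu", 2.0),
                  ("generator at 5 pu", 5.0)):
    print(label, round(fault_current_a(650, 400, pu)), "A")
```

The gap between the inverter and generator figures is why overcurrent pickups set for diesel operation may never operate when the BESS is the only source.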

Challenge 2 — Large Load Steps on Small Island Grids

On a small Island Grid BESS under 500 kW, a single large motor — a pump, an air conditioner, a welding set — can represent a large share of total load. Each start is a sudden demand that the BESS must absorb without letting frequency collapse.

Solution: Specify VSG mode with tight droop settings and a low-pass filter on the load measurement. For large motors, add soft starters or variable frequency drives. These reduce inrush current sharply and make each load step manageable.

Challenge 3 — Battery Degradation in Hot Climates

Island Grid BESS sites in tropical areas face high ambient temperatures. Without good thermal management, LFP cell ageing speeds up significantly.

Solution: Use active thermal management to keep cells between 20°C and 30°C. Do not rely on passive cooling alone in any tropical installation. Size the HVAC system for the worst-case ambient temperature, not the annual average.

Challenge 4 — Energy Management System Latency

On an island grid, the delay between a measured grid event and the BESS response directly affects frequency stability. Grid-connected BESS systems can tolerate 500–1,000 ms EMS response times. Island Grid BESS, however, needs inverter-level response within 20–50 ms. The EMS should only handle the slower strategic scheduling.

Solution: Specify inverter-integrated droop and VSG control that runs autonomously at the hardware level. The EMS then updates set-points on a scheduling cycle measured in minutes — not milliseconds.

14 — Frequently Asked Questions

What is Island Grid BESS and how does it differ from standard BESS?

Island Grid BESS must act as the sole voltage and frequency reference on an isolated network. There is no utility grid as backup. This requires grid-forming inverter control, black start capability, and continuous power balance management. In contrast, a standard grid-connected BESS needs none of these. The engineering scope is therefore much broader. For the full inverter control comparison, see our guide on grid-forming vs grid-following BESS.

Can a grid-following BESS be used on an island grid?

Not as the sole power source. A grid-following inverter needs an existing voltage reference to operate. On an island grid with no diesel generator running, that reference does not exist. However, a grid-following BESS can participate in an island grid if a diesel generator or grid-forming BESS is already providing the reference voltage. For the full technical details, see our guide to grid-following BESS.

How many hours of storage does an Island Grid BESS need?

The minimum is typically 4 hours for a solar-heavy island with a strong, consistent solar resource. However, 8–16 hours is more common for reliable overnight supply. Furthermore, systems in high-latitude or wind-heavy locations may need 24–72 hours to cover extended low-generation periods. Sizing must always be based on site-specific load profiles and measured generation data.

What battery chemistry is best for Island Grid BESS?

LFP (Lithium Iron Phosphate) is the right choice for almost all Island Grid BESS projects in 2026. Its thermal stability, 4,000–6,000 cycle life, and safety profile make it clearly better than NMC for remote island sites where fire response and maintenance access are limited.

How does Island Grid BESS handle a complete power failure?

Through black start. A correctly specified grid-forming Island Grid BESS can energise the island AC network from a fully dead state using stored battery energy alone. The inverter creates a stable AC voltage and then reconnects loads in a controlled sequence — starting with critical loads first. Diesel generators, if retained, can then sync to the re-established BESS reference.
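The controlled reconnection sequence can be sketched as a priority-ordered pickup that stops adding a load once it would exceed BESS power. The load names, priorities, and kW values are hypothetical, and a real restoration plan would also stagger motor starts and check SoC.

```python
def black_start_sequence(loads, bess_power_kw):
    """Energise loads in priority order without exceeding BESS power.

    loads: list of (name, kw, priority); a lower priority number is more
    critical. Returns (energised_names, deferred_names, total_kw).
    """
    energised, deferred, total_kw = [], [], 0.0
    for name, kw, _priority in sorted(loads, key=lambda load: load[2]):
        if total_kw + kw <= bess_power_kw:
            energised.append(name)
            total_kw += kw
        else:
            deferred.append(name)  # wait for more generation to come online
    return energised, deferred, total_kw


loads = [
    ("ice plant", 300, 3),
    ("hospital", 120, 1),
    ("residential feeder A", 200, 2),
    ("water pumps", 80, 1),
]
print(black_start_sequence(loads, bess_power_kw=450))
```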

Can renewable energy cover 100% of an island’s power needs with Island Grid BESS?

Yes — and real-world projects already prove it. Island grids are operating at 90–100% renewable penetration today. However, the remaining challenge is cost. Storing enough energy to cover extended zero-generation periods requires a large BESS. For most islands, 80–90% renewable penetration is the economically optimal starting point. Full diesel elimination follows as storage costs continue to fall.

What does an Island Grid BESS project typically cost?

In 2026, turnkey 4-hour LFP Island Grid BESS systems are priced at about $180–260/kWh installed in European and Pacific markets. At that range, a 500 kW / 4,000 kWh system represents a BESS capital cost of $720,000–$1,040,000, before solar, civil works, and EMS. This example is an 8-hour system, so the 4-hour benchmark is only a rough guide to its per-kWh price. In high diesel-cost island markets, payback typically falls within 5–8 years.
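The capital cost range above is a straight multiplication of energy capacity by the per-kWh price range. The sketch below makes it easy to repeat for other system sizes. The function name is an assumption, and the result excludes solar, civil works, and EMS, as in the text.

```python
def bess_capex_usd(energy_kwh, low_usd_per_kwh=180, high_usd_per_kwh=260):
    """Return (low, high) BESS capital cost for a given energy capacity."""
    return energy_kwh * low_usd_per_kwh, energy_kwh * high_usd_per_kwh


low, high = bess_capex_usd(4000)
print(f"${low:,} to ${high:,}")  # $720,000 to $1,040,000
```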

15 — Related Articles on SunLith Energy

The following SunLith Energy guides provide the deeper technical detail that supports Island Grid BESS design and procurement:

- Grid-Forming vs Grid-Following BESS: What Is the Difference? — The essential inverter control comparison for any island grid project.

- Grid-Forming BESS Technology: Complete Guide — Full technical deep dive on VSG, droop, and PSC control for island grid applications.

- Grid-Following BESS: Comprehensive Technical Guide — The definitive reference for grid-following architecture and its island grid limitations.

- C&I BESS with Renewable Energy: Solar Self-Consumption and Beyond — Relevant for C&I island grid operators optimising behind-the-meter renewable integration.

- BESS Peak Shaving and Demand Charge Reduction — Demand management strategies applicable to island grids with commercial and industrial loads.

External References

- IRENA — Renewable Energy for Islands — The leading global body on island renewable energy transition and Island Grid BESS deployment.

- ARENA Australia — Grid-Forming Battery Revolution — Australia’s experience with grid-forming BESS at utility and island grid scale.

- NREL — Grid-Scale Battery Storage: FAQs — Foundational reference for BESS sizing methodology applicable to island grid projects.

- Nature Scientific Reports — Optimal Sizing of BESS in Islanded Microgrids (2025) — Peer-reviewed research on frequency droop sizing for Island Grid BESS.

- IEA — Batteries and Secure Energy Transitions — Global battery storage market data and outlook for 2025–2030.

SunLith Energy provides technical guidance, project development support, and commercial BESS solutions for island grid, microgrid, and utility-scale energy storage projects. Contact our engineering team for project-specific Island Grid BESS sizing and design support.

Grid Forming vs Grid Following BESS: What Is the Difference?

Grid forming vs grid following BESS is the most important inverter control decision in battery storage today. In April 2025, Spain and Portugal lost power within minutes. The cascade knocked out supply across most of the Iberian Peninsula. Much of the post-event debate focused on one weakness: heavy reliance on grid-following inverters, with too few grid-forming resources to help arrest the collapse.

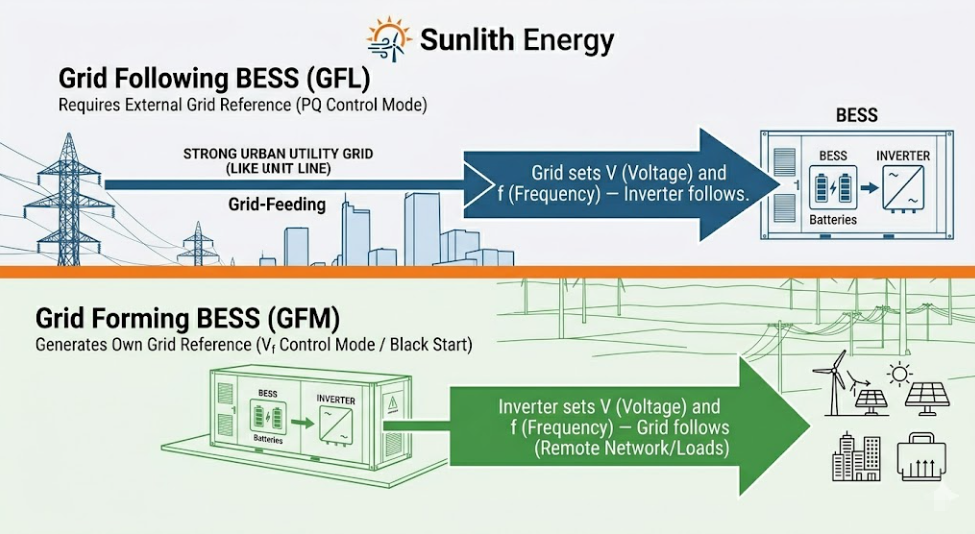

What is the difference between grid forming and grid following BESS?

The fundamental difference between grid forming and grid following BESS lies in their reference source. A grid following (GFL) BESS operates as a controlled current source. It requires an existing, stable grid voltage and frequency to lock onto via a Phase-Locked Loop (PLL). Conversely, a grid forming (GFM) BESS acts as an independent voltage source. By synthesising its own internal reference, it can operate on weak grids or completely isolated networks.

That event changed the industry conversation permanently. For developers, engineers, and asset owners, this choice now carries regulatory, financial, and grid-safety consequences — not just technical ones.

This guide covers everything you need to make the right decision. We break down how each inverter type works before comparing them head-to-head. From there, you will explore optimal applications, hybrid architectures, 2025 mandates, and real-world case studies.

Most developers already know both technologies exist. So start here, not with theory. Answer these five questions — each answer points to the right grid forming vs grid following BESS choice.

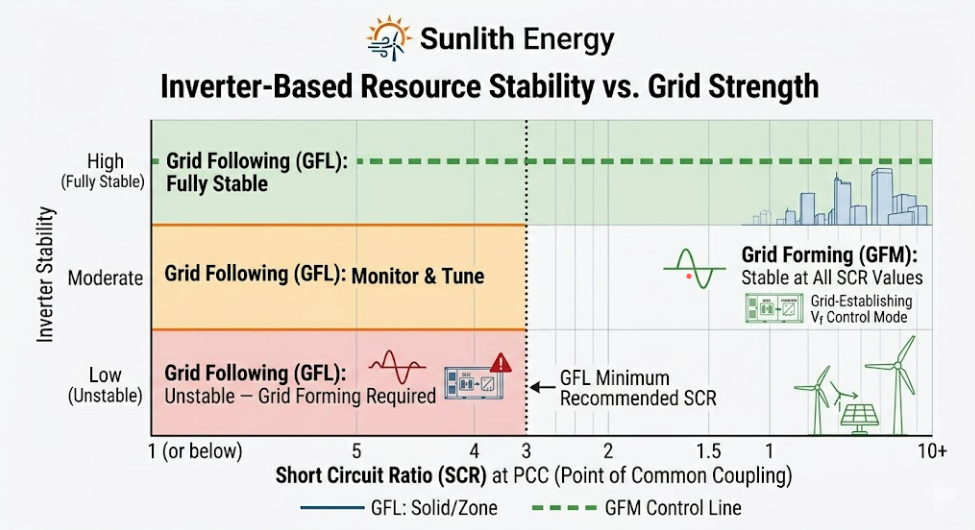

Question 1 — Short Circuit Ratio (SCR): Choosing Grid Forming vs Grid Following BESS

Question 2 — Does Your BESS Project Need Black Start or Islanding?

- No — grid following BESS is sufficient

- Occasional backup power only — grid following plus STS works well

- Sustained islanding or off-grid — grid forming BESS is required

Question 3 — What Is the Renewable Penetration at Your Grid Connection?

- Below 50% IBR penetration — grid following BESS is fine

- 50 to 70% IBR penetration — hybrid grid forming and grid following is recommended

- Above 70% IBR penetration — grid forming preferred; may be mandated

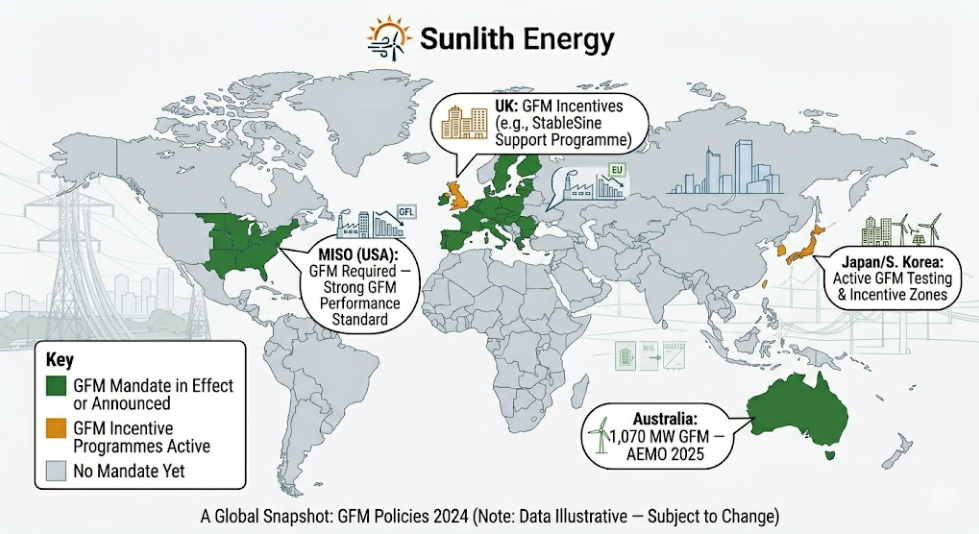

Question 4 — Is a Grid Forming BESS Mandate Active in Your Jurisdiction?

- USA (MISO territory), EU, or Australia — check mandate applicability before specifying

- Other markets — monitor; mandates are spreading globally

- No mandate yet — grid following remains fully eligible today

Question 5 — What Is Your BESS Project Timeline?

- 3 to 5 years, strong urban grid, C&I focus — grid following BESS maximises ROI today

- 10 or more years, utility scale — future-proof with grid forming or hybrid

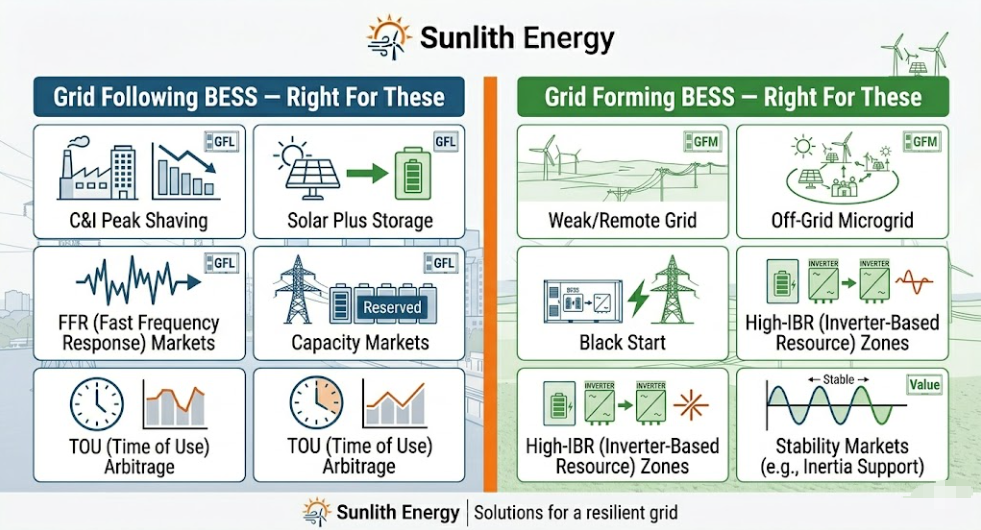

Bottom line: Strong urban grid + no islanding + C&I project = grid following BESS. Weak grid + black start + high-IBR or mandate zone = grid forming BESS. Utility-scale with a long horizon = specify grid forming firmware from Day 1.
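The five questions above can be sketched as a small screening function. This is only an illustration, not a design tool: the `ProjectInputs` fields and the `recommend_control_mode` name are hypothetical, the SCR thresholds (3.0 and 1.5) are borrowed from the FAQ later in this guide because Question 1 lists no options here, and the "occasional backup with STS" case is not modelled.

```python
from dataclasses import dataclass


@dataclass
class ProjectInputs:
    scr: float               # short circuit ratio at the PCC (Question 1)
    needs_black_start: bool  # black start or sustained islanding (Question 2)
    ibr_penetration: float   # inverter-based share of generation, 0.0 to 1.0 (Question 3)
    mandate_active: bool     # a grid forming mandate applies (Question 4)
    horizon_years: int       # planned project life (Question 5)


def recommend_control_mode(p: ProjectInputs) -> str:
    """Return 'grid forming', 'hybrid', or 'grid following' as a first screen."""
    # Hard requirements: any one of these points to grid forming.
    if p.needs_black_start or p.mandate_active:
        return "grid forming"
    if p.scr < 1.5 or p.ibr_penetration > 0.70:
        return "grid forming"
    # Middle ground: marginal grid strength, 50 to 70% IBR, or a long horizon.
    if p.scr < 3.0 or p.ibr_penetration >= 0.50 or p.horizon_years >= 10:
        return "hybrid"
    # Strong grid, no islanding, short horizon.
    return "grid following"


print(recommend_control_mode(ProjectInputs(8.0, False, 0.30, False, 5)))
```

A result from a screen like this would still need to be confirmed by a site-specific study before it goes into a specification.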

- Grid Following BESS: The PLL Control Architecture

- Grid Following BESS: Key Strengths on Strong Grids

- Grid Following BESS: The Fundamental Limitation

- Grid Forming BESS: The Voltage-Source Architecture

- Unique Stability Capabilities of Grid Forming BESS

- Grid Forming BESS: Three Control Strategies Explained

- Grid Forming vs Grid Following BESS — 10-Dimension Head-to-Head Table

- Grid Forming vs Grid Following BESS — EPFL Campus Study Results

- Western Downs Battery: Grid Forming Upgrade Proven at 540 MW Scale

- What the Performance Data Means for Your Grid Forming vs Grid Following BESS Decision

- Why the Grid Forming BESS Cost Premium Is Shrinking in 2025

- Grid Forming vs Grid Following BESS: 10-Year Financial Summary

- Profile 1 — Grid Following BESS for C&I Peak Shaving & Demand Reduction

- Profile 2 — Grid Following BESS for Solar-Plus-Storage

- Profile 3 — Grid Following BESS for Fast Frequency Response Markets

- Profile 4 — Grid Following BESS for Capacity Market Participation

- Profile 5 — Grid Following BESS for Time-of-Use Energy Arbitrage

- Profile 1 — Grid Forming BESS for Weak Grid and Remote Industrial Sites

- Profile 2 — Grid Forming BESS for Island Microgrids and Off-Grid Systems

- Profile 3 — Grid Forming BESS for Black Start Requirements

- Profile 4 — Grid Forming BESS for High-IBR Grid Zones

- Profile 5 — Grid Forming BESS for Stability Market Revenue

- How a Hybrid Grid Forming and Grid Following BESS Architecture Works

- What the Hybrid Grid Forming and Grid Following BESS System Delivers

- Seamless Mode Switching Between Grid Forming and Grid Following BESS

- United States — MISO Grid Forming BESS Mandate (November 2024)

- Europe — EU Grid Forming BESS Rule from 2026

- Australia — Grid Forming BESS Is Now the Industry Default

- United Kingdom — Grid Forming BESS and the Stability Pathfinder

- What is the main difference between grid forming and grid following BESS?

- Can a grid following BESS be upgraded to grid forming later?

- What SCR does a grid following BESS need to work safely?

- Is grid forming BESS now required by regulation in some markets?

- Is grid forming BESS always better than grid following BESS?

- What happens if you use a grid following BESS on a weak grid?

- Related Articles on Sunlith Energy

- External References

Grid Following BESS: The PLL Control Architecture

A grid following BESS inverter acts as a controlled current source. Its job is to inject active power and reactive power into the grid at the exact voltage and frequency already running there. To do this, it relies on a Phase-Locked Loop (PLL). The PLL reads the grid voltage, frequency, and phase angle at the Point of Common Coupling thousands of times per second — then locks the inverter’s internal reference to that signal. Because of this, the inverter follows the grid rather than setting it.
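To make the PLL idea concrete, here is a minimal simulation sketch of a synchronous-reference-frame PLL tracking an ideal, balanced grid voltage. It is a textbook-style loop, not any vendor's firmware. The gains `kp` and `ki`, the time step, and the 50.2 Hz test frequency are all assumed values chosen for illustration.

```python
import math


def run_pll(grid_hz=50.2, nominal_hz=50.0, phase_offset=0.5,
            kp=100.0, ki=5000.0, dt=1e-4, duration=0.5):
    """Track an ideal grid voltage and return the estimated frequency in Hz."""
    omega_nom = 2.0 * math.pi * nominal_hz
    theta = 0.0      # PLL's estimate of the grid phase angle (rad)
    integral = 0.0   # integrator state of the PI loop filter (rad/s)
    omega = omega_nom
    for n in range(int(duration / dt)):
        t = n * dt
        grid_angle = 2.0 * math.pi * grid_hz * t + phase_offset
        # Measured grid voltage in the stationary alpha/beta frame (1 pu amplitude).
        v_alpha = math.cos(grid_angle)
        v_beta = math.sin(grid_angle)
        # Park transform using the estimated angle: v_q = sin(grid_angle - theta).
        v_q = -v_alpha * math.sin(theta) + v_beta * math.cos(theta)
        # The PI loop filter drives v_q to zero by adjusting the estimated frequency.
        integral += ki * v_q * dt
        omega = omega_nom + kp * v_q + integral
        theta = (theta + omega * dt) % (2.0 * math.pi)
    return omega / (2.0 * math.pi)


print(round(run_pll(), 3))
```

Note that the loop only works because `v_alpha` and `v_beta` exist to be measured. With no grid voltage there is no error signal, which is the limitation the next sections describe.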

Grid Following BESS: Key Strengths on Strong Grids

Grid following is the dominant technology today: about 80% of BESS installations worldwide use this architecture. It is mature, cost-effective, and well-suited to strong-grid environments with a Short Circuit Ratio above 3. Peak demand charge reduction, time-of-use arbitrage, fast frequency response, and solar self-consumption are all well within its capabilities on a strong urban grid.

Grid Following BESS: The Fundamental Limitation

The core limit is simple: a grid following inverter needs the grid to exist. Without a stable voltage reference, the PLL has nothing to lock to. As a result, a grid following BESS cannot black-start a dead network — and it cannot sustain an islanded microgrid on its own.

For a complete technical breakdown, read our comprehensive guide to grid-following BESS.

Grid Forming BESS: The Voltage-Source Architecture

A grid forming BESS inverter acts as a controlled voltage source. Rather than reading and copying the grid signal, it synthesises its own voltage and frequency internally. Everything else on the network — other inverters, loads, generators — synchronises to the grid forming inverter. Because of this fundamental reversal, the inverter can operate with no external grid signal at all.

Unique Stability Capabilities of Grid Forming BESS

Black start, sustained islanding, synthetic inertia, and meaningful fault current contribution are all grid forming only capabilities. None are available from a standard grid following BESS. In Australia, 1,070 MW of grid forming BESS technology is already operating across ten sites as of mid-2025, according to AEMO.

Grid Forming BESS: Three Control Strategies Explained

Three main strategies power grid forming inverters commercially today. Droop control mimics a synchronous generator’s governor — the simplest and most widely deployed approach. Virtual Synchronous Generator (VSG) explicitly emulates inertial response and reacts to both frequency deviation and Rate of Change of Frequency (ROCOF). Power Synchronisation Control (PSC) is the most advanced option, using active power as the sync signal rather than frequency — the most stable choice at very low SCR values below 1.5.
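As a rough illustration of droop control, the sketch below computes the steady-state frequency and load sharing of several grid forming units that share one islanded bus. It assumes each unit follows a linear droop with zero power setpoint at nominal frequency. The function name, the 5% droop setting, and the unit ratings are hypothetical.

```python
def droop_steady_state(load_mw, units, f_nom=50.0):
    """Steady-state frequency and per-unit output for droop-controlled units.

    units: list of (rating_mw, droop_fraction) tuples, e.g. (1.0, 0.05) for
    a 1 MW unit whose frequency falls 5% between no load and full load.
    """
    # Each unit supplies rating / droop MW per 1.0 pu of frequency deviation.
    stiffness = sum(rating / droop for rating, droop in units)
    df_pu = load_mw / stiffness                  # common frequency deviation (pu)
    frequency = f_nom * (1.0 - df_pu)
    shares = [df_pu * rating / droop for rating, droop in units]
    return frequency, shares


freq, shares = droop_steady_state(1.0, [(1.0, 0.05), (1.0, 0.05)])
print(freq, shares)
```

With equal droop settings the units share load in proportion to their ratings, without any communication between them. That is the main reason droop remains the simplest option to deploy.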

Grid Forming vs Grid Following BESS — 10-Dimension Head-to-Head Table

Use the table below for engineering evaluations and procurement decisions. It covers the ten dimensions that matter most when choosing between grid forming and grid following BESS.

| Dimension | Grid Following BESS (GFL) | Grid Forming BESS (GFM) |

|---|---|---|

| Inverter behaviour | Controlled current source | Controlled voltage source |

| Synchronisation | PLL locks to grid voltage and frequency | Internal oscillator — no external reference |

| Requires grid to operate? | Yes — needs stable voltage reference | No — creates its own reference |

| Black start | None | Full black start capability |

| Sustained islanding | No | Yes — while battery has energy |

| Synthetic inertia | Limited — indirect only | Native — instantaneous ROCOF response |

| Frequency response | 200–500 ms (droop-based) | < 20 ms (voltage-source response) |

| Minimum SCR at PCC | SCR ≥ 3; unstable below 1.5 | Stable at SCR < 1.5; tested at SCR 1.0 |

| Fault current | Very limited | Significant — supports protection coordination |

| Cost vs baseline | Baseline | 0–20% premium (shrinking in 2025) |

Grid Forming vs Grid Following BESS — EPFL Campus Study Results

Real-world data from independent research confirms the performance difference between grid forming and grid following BESS. The most rigorous comparison to date used a 720 kVA / 500 kWh BESS on the EPFL campus in Switzerland. Researchers ran both control modes on identical hardware. The result was clear: grid forming outperformed grid following on every frequency regulation metric tested.

Specifically, the grid forming inverter arrested frequency deviations before they reached protection relay trip thresholds. By contrast, the grid following inverter could only respond after the deviation was already measurable. In low-inertia conditions, those extra milliseconds compound quickly and can cause cascading failures.

Source: EPFL — Performance Assessment of Grid-Forming and Grid-Following BESS on Frequency Regulation in Low-Inertia Power Grids (arXiv, 2021)
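To see why milliseconds matter in low-inertia conditions, the sketch below uses the standard swing-equation approximation for the initial rate of change of frequency. It then estimates how far frequency moves before a response arrives. The 10% power imbalance and the inertia constant H = 2 s are assumed example values, not figures from the EPFL study. The 20 ms and 500 ms delays mirror the response times in the comparison table above.

```python
def rocof_hz_per_s(power_imbalance_pu, inertia_h_s, f_nom=50.0):
    """Initial ROCOF magnitude from the swing equation: f0 * dP / (2 * H)."""
    return f_nom * power_imbalance_pu / (2.0 * inertia_h_s)


def deviation_before_response(power_imbalance_pu, inertia_h_s, delay_s, f_nom=50.0):
    """Frequency deviation accumulated before any response, assuming constant ROCOF."""
    return rocof_hz_per_s(power_imbalance_pu, inertia_h_s, f_nom) * delay_s


for delay in (0.02, 0.5):
    dev = deviation_before_response(0.10, 2.0, delay)
    print(f"delay {delay * 1000:.0f} ms -> {dev:.3f} Hz")
```

The constant-ROCOF assumption ignores load damping and other responses, so treat the result as an upper-bound illustration of the timing effect rather than a prediction.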

Western Downs Battery: Grid Forming Upgrade Proven at 540 MW Scale

At utility scale, the Western Downs Battery in Queensland was upgraded from grid following to grid forming in March 2025. The upgrade used firmware changes, not new hardware. Afterwards, AEMO confirmed measurable system strength improvements in the surrounding network, with voltage recovery during Fault Ride-Through events occurring within 300 ms under grid forming control.

Source: ARENA — Australia’s Grid-Forming Battery Revolution, November 2025

What the Performance Data Means for Your Grid Forming vs Grid Following BESS Decision

On strong grids with SCR above 5, the performance gap between grid forming and grid following BESS narrows considerably. For pure peak shaving or energy arbitrage on a strong urban grid, grid following performance is completely adequate. The extra cost of grid forming is not recovered through performance gains in that scenario.

However, in weak or high-IBR grids, grid forming outperforms grid following on every stability metric that matters — exactly the conditions the EPFL and Western Downs data reflect.

Engineering rule: The question is not which is better overall. It is which is better for this specific grid, at this specific node, for these specific services.

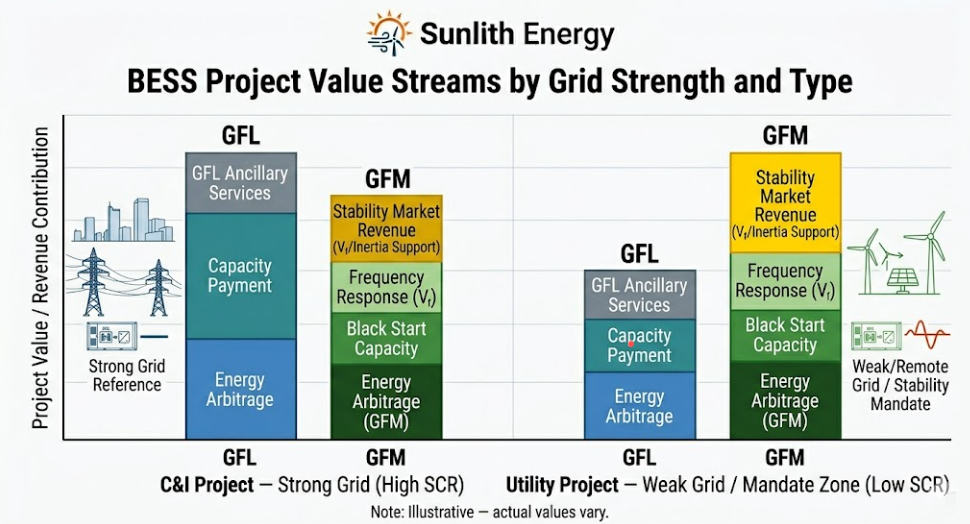

Why the Grid Forming BESS Cost Premium Is Shrinking in 2025

Three factors are compressing the cost gap between grid forming and grid following BESS. First, firmware upgrades now unlock grid forming on existing grid following hardware — exactly as the Western Downs Battery proved in March 2025. Second, manufacturing volume is driving inverter costs down broadly. Third, grid forming BESS earns revenue from stability markets that grid following cannot access.

Modo Energy’s September 2025 analysis of Australia’s NEM found no real cost difference between grid forming and grid following in that market. Meanwhile, National Grid’s Stability Pathfinder programme pays specifically for synthetic inertia and system strength — both grid forming only capabilities. Over a 10-year project life, those payments more than recover any upfront premium in mandate-affected markets.

Grid Forming vs Grid Following BESS: 10-Year Financial Summary

| Cost Factor | Grid Following BESS | Grid Forming BESS |

|---|---|---|

| Upfront capex premium | Baseline | 0–20% (market-dependent; shrinking) |

| Commissioning | Standard | Higher — grid forming tuning required |

| Stability market revenue | None | Significant in UK, Australia, Germany |

| Firmware upgrade path | Available on most modern PCS | Native from Day 1 |

| 10-year value — strong grid C&I | Higher net return | Lower unless stability revenue applies |

| 10-year value — weak grid / utility | Lower (mandate risk) | Higher in mandate-affected markets |

For detailed financial modelling, read our C&I BESS economics and ROI breakdown.

Grid following BESS is the right choice for most projects today. Below are the five scenarios where it delivers the strongest return on investment.

Profile 1 — Grid Following BESS for C&I Peak Shaving & Demand Reduction

Manufacturing facilities, data centres, and logistics hubs on strong urban grids (SCR typically 5 to 20) are ideal for grid following BESS. A well-configured Energy Management System dispatches the battery in real time to prevent demand charge spikes, cutting bills by 30 to 40%. Add a Static Transfer Switch and the same system also delivers seamless backup power.

See also: benefits of C&I BESS for manufacturing facilities.
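A threshold-based dispatch loop is one simple way to picture what the EMS does for peak shaving. The sketch below is a deliberately simplified illustration: it ignores round-trip efficiency, forecasting, and tariff details. The function name, the 15-minute interval, and all numeric values are assumptions.

```python
def peak_shave(load_kw, threshold_kw, capacity_kwh, power_kw, dt_h=0.25):
    """Return the grid import profile after battery dispatch.

    Discharges when load exceeds the threshold and recharges below it,
    limited by inverter power and by the energy left in the battery.
    """
    soc_kwh = capacity_kwh  # start fully charged
    grid_kw = []
    for load in load_kw:
        if load > threshold_kw:
            discharge = min(load - threshold_kw, power_kw, soc_kwh / dt_h)
            soc_kwh -= discharge * dt_h
            grid_kw.append(load - discharge)
        else:
            # Recharge only up to the threshold so charging never creates a new peak.
            charge = min(threshold_kw - load, power_kw,
                         (capacity_kwh - soc_kwh) / dt_h)
            soc_kwh += charge * dt_h
            grid_kw.append(load + charge)
    return grid_kw


profile = peak_shave([400, 600, 700, 400], threshold_kw=500,
                     capacity_kwh=100, power_kw=250)
print(profile, max(profile))
```

In this example the 700 kW site peak is held to 500 kW of grid import. The actual bill saving depends on the tariff structure and on how well the battery is sized against the load shape.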

Profile 2 — Grid Following BESS for Solar-Plus-Storage

In grid-connected solar-plus-storage systems, the utility grid provides the AC voltage reference. The solar PV inverter and the grid following BESS inverter both lock to that reference and run in parallel, with the BESS absorbing surplus solar generation and discharging when output falls. This is a well-proven configuration deployed across thousands of sites globally.

Profile 3 — Grid Following BESS for Fast Frequency Response Markets

A grid following inverter detects frequency deviation via the PLL and responds within 200 to 500 milliseconds. That is well within the threshold for FFR products in most grid codes. As a result, grid following BESS is fully eligible and actively operating in FFR markets in Great Britain, Australia, Ireland, and the United States.

Profile 4 — Grid Following BESS for Capacity Market Participation

Grid following BESS can provide committed MW capacity through auctions in the UK, US, and Australia. Combined with energy arbitrage strategies and FFR, capacity payments create a strong multi-revenue stack without requiring grid forming capabilities.

Profile 5 — Grid Following BESS for Time-of-Use Energy Arbitrage

In liquid spot markets — ERCOT, Australia’s NEM, GB day-ahead — significant arbitrage value comes purely from charge and discharge timing. A well-configured Battery Management System and EMS handle this automatically. Grid following is the lower-cost, right-fit choice for this application.

When do you need a grid forming BESS?

A grid forming BESS is technically required or recommended over a grid following system in the following scenarios:

- Weak Grid Integration: When the Short Circuit Ratio (SCR) at the Point of Common Coupling (PCC) falls below 2.0, and especially when it falls below 1.5.

- Island Microgrids: For remote, off-grid systems that have no utility grid to provide a voltage reference.

- Black Start Capability: When the battery system must independently energise a completely dead network.

- High Renewable Penetration: In grid zones where inverter-based resource (IBR) penetration exceeds 60% to 70%.

- Stability Market Revenue: To participate in specialised grid services like synthetic inertia and system strength contracts.

Grid forming BESS is not optional in these scenarios. In each case it is technically required or the only viable choice. Here is the detail behind each one.

Profile 1 — Grid Forming BESS for Weak Grid and Remote Industrial Sites

Grid following inverters typically become unstable when the SCR at the PCC falls below 2. In fact, dropping below SCR 1.5 risks triggering sub-synchronous oscillations if multiple grid following units run in parallel — a real engineering risk at remote mining operations, oil and gas facilities, and industrial sites on long radial feeders. For a full breakdown of why this happens, read our comprehensive guide to grid-following BESS stability.

Profile 2 — Grid Forming BESS for Island Microgrids and Off-Grid Systems

An islanded microgrid has no utility grid to provide a voltage reference — so a grid following inverter cannot operate on its own there. The grid forming BESS becomes the grid itself. It creates and holds the voltage and frequency reference that all other devices synchronise to.

Profile 3 — Grid Forming BESS for Black Start Requirements

A grid following inverter cannot energise a dead network. A grid forming inverter can. For any project where black start is a design requirement, whether contractual, regulatory, or operational, grid forming is the only technology that delivers this capability. No grid following workaround exists for this requirement; even a hybrid fleet relies on its grid forming units to black start.

Profile 4 — Grid Forming BESS for High-IBR Grid Zones

As renewable penetration rises above 60 to 70%, grid following inverters in aggregate no longer have a stable signal to lock to without grid forming support. The April 2025 Iberian blackout was a direct consequence of this imbalance. Grid forming BESS, combined with a well-specified Power Conversion System, is the primary technical response.

Profile 5 — Grid Forming BESS for Stability Market Revenue

Among battery inverter technologies, only grid forming is eligible for stability market contracts such as synthetic inertia in the UK Stability Pathfinder, System Strength services in Australia’s NEM, and fast FCAS premiums. For a BESS, these revenue streams are grid forming only. If your business model includes stability market products, grid forming is not an optional upgrade. It is the core product.

How a Hybrid Grid Forming and Grid Following BESS Architecture Works

The choice between grid forming vs grid following BESS is increasingly not a binary one. Modern inverter platforms support both control modes in the same hardware, with automatic switching between them.

In a typical hybrid design, 20 to 30% of the BESS units operate in grid forming mode. These units establish and hold the voltage and frequency reference for the whole site. The remaining units run in grid following mode against that reference — maximising total output at lower average cost than an all-grid forming fleet.

When the utility grid is strong, the grid forming units benefit from additional system strength. Should the grid weaken or disconnect, those units hold the microgrid reference autonomously. The grid following units simply continue to follow that reference, unaware that the utility has gone.

What the Hybrid Grid Forming and Grid Following BESS System Delivers

- Lower average cost than specifying all units in grid forming mode

- Full black start capability from the grid forming anchor units

- Seamless islanding with no manual intervention needed

- Stable operation at low SCR where an all-GFL system would oscillate

- Future-proofing — grid forming firmware is already on the hardware, ready when mandates arrive

Seamless Mode Switching Between Grid Forming and Grid Following BESS

Hitachi Energy’s patent filings (WO2024193866A1 and WO2024193867A1) describe supervisory control that switches individual inverter units between VSG (grid forming) and PLL (grid following) modes automatically — based on real-time voltage thresholds — without interrupting power delivery. This is production firmware, not experimental technology.

Sunlith Energy recommendation: For any new BESS project above 5 MW, specify PCS hardware with grid forming firmware capability regardless of Day 1 operating mode. The option value vastly exceeds its marginal cost.

Regulatory requirements for grid forming vs grid following BESS changed substantially in 2024 and 2025. Here is the current status across four key markets.

United States — MISO Grid Forming BESS Mandate (November 2024)

MISO finalised grid forming BESS performance requirements in November 2024. New stand-alone BESS systems seeking interconnection in MISO territory must demonstrate synthetic inertia emulation, fast frequency response, and minimum short-circuit current contribution. Grid following only systems do not meet these requirements.

Source: MISO GFM BESS Performance Requirements Whitepaper, July 2024

Europe — EU Grid Forming BESS Rule from 2026

In November 2025, ENTSO-E and key national regulators announced that all new storage projects above 1 MW must carry grid forming capability from 2026. Germany’s Bundesnetzagentur, France’s RTE, and Spain’s REE all signalled fast-track implementation timelines following the April 2025 Iberian blackout.

Source: ESS News — Europe Moves to Mandate Grid-Forming for New Storage Over 1 MW, November 2025

Australia — Grid Forming BESS Is Now the Industry Default

Australia has no formal mandate, but AEMO’s market design has made grid forming BESS the standard for new large-scale projects. Over 1,070 MW is already operating across ten sites. Modo Energy confirms that Australian developers now treat grid forming as a standard specification rather than an optional upgrade.

Source: ARENA — Australia’s Grid-Forming Battery Revolution, November 2025

United Kingdom — Grid Forming BESS and the Stability Pathfinder

National Grid ESO’s Stability Pathfinder issues multi-year contracts for synthetic inertia and system strength — both grid forming only capabilities. Grid code updates under Engineering Recommendation G99 are underway to formally require grid forming performance specifications.

See also: UL 9540 and IEC certification standards for BESS.

At Sunlith Energy, the grid forming vs grid following BESS decision is an engineering analysis on every project — never a default. Our four-step process ensures every system is specified correctly.

- SCR Analysis — We measure or obtain the SCR at the Point of Common Coupling before writing any specification. This single number anchors the grid forming vs grid following BESS recommendation.

- Revenue Stack Assessment — We model the full value stack — demand charge reduction, arbitrage, FFR, capacity markets, backup power, solar self-consumption, and stability market products. This determines whether grid forming’s cost premium is recovered through incremental revenue.

- Regulatory and Horizon Review — We check the applicable grid code, interconnection requirements, and announced mandates. For projects with a 10-year or longer horizon in MISO, Europe, or Australia, grid forming firmware capability is specified as standard.

- PCS Hardware Specification — We select Power Conversion System hardware from manufacturers that support both grid forming and grid following firmware. This gives the system full flexibility to adapt over its lifetime without hardware replacement.

Related reading: how BMS and EMS work together in a BESS system and our Battery Management System explainer.

What is the main difference between grid forming and grid following BESS?

Grid following BESS reads the grid’s existing voltage and frequency and injects current to match it — it follows the grid. Grid forming BESS synthesises its own voltage and frequency reference internally — it forms the grid. The key result is that grid following needs a strong external grid to operate stably, while grid forming can function with no grid signal at all.

Can a grid following BESS be upgraded to grid forming later?

Yes, in many cases. Australia’s Western Downs Battery proves this at 540 MW scale: the 2025 upgrade used firmware changes, not new hardware. However, not all inverters support grid forming control at the firmware level. When specifying new hardware, always confirm grid forming firmware availability with your PCS manufacturer.

What SCR does a grid following BESS need to work safely?

A minimum SCR of 3 at the PCC is the standard engineering threshold for grid following BESS. A formal stability study becomes mandatory once the system drops below SCR 2. If the node falls past SCR 1.5, specifying a grid forming BESS is strongly recommended. At or below SCR 1.0, a grid forming system using Power Synchronisation Control (PSC) is your only viable option.
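The SCR thresholds in this answer can be written down as a small helper. The sketch assumes the usual definition of SCR as the short-circuit level at the PCC (in MVA) divided by the rated power of the BESS (in MW). The function names and the example fault levels are hypothetical.

```python
def short_circuit_ratio(fault_level_mva, bess_rating_mw):
    """SCR at the PCC: grid short-circuit level divided by BESS rated power."""
    return fault_level_mva / bess_rating_mw


def classify_scr(scr):
    """Map an SCR value to the guidance given in the answer above."""
    if scr >= 3.0:
        return "grid following meets the standard threshold"
    if scr >= 2.0:
        return "below the standard threshold for grid following"
    if scr >= 1.5:
        return "formal stability study required"
    if scr > 1.0:
        return "grid forming strongly recommended"
    return "grid forming with PSC"


for fault_mva, rating_mw in ((500.0, 50.0), (90.0, 50.0), (45.0, 50.0)):
    scr = short_circuit_ratio(fault_mva, rating_mw)
    print(f"SCR {scr:.1f}: {classify_scr(scr)}")
```

Because SCR depends on the rating of the plant being connected, the same node can be strong for a small C&I system and weak for a large utility-scale one.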

Is grid forming BESS now required by regulation in some markets?

Yes. MISO finalised grid forming requirements for new BESS interconnection in November 2024. Europe announced the 1 MW+ grid forming rule for 2026. Australia’s AEMO has made grid forming the de facto standard for new large-scale BESS. Developers in these markets should treat grid forming firmware as a baseline specification.

Is grid forming BESS always better than grid following BESS?

No. On strong grids with SCR above 3 and adequate synchronous generation, grid following BESS performs excellently for peak shaving, arbitrage, and FFR. The additional capabilities of grid forming add no commercial value at a well-connected C&I site. Grid forming is better where it is needed; grid following is the right choice where grid strength is not a constraint.

What happens if you use a grid following BESS on a weak grid?

Below SCR 3, grid following inverters begin to show PLL instability. Below SCR 1.5, multiple units in parallel can enter sub-synchronous oscillations — a condition that can cascade into protection trips across the network. The April 2025 Iberian blackout demonstrated exactly this failure mode at grid scale.

The grid forming vs grid following BESS decision now carries regulatory deadlines, financial consequences, and grid-safety implications. After the April 2025 Iberian blackout, MISO’s November 2024 mandate, Europe’s 2026 rule, and Australia’s operational scale-up past 1,000 MW of grid forming BESS, this is not a decision any project developer can treat as an afterthought.

For most C&I projects on strong grids today, grid following BESS delivers faster payback, lower upfront capital, and all the commercial capabilities the project needs. For weak grids, remote sites, black start applications, high-IBR zones, and stability market participation, grid forming BESS is the technically correct — and increasingly regulatory-required — choice. For utility-scale projects above 5 MW with a long horizon, the hybrid architecture gives both capabilities at the lowest combined cost.

At Sunlith Energy, every project starts with an SCR analysis and a revenue stack model. The right grid forming vs grid following BESS specification follows from that analysis — not from a default catalogue choice.

Related Articles on Sunlith Energy

- BESS Grid-Following (GFL): Complete Guide

- BESS Grid-Forming Technology: The Architecture Stabilising Tomorrow’s Grid

- The Role of Static Transfer Switch (STS) in C&I BESS

- How C&I BESS Peak Shaving Lowers Demand Charges

- How EMS Enables Advanced Grid Services Through BESS

- Power Conversion System (PCS): The Heart of a BESS

- C&I BESS Economics and ROI: Full Breakdown

- How C&I BESS Enhances Solar and Wind Power Integration

- Battery Management System (BMS) Explained

- UL 9540 and IEC Standards Compliance for BESS

- Benefits of C&I BESS for Manufacturing Facilities

- BMS vs EMS: Understanding the Control Layers in BESS

External References

- EPFL — Performance Assessment of Grid-Forming and Grid-Following BESS (arXiv, 2021)

- MISO — GFM BESS Performance Requirements Whitepaper, July 2024

- ARENA — Australia’s Grid-Forming Battery Revolution, November 2025

- Modo Energy — The Rise of Grid-Forming Batteries in the NEM, September 2025

- ESS News — Europe Moves to Mandate Grid-Forming for New Storage Over 1 MW, November 2025

- National Grid ESO — Stability Pathfinder Programme

- IEEE Standard 2800-2022 — Interconnection Requirements for IBRs

- ENTSO-E — Network Code on Requirements for Grid Connection of Generators

- NREL — Grid Integration of Battery Storage Research

- IEA — Batteries and Secure Energy Transitions Report

- BloombergNEF — Energy Storage Market Outlook

BESS Communication Protocols: The Complete 2026 Guide

What Are BESS Communication Protocols?

BESS communication protocols are the rules that let every part of a battery storage system share data.

Without them, batteries, inverters, and grid systems cannot work together.

Each device in a BESS speaks a different digital language. But a shared protocol gives them a common way to talk.

For example, the battery typically uses CAN Bus internally, the inverter often uses Modbus, and the grid interface often uses IEC 61850.

Choosing the right BESS communication protocols matters a lot. A bad choice leads to slow integration, poor performance, and higher costs.

Why BESS Communication Protocols Affect System Safety

Speed is critical in a BESS. A fault signal must reach the controller in milliseconds. So the protocol must be fast enough to carry it in time.

Also, the protocol must be reliable. If a message is lost, the system may not shut down safely. Therefore, engineers choose protocols based on both speed and reliability.

In addition, some protocols are secure by design. Others, however, have no built-in encryption. As a result, security must be added at the network level for older protocols.

For more background, see our guides on the Battery Management System (BMS), the Power Conversion System (PCS), and the Energy Management System (EMS).

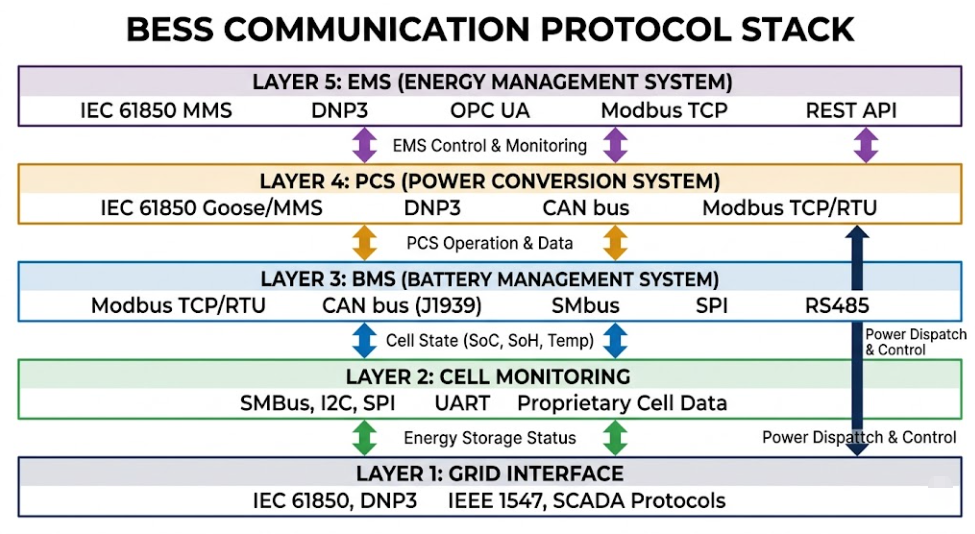

The Five Layers of BESS Communication Protocols

BESS communication protocols work across five system layers. Each layer has different speed needs and data types. So understanding these layers helps you pick the right protocol at each level.

| Layer | Component | Common Protocols |

|---|---|---|

| 1 — Cell | Battery cells, modules, BMUs | CAN Bus, SMBus |

| 2 — BMS | Battery Management System | Modbus RTU, CAN Bus, RS-485 |

| 3 — PCS | Power Conversion System / Inverter | Modbus TCP, CAN Bus, PROFINET, EtherNet/IP |

| 4 — EMS | Energy Management System | Modbus TCP, OPC UA, MQTT, IEC 60870-5-104 |

| 5 — Grid | Utility / SCADA / Cloud | IEC 61850, DNP3, IEEE 2030.5, MQTT, REST |

No single protocol covers all five layers. So most BESS projects use three or four protocols together.

As a result, a protocol gateway is almost always part of a real BESS design. We cover this in detail later.

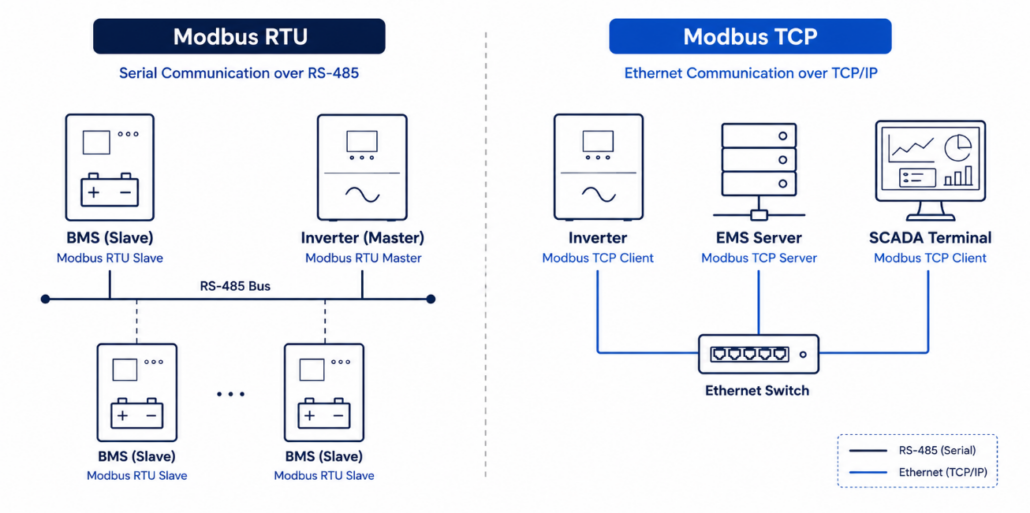

1. Modbus — The Most Widely Used BESS Communication Protocol

Modbus is the most common BESS communication protocol in the world. It was developed in 1979, but it is still used in almost every BESS project today.

So why is it so popular? Because it is simple, cheap, and works with every BESS hardware vendor.

How Modbus Works as a BESS Communication Protocol

Modbus uses a master-slave model. One master — usually the EMS — sends a request to a slave device such as the BMS. The slave then replies with its data.

There are two forms. First, Modbus RTU sends binary data over an RS-485 serial cable. Second, Modbus TCP sends the same data over a standard Ethernet network. As a result, Modbus TCP works across a local area network or even the internet.

In a BESS, Modbus TCP links the BMS to the EMS and SCADA systems. So it is how most BESS assets respond to grid operator commands.
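The request and reply exchange described above can be shown at the byte level. The sketch below builds a Modbus TCP "read holding registers" request (function code 0x03) and parses the matching response using only the standard library. The register address, the quantity, and the sample values are made up for illustration; real register maps come from each vendor's documentation.

```python
import struct

READ_HOLDING_REGISTERS = 0x03


def build_read_request(transaction_id, unit_id, start_address, quantity):
    """Build a Modbus TCP frame: 7-byte MBAP header followed by the PDU."""
    pdu = struct.pack(">BHH", READ_HOLDING_REGISTERS, start_address, quantity)
    # MBAP: transaction id, protocol id (always 0), length of unit id + PDU, unit id.
    mbap = struct.pack(">HHHB", transaction_id, 0, len(pdu) + 1, unit_id)
    return mbap + pdu


def parse_read_response(frame):
    """Return the list of 16-bit register values from a response frame."""
    function = frame[7]
    if function & 0x80:
        # The slave sets the top bit of the function code to signal an exception.
        raise ValueError(f"Modbus exception code {frame[8]}")
    byte_count = frame[8]
    return list(struct.unpack(f">{byte_count // 2}H", frame[9:9 + byte_count]))


request = build_read_request(transaction_id=1, unit_id=1, start_address=0, quantity=2)
print(request.hex())

# A hand-built sample response carrying two registers: 7680 and 87.
sample_response = bytes.fromhex("0001000000070103041e000057")
print(parse_read_response(sample_response))
```

Everything in the frame is plain, unauthenticated bytes, which is why the security gaps discussed in the next section have to be closed at the network level.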

Why Modbus Has Limits as a BESS Communication Protocol

Modbus is easy to use, but it does have gaps. For example, it has no built-in security. Also, it uses polling, which adds latency.

However, these gaps are manageable. Engineers add security at the network level. And for most BESS use cases, the polling delay is acceptable.

But Modbus should not be the only protocol on an external BESS interface. For that reason, most projects combine it with a secure protocol like OPC UA or IEEE 2030.5.

| Strengths | Limitations |

|---|---|

| ✓ Works with every BESS hardware vendor | ✗ No built-in encryption or authentication |

| ✓ Simple to set up and easy to debug | ✗ Polling model adds latency |

| ✓ No licence cost | ✗ Limited data model vs IEC 61850 |

| ✓ Runs over RS-485 serial and Ethernet TCP/IP | ✗ Not suitable alone for utility-facing use |

Used for: BMS ↔ EMS, BMS ↔ PCS, SCADA, field instruments

See also: Battery Management System (BMS) Explained | Power Conversion System (PCS) Guide

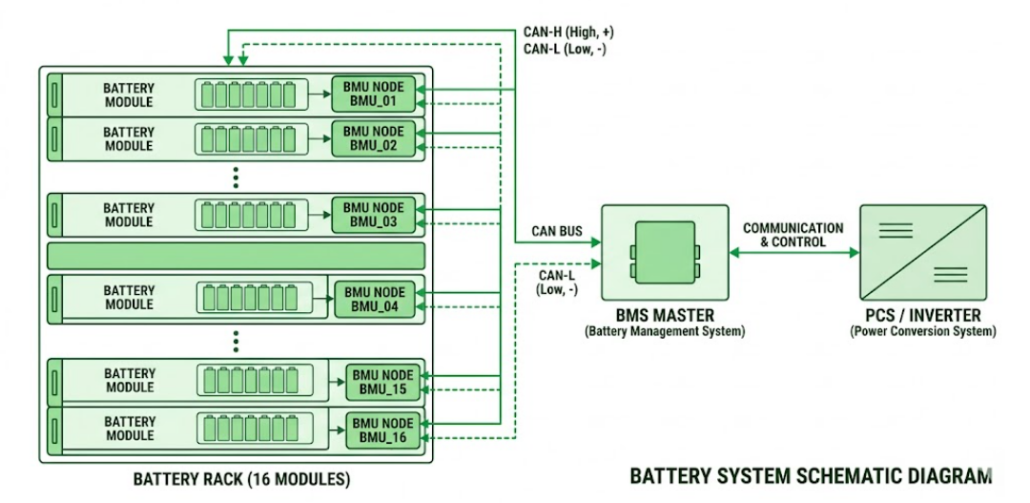

2. CAN Bus — The Internal BESS Communication Protocol

CAN Bus is the backbone of most battery racks. It was built for cars, but it also works well inside BESS enclosures.

In fact, it is now found in products from BYD, CATL, Huawei, Sungrow, and Pylontech. So it has become the standard for internal BESS communication.

Why CAN Bus Suits BESS Internal Communication

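CAN Bus uses a twisted two-wire pair, CAN-H and CAN-L, and signals with the voltage difference between them. Noise from the high-current switching inside a battery cabinet couples onto both wires almost equally, so most of it cancels out at the receiver.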

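Also, CAN Bus is a multi-master system. So every node (modules, BMUs, and the BMS controller) can start sending whenever the bus is idle. If two nodes start at once, the message with the lower identifier wins arbitration and the other node retries. As a result, the system gets real-time updates without waiting to be polled.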
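The multi-master behaviour rests on bitwise arbitration of message identifiers. The sketch below simulates it for standard 11-bit identifiers. The identifier values are hypothetical; real BMS message maps are vendor-specific.

```python
def arbitrate(contending_ids, id_bits=11):
    """Return the identifier that wins bitwise arbitration on a shared CAN bus."""
    contenders = set(contending_ids)
    for bit in range(id_bits - 1, -1, -1):       # identifiers are sent MSB first
        sent = {can_id: (can_id >> bit) & 1 for can_id in contenders}
        bus_level = min(sent.values())            # a dominant 0 overrides any recessive 1
        # Nodes that sent recessive but read dominant back off and retry later.
        contenders = {can_id for can_id in contenders if sent[can_id] == bus_level}
    (winner,) = contenders
    return winner

# Hypothetical identifiers: fault alarm, module voltages, module temperatures
print(hex(arbitrate([0x180, 0x080, 0x280])))  # 0x80
```

Because the lowest identifier always wins, designers give the most urgent messages, such as fault alarms, the lowest identifiers.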

Furthermore, China’s national grid standards require CAN Bus as the BMS-to-inverter link in all utility-scale BESS projects. So it is not just popular — it is often mandatory.

CAN Bus Limits in a BESS System

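CAN Bus is fast, but its range is short. At 1 Mbit/s, the bus can be no longer than about 40 metres. Lower bit rates allow longer runs (roughly 500 metres at 125 kbit/s), but at the cost of throughput. Therefore, CAN Bus is rarely used beyond the battery enclosure.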

However, a gateway solves this. The gateway reads CAN Bus data and then sends it upstream as Modbus TCP, MQTT, or another BESS communication protocol.
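A minimal sketch of the gateway's translation step is shown below. It decodes one CAN frame and repackages it as an MQTT-style topic and JSON payload. The frame identifier, byte layout, scaling factors and topic name are all hypothetical, since every vendor defines its own.

```python
import json
import struct

# Hypothetical frame layout, for illustration only. All fields little-endian.
# Bytes 0-1: state of charge in 0.1 % steps
# Bytes 2-3: pack voltage in 0.1 V steps
# Bytes 4-5: pack current in 0.1 A steps (signed)
# Byte 6:    maximum cell temperature in degrees C (signed)
# Byte 7:    fault bit field
STATUS_FRAME_ID = 0x180
STATUS_LAYOUT = struct.Struct("<HHhbB")

def decode_status(can_id: int, data: bytes) -> dict:
    """Turn the raw 8-byte payload into engineering values."""
    if can_id != STATUS_FRAME_ID or len(data) != STATUS_LAYOUT.size:
        raise ValueError("not a status frame in the assumed layout")
    soc, volts, amps, temp, faults = STATUS_LAYOUT.unpack(data)
    return {
        "soc_pct": soc / 10,
        "pack_voltage_v": volts / 10,
        "pack_current_a": amps / 10,
        "max_cell_temp_c": temp,
        "fault_bits": faults,
    }

def to_mqtt_message(rack: str, values: dict) -> tuple[str, str]:
    """Build the topic and JSON payload a gateway could publish upstream."""
    return f"bess/{rack}/status", json.dumps(values, sort_keys=True)

frame = struct.pack("<HHhbB", 805, 7684, -1250, 31, 0)
topic, payload = to_mqtt_message("rack1", decode_status(0x180, frame))
print(topic, payload)
```

The same decoded dictionary could just as easily fill a Modbus TCP register table. The decoding step is where vendor-specific knowledge lives, which is why gateways are usually configured per battery brand.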

| STRENGTHS | LIMITATIONS |
|---|---|
| ✓ Resists EMI via differential CAN-H / CAN-L signalling | ✗ Short cable range: max 40 m at 1 Mbit/s |
| ✓ Error detection and arbitration built in | ✗ Cannot reach the utility or cloud layer |
| ✓ Real-time, event-driven: no polling needed | ✗ Vendor register maps differ between brands |
| ✓ Used by all major BESS OEMs | ✗ Needs a gateway for EMS or cloud integration |

Used for: Cell ↔ BMU, BMU ↔ BMS Master, BMS ↔ PCS (close-range)

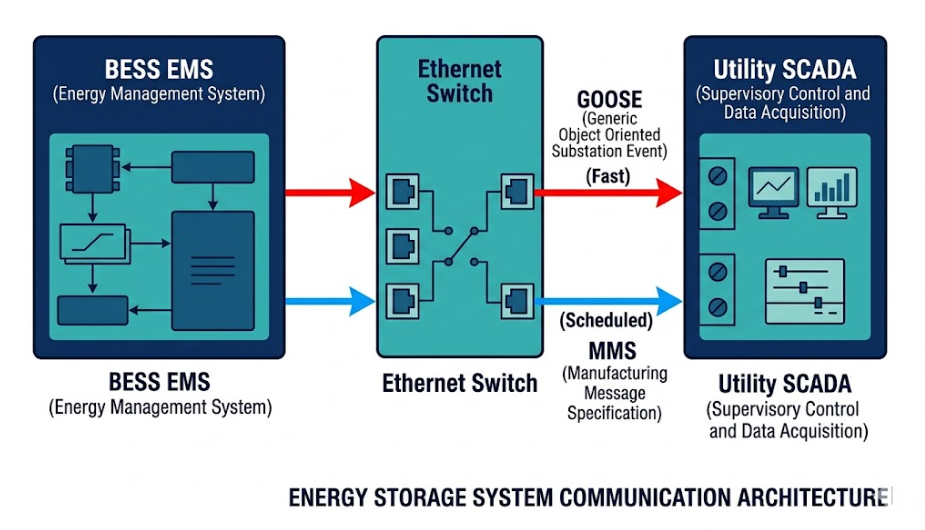

3. IEC 61850 — The Grid-Level BESS Communication Protocol

IEC 61850 is the international standard for substation automation. It is also the leading BESS communication protocol for utility grid connections, especially in Europe and Asia-Pacific.

Unlike Modbus, it defines a full information model, not just a way to move bytes. So any conformant IEC 61850 device can, in principle, talk to any other, no matter the brand.

What Makes IEC 61850 Different

IEC 61850 uses logical nodes and data objects to describe every piece of equipment. As a result, there is no need for custom register mapping between vendors.
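To show what a logical node and data object look like in practice, the sketch below splits an IEC 61850 object reference into its parts. `MMXU` is the standard measurement logical node and `TotW` its total active power data object; the logical device name `BESS1` is a made-up example.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ObjectReference:
    logical_device: str
    logical_node: str
    data_object: str
    attribute_path: tuple

def parse_reference(ref: str) -> ObjectReference:
    """Split 'LogicalDevice/LogicalNode.DataObject.attribute...' into its parts."""
    device, _, rest = ref.partition("/")
    parts = rest.split(".")
    if not device or len(parts) < 2 or not all(parts):
        raise ValueError(f"not a valid object reference: {ref!r}")
    return ObjectReference(device, parts[0], parts[1], tuple(parts[2:]))

ref = parse_reference("BESS1/MMXU1.TotW.mag.f")
print(ref.logical_node)    # MMXU1
print(ref.data_object)     # TotW
print(ref.attribute_path)  # ('mag', 'f')
```

The name itself says what the value is: the floating-point magnitude of total active power from measurement unit 1. With Modbus, the same value would be an unlabelled register number that only the vendor's manual explains.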

Also, IEC 61850-7-420 extends the standard to cover Distributed Energy Resources, including BESS. However, this DER extension is still developing. So some projects use custom mappings alongside the standard.

GOOSE Messaging — Speed That Other BESS Communication Protocols Cannot Match

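GOOSE stands for Generic Object Oriented Substation Event. It multicasts event signals directly over Ethernet and typically delivers them within a few milliseconds. IEC 61850 sets a 3 ms transfer-time target for the fastest trip messages. Therefore, it is used for protection, where a delayed signal could mean a fault goes uncleared.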

MMS (Manufacturing Message Specification), in contrast, handles client-server data exchange, such as reports, setpoints and logs, between the EMS and the utility. Together, GOOSE and MMS give IEC 61850 a range that no other BESS communication protocol can match alone.

When to Specify IEC 61850 for Your BESS

Specify IEC 61850 for utility-scale BESS in Europe, the UK, or Asia-Pacific wherever the grid operator supports it. Many utilities and grid operators now require it for new grid-connected storage assets.

Furthermore, specifying it early avoids costly retrofits. So include it in the EMS and gateway specification from day one.

| STRENGTHS | LIMITATIONS |
|---|---|
| ✓ True multi-vendor interoperability: no register mapping | ✗ Higher engineering cost than Modbus |
| ✓ GOOSE delivers protection events within milliseconds | ✗ DER model (7-420) still maturing |
| ✓ Rich, self-describing data model | ✗ Not all BESS OEMs support it natively |
| ✓ Mandated by EU, UK, and APAC utility operators | ✗ Needs SCL configuration expertise |

Used for: EMS ↔ Utility SCADA, substation automation, protection, VPP

See also: How EMS Enables Advanced Grid Services | BMS vs EMS — Control Layers

4. DNP3 — The North American Utility BESS Communication Protocol

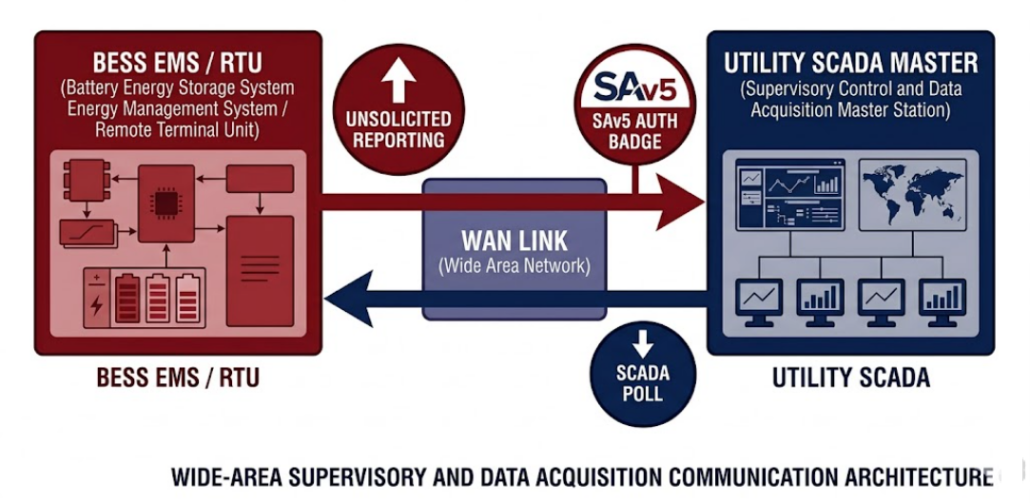

DNP3 is the standard BESS communication protocol for utility SCADA in North America. It is formally specified under IEEE Std 1815 and has been in use since 1993.

So if your BESS connects to a North American utility, you will almost certainly need DNP3.

Why DNP3 Works Well for Remote BESS Sites

DNP3 was built for tough conditions. It works over serial radio links, low-bandwidth WAN, and cellular networks. As a result, it suits remote BESS sites where network quality is poor.

Also, DNP3 supports unsolicited reporting. This means the BESS outstation sends event data on its own when something changes, instead of waiting to be polled. So it uses far less bandwidth than a polling protocol like Modbus.
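The bandwidth saving can be illustrated with a simplified count of messages. The sketch below is not a DNP3 implementation. It only compares polling every sample with reporting when a value moves beyond a deadband, and it ignores confirmations and integrity polls. The sample values and the 0.5 % deadband are assumptions.

```python
def polled_messages(samples) -> int:
    """A polling master fetches every sample: one request plus one response each."""
    return 2 * len(samples)

def unsolicited_messages(samples, deadband: float) -> int:
    """Count reports sent when a value moves more than `deadband` from the last report."""
    reports = 0
    last_reported = None
    for value in samples:
        if last_reported is None or abs(value - last_reported) > deadband:
            reports += 1
            last_reported = value
    return reports

# Hypothetical state-of-charge readings in percent, one per scan interval
soc = [80.0, 80.0, 80.1, 80.1, 80.2, 81.0, 81.0, 81.1, 82.5, 82.5]
print(polled_messages(soc))            # 20
print(unsolicited_messages(soc, 0.5))  # 3
```

The saving grows when values are steady for long periods, which is typical of an idle battery. On a metered cellular link this difference translates directly into data cost.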

Adding Security to DNP3 in BESS Projects

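The base DNP3 standard has no native security. However, Secure Authentication v5 (SAv5) adds a challenge-response layer based on keyed hashes (HMAC). It proves that critical commands come from an authorised master, although it does not encrypt the traffic. This significantly improves protection on any BESS grid link.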
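The general challenge-response pattern can be sketched with Python's standard library. This is an illustration of the idea only. It does not follow the SAv5 message formats or key-update procedures, and the key and command strings are placeholders.

```python
import hashlib
import hmac
import secrets

def make_challenge() -> bytes:
    """The outstation issues fresh random data so old replies cannot be replayed."""
    return secrets.token_bytes(16)

def respond(shared_key: bytes, challenge: bytes, message: bytes) -> bytes:
    """The master proves it knows the key by tagging the challenge and the command."""
    return hmac.new(shared_key, challenge + message, hashlib.sha256).digest()

def verify(shared_key: bytes, challenge: bytes, message: bytes, tag: bytes) -> bool:
    """The outstation recomputes the tag and compares it in constant time."""
    expected = respond(shared_key, challenge, message)
    return hmac.compare_digest(expected, tag)

key = secrets.token_bytes(32)            # placeholder for a pre-shared key
command = b"SET_ACTIVE_POWER_KW=-500"    # placeholder command text
challenge = make_challenge()
tag = respond(key, challenge, command)

print(verify(key, challenge, command, tag))                      # True
print(verify(key, challenge, b"SET_ACTIVE_POWER_KW=+500", tag))  # False
```

The command itself still travels in clear text. The tag only shows that it came from a key holder and was not altered, which is why encryption is added separately where confidentiality matters.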

NERC CIP standards set cyber security requirements, including access control and authentication, for assets that affect the North American bulk electric system. Therefore, SAv5 is now a common requirement in DNP3 BESS specifications.

| STRENGTHS | LIMITATIONS |
|---|---|
| ✓ Reliable over poor network links: serial, radio, cellular | ✗ Less rich data model than IEC 61850 |
| ✓ Unsolicited reporting cuts bandwidth | ✗ Security needs SAv5 as a separate add-on |
| ✓ Leading protocol for North American utility SCADA | ✗ Rarely used outside North America |
| ✓ Timestamped events support accurate fault logging | ✗ Not suited to cloud or IoT use |

Used for: EMS ↔ Utility SCADA, remote BESS, North American grid connections

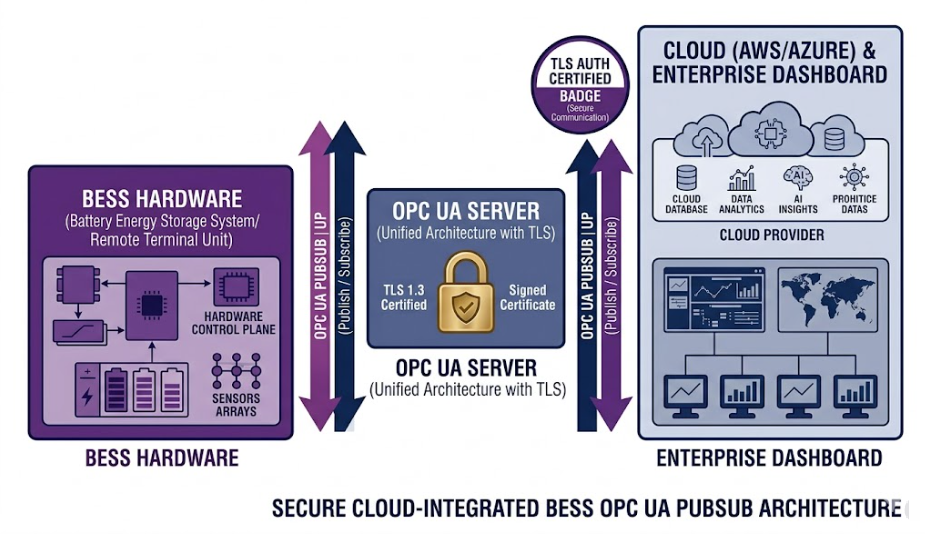

5. OPC UA — The Secure Cloud BESS Communication Protocol

OPC UA connects BESS systems to cloud platforms and enterprise software. It is specified under IEC 62541 and is widely used in industrial IoT deployments.

Unlike older protocols, it is secure by design. So it is a strong choice for any external-facing BESS interface.

How OPC UA Improves on Legacy BESS Communication Protocols

Legacy OPC was Windows-only and had no encryption. OPC UA, however, works on any platform — Linux, Windows, or embedded controllers.

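Also, OPC UA builds security into the protocol itself. Its secure channel supports message signing and encryption, with X.509 certificates to authenticate applications. However, the security mode and certificates still have to be configured, and the unsecured "None" mode should be disabled on any external interface. In addition, it uses a rich object model that represents a full BESS asset in a structured, self-describing format.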

As a result, cloud analytics platforms can ingest BESS data with little custom engineering. So it saves time and reduces integration risk.

Combining OPC UA and IEC 61850 in Large BESS Projects

The best approach for utility-scale BESS is to use both. IEC 61850 handles real-time grid communication. OPC UA, in contrast, carries asset data to cloud analytics and digital twin platforms.

Furthermore, the major cloud platforms offer OPC UA connectors or edge gateways, either directly or through partners. Therefore, OPC UA provides a direct, secure path from the BESS site to cloud tools.

| STRENGTHS | LIMITATIONS |
|---|---|
| ✓ Signing and encryption built in: no add-on protocol needed | ✗ Heavier than MQTT for simple data streams |
| ✓ Works on any platform: Linux, Windows, embedded | ✗ Too complex for small C&I BESS projects |
| ✓ Rich object model for complex BESS data | ✗ Higher engineering cost than Modbus |
| ✓ Connectors available for the major cloud platforms | ✗ Slower to implement than simpler alternatives |

Used for: EMS ↔ Cloud, asset management, digital twins, predictive maintenance

See also: How EMS Enables Advanced Grid Services

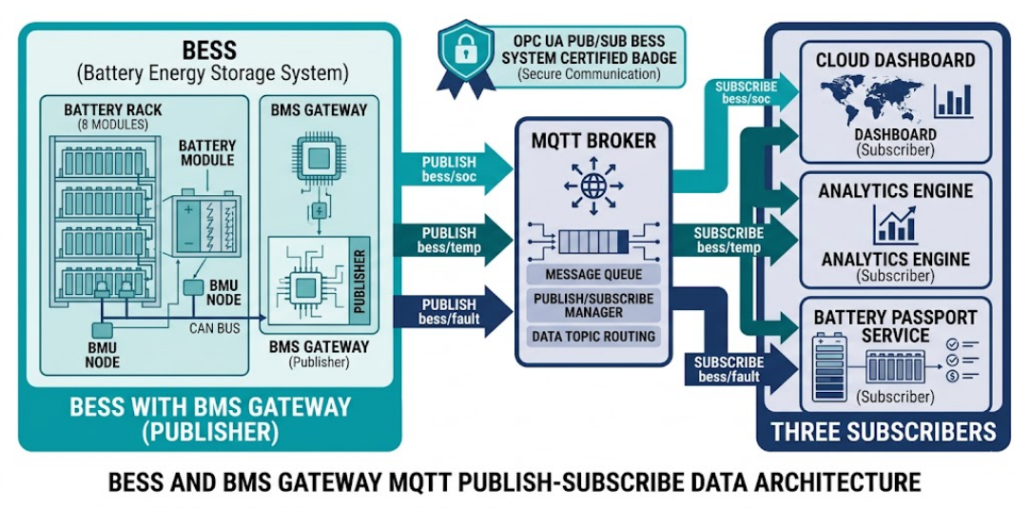

6. MQTT — The Cloud Telemetry BESS Communication Protocol

MQTT is a lightweight protocol for cloud telemetry. It is now one of the most popular BESS communication protocols for real-time monitoring and remote dashboards.

So if you want to stream battery data to the cloud, MQTT is the best place to start.

How MQTT Works in a BESS

MQTT uses a broker between publishers and subscribers. The BMS gateway publishes data — such as state of charge, temperature, and fault codes — to the broker.

Then cloud dashboards subscribe and receive that data in near real time. Also, the publisher-subscriber model means you can add new cloud apps without touching any hardware.
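The publisher-subscriber model can be sketched without any network code. The example below implements MQTT's topic wildcard rules (`+` for one level, `#` for all remaining levels) and a toy in-memory broker. The topic names are hypothetical, and a real deployment would use an actual broker and client library.

```python
from collections import defaultdict

def topic_matches(topic_filter: str, topic: str) -> bool:
    """MQTT wildcard matching: '+' matches one level, '#' matches all remaining levels."""
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(filter_levels):
        if level == "#":
            return True
        if i >= len(topic_levels):
            return False
        if level != "+" and level != topic_levels[i]:
            return False
    return len(filter_levels) == len(topic_levels)

class InMemoryBroker:
    """Toy stand-in for a broker: routes each message to every matching subscriber."""
    def __init__(self):
        self._subscriptions = defaultdict(list)

    def subscribe(self, topic_filter, callback):
        self._subscriptions[topic_filter].append(callback)

    def publish(self, topic, payload):
        for topic_filter, callbacks in self._subscriptions.items():
            if topic_matches(topic_filter, topic):
                for callback in callbacks:
                    callback(topic, payload)

broker = InMemoryBroker()
dashboard, archive = [], []
broker.subscribe("bess/+/soc", lambda t, p: dashboard.append((t, p)))  # SoC only
broker.subscribe("bess/#", lambda t, p: archive.append((t, p)))        # everything

broker.publish("bess/rack1/soc", "80.5")
broker.publish("bess/rack1/temp", "31")
print(dashboard)  # [('bess/rack1/soc', '80.5')]
print(len(archive))  # 2
```

Adding the archive subscriber required no change to the publisher. That decoupling is what lets new cloud apps attach to an existing BESS data stream.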

Furthermore, a gateway can map IEC 61850 data objects to MQTT topics. So a single gateway can serve both the grid and the cloud at the same time.

MQTT and the EU Battery Passport

The EU Battery Regulation (EU) 2023/1542 introduces a digital Battery Passport, which applies to industrial batteries above 2 kWh from February 2027. MQTT is well-suited to feeding Battery Passport data exports because of its lightweight, streaming design.

As a result, MQTT is increasingly specified alongside IEC 61850 in European BESS projects. So it is becoming a standard part of the cloud layer in most modern designs.

| STRENGTHS | LIMITATIONS |
|---|---|
| ✓ Very lightweight: low bandwidth and CPU use | ✗ No built-in BESS data model: custom topics needed |
| ✓ Best choice for high-frequency streaming data | ✗ Not suitable for direct control commands |
| ✓ Native support in AWS IoT Core and Azure IoT Hub | ✗ QoS levels must be configured carefully |
| ✓ Publisher-subscriber model is flexible and scalable | ✗ TLS must be switched on manually |

Used for: Cloud telemetry, remote monitoring, Battery Passport exports, IIoT analytics

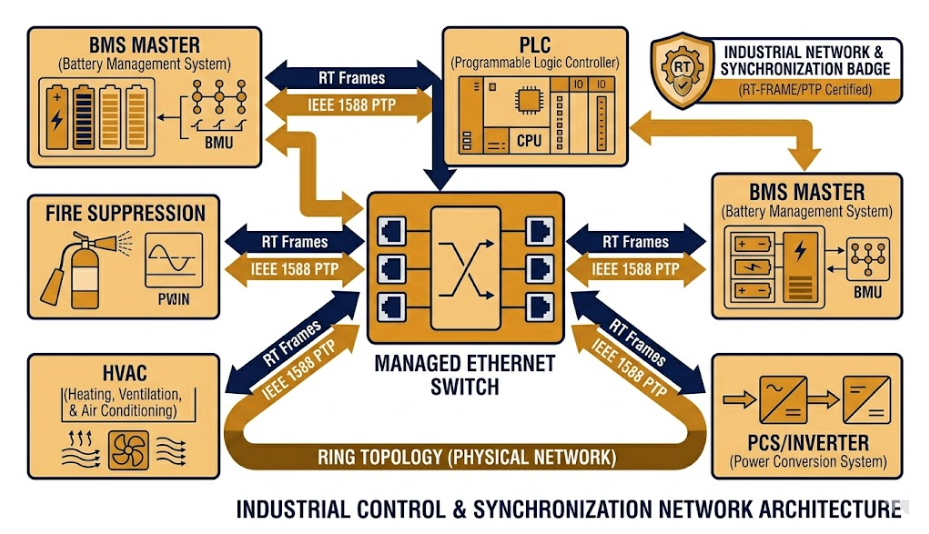

7. PROFINET and EtherNet/IP — Real-Time BESS Communication Protocols

PROFINET and EtherNet/IP are Industrial Ethernet protocols. They are used inside containerised BESS units where Modbus TCP is not fast or precise enough.

So if your BESS has a PLC controlling HVAC, fire suppression, and the inverter, these protocols are likely the right choice.

When to Use These Real-Time BESS Communication Protocols

Modbus TCP is fine for most BMS-to-EMS links. But it cannot guarantee the timing needed for fast power electronics.

PROFINET and EtherNet/IP, in contrast, are designed for deterministic, cyclic data exchange. When the network is engineered for it, they deliver messages within a fixed time window. As a result, charge and discharge commands arrive with predictable timing.

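Also, both ecosystems offer precise clock synchronisation. EtherNet/IP uses CIP Sync, which is based on IEEE 1588 Precision Time Protocol, and PROFINET IRT has its own synchronisation mechanism. This can keep BESS components synchronised to within microseconds. Therefore, they suit frequency regulation services that need sub-second response.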

PROFINET vs EtherNet/IP — Which One Should You Choose?

PROFINET is the standard choice in Europe and Asia. It works best with Siemens TIA Portal and Siemens PLCs.

EtherNet/IP, however, is more common in North America. It is the native protocol for Rockwell Automation hardware. So the right choice usually depends on which PLC the project already uses.

| STRENGTHS | LIMITATIONS |
|---|---|
| ✓ Deterministic real-time communication | ✗ Vendor lock-in: PROFINET and EtherNet/IP are not compatible |
| ✓ Gigabit Ethernet capable: high throughput | ✗ Higher infrastructure cost than Modbus TCP |
| ✓ IEEE 1588 PTP for microsecond synchronisation | ✗ Not used for utility or cloud communication |
| ✓ Tight integration with Siemens (PROFINET) and Rockwell (EtherNet/IP) | ✗ Needs managed switches with QoS and VLAN support |

Used for: BMS ↔ PCS sync, containerised BESS with PLC, auxiliary system automation

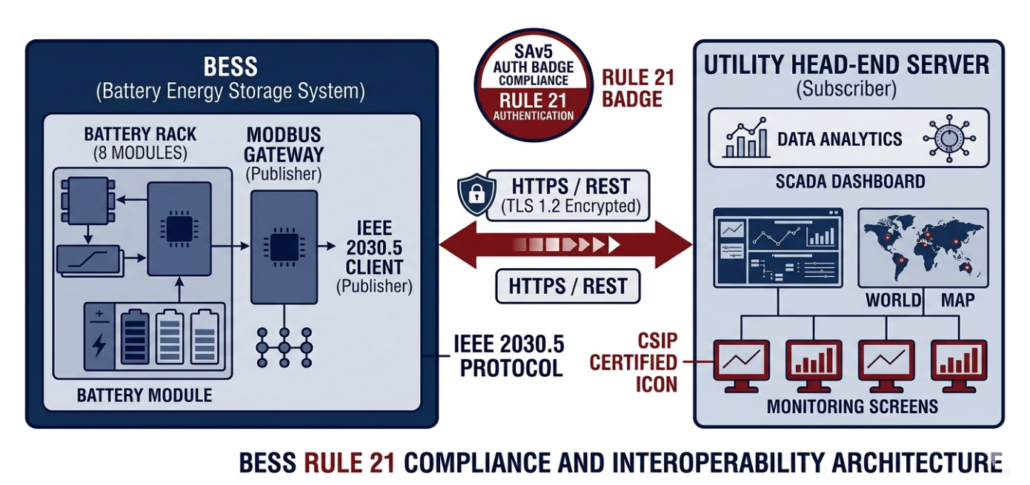

8. IEEE 2030.5 — The Compliance BESS Communication Protocol

IEEE 2030.5 is a secure, RESTful protocol for connecting BESS to utility systems. California Rule 21 names it as the default communication protocol for inverter-based resources, including BESS, that interconnect with the state's investor-owned utilities.

So if your project is in California — or a state adopting similar rules — you will need this protocol.

Why IEEE 2030.5 Is the Most Secure BESS Communication Protocol

Unlike Modbus or DNP3, IEEE 2030.5 requires TLS 1.2 on every connection. There is no optional configuration — it is always on.

Also, it uses standard HTTPS calls. So it fits naturally into modern IT networks. As a result, integration with utility head-end systems is simpler than with legacy serial protocols.

Using IEEE 2030.5 Without Replacing Your BESS Hardware

Most existing BESS hardware does not natively support IEEE 2030.5. However, a protocol gateway solves this easily.

The gateway translates from SunSpec Modbus or DNP3 on the device side to IEEE 2030.5 on the utility side. So operators can achieve full Rule 21 compliance without replacing any existing field hardware.

In addition, more US states and international regulators are expected to adopt similar DER rules by 2030. Therefore, specifying IEEE 2030.5 gateway support today future-proofs the asset.

| STRENGTHS | LIMITATIONS |
|---|---|
| ✓ TLS 1.2 mandatory: security built in | ✗ Primarily a North American standard |
| ✓ RESTful HTTPS fits modern networks | ✗ REST polling too slow for fast control loops |
| ✓ California Rule 21 and CSIP compliant | ✗ Needs specialist Rule 21 / CSIP knowledge |
| ✓ Works via gateway: no hardware replacement needed | ✗ Smaller vendor ecosystem than DNP3 or Modbus |

Used for: BESS DER interconnection, California Rule 21, utility scheduling and monitoring

All BESS Communication Protocols Compared

The table below compares all eight BESS communication protocols side by side. Use it to quickly find the right protocol for each layer of your system.

| Protocol | Layer | Real-Time | Security | Utility | Cloud/IoT |
|---|---|---|---|---|---|
| Modbus RTU/TCP | BMS ↔ EMS/PCS | Polling | None | Via SCADA | No |
| CAN Bus | Cell ↔ BMS | Yes | None | No | No |
| IEC 61850 | EMS ↔ Grid | GOOSE, few ms | Opt. TLS | Yes | Via mapping |
| DNP3 | EMS ↔ Utility | Low latency | SAv5 | N. America | No |
| OPC UA | EMS ↔ Cloud | Near RT | Built in | Emerging | Yes |
| MQTT | EMS ↔ Cloud | Streaming | Opt. TLS | No | Yes |
| IEEE 2030.5 | EMS ↔ Utility | REST poll | TLS mandatory | Yes | Possible |
| PROFINET / EtherNet/IP | BMS ↔ PCS | Deterministic | Network-level | No | No |

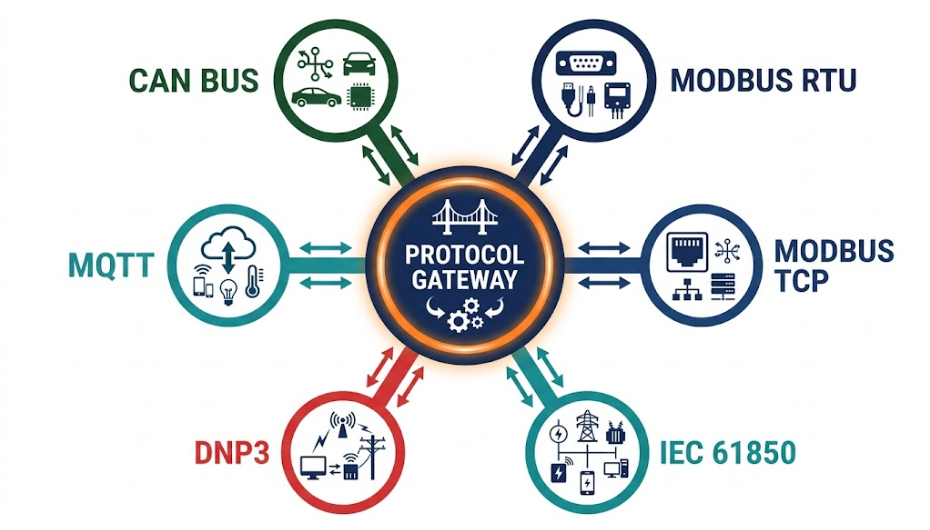

Why Every BESS Needs a Protocol Gateway

Almost no BESS project uses just one communication protocol. CAN Bus batteries connect to Modbus inverters. Modbus inverters connect to IEC 61850 substations. DNP3 talks to SCADA. MQTT streams data to the cloud.

So a protocol gateway is what holds the whole system together. It translates data between protocols in real time.

What a BESS Protocol Gateway Does